1. Introduction

Clustering is one of the central problems in network analysis and machine learning [Reference Newman, Watts and Strogatz59, Reference Ng, Jordan and Weiss60, Reference Shi and Malik65]. Many clustering algorithms make use of graph models, which represent pairwise relationships among data. A well-studied probabilistic model is the stochastic block model (SBM), which was first introduced in [Reference Holland, Laskey and Leinhardt39] as a random graph model that generates community structure with given ground truth for clusters so that one can study algorithm accuracy. The past decades have brought many notable results in the analysis of different algorithms and fundamental limits for community detection in SBMs in different settings [Reference Coja-Oghlan20, Reference Guédon and Vershynin37, Reference Montanari and Sen54, Reference Van70]. A major breakthrough was the proof of phase transition behaviours of community detection algorithms in various connectivity regimes [Reference Abbe, Bandeira and Hall2, Reference Abbe and Sandon5, Reference Bordenave, Lelarge and Massoulié12, Reference Massoulié52, Reference Mossel, Neeman and Sly55, Reference Mossel, Neeman and Sly57, Reference Mossel, Neeman and Sly58]. See the survey [Reference Abbe1] for more references.

Hypergraphs can represent more complex relationships among data [Reference Battiston, Cencetti, Iacopini, Latora, Lucas, Patania, Young and Petri10, Reference Benson, Gleich and Leskovec11], including recommendation systems [Reference Bu, Tan, Chen, Wang, Wu, Zhang and He13, Reference Li and Li49], computer vision [Reference Govindu34, Reference Wen, Du, Li, Bian and Lyu73], and biological networks [Reference Michoel and Nachtergaele53, Reference Tian, Hwang and Kuang68], and they have been shown empirically to have advantages over graphs [Reference Zhou, Huang and Schölkopf79]. Besides community detection problems, sparse hypergraphs and their spectral theory have also found applications in data science [Reference Harris and Zhu38, Reference Jain and Oh40, Reference Zhou and Zhu80], combinatorics [Reference Dumitriu and Zhu26, Reference Friedman and Wigderson29, Reference Soma and Yoshida66], and statistical physics [Reference Cáceres, Misobuchi and Pimentel14, Reference Sen64].

With the motivation from a broad set of applications, many efforts have been made in recent years to study community detection on random hypergraphs. The hypergraph stochastic block model (HSBM), as a generalisation of graph SBM, was first introduced and studied in [Reference Ghoshdastidar and Dukkipati31]. In this model, we observe a random uniform hypergraph where each hyperedge appears independently with some given probability depending on the community structure of the vertices in the hyperedge.

Succinctly put, the HSBM recovery problem is to find the ground truth clusters either approximately or exactly, given a sample hypergraph and estimates of model parameters. We may ask the following questions about the quality of the solutions (see [Reference Abbe1] for further details in the graph case).

1. Exact recovery (strong consistency): With high probability, find all clusters exactly (up to permutation).

2. Almost exact recovery (weak consistency): With high probability, find a partition of the vertex set such that at most

$o(n)$ vertices are misclassified.

$o(n)$ vertices are misclassified.3. Partial recovery: Given a fixed

$\gamma \in (0.5,1)$, with high probability, find a partition of the vertex set such that at least a fraction

$\gamma \in (0.5,1)$, with high probability, find a partition of the vertex set such that at least a fraction  $\gamma$ of the vertices are clustered correctly.

$\gamma$ of the vertices are clustered correctly.4. Weak recovery (detection): With high probability, find a partition correlated with the true partition.

For exact recovery of uniform HSBMs, it was shown that the phase transition occurs in the regime of logarithmic expected degrees in [16, Reference Eli Chien, Lin and Wang17, Reference Lin, Eli Chien and Wang50]. The thresholds are given for binary [Reference Gaudio and Joshi30, Reference Kim, Bandeira and Goemans43] and multiple [Reference Zhang and Tan77] community cases, by generalising the techniques in [Reference Abbe, Bandeira and Hall2–Reference Abbe and Sandon4]. After our work appeared on arXiv, thresholds for exact recovery on non-uniform HSBMs were given by [Reference Dumitriu and Wang25, Reference Wang71]. Strong consistency on the degree-corrected non-uniform HSBM was studied in [Reference Deng, Xu and Ying24]. Spectral methods were considered in [6, 16, Reference Cole and Zhu21, Reference Gaudio and Joshi30, Reference Yuan, Zhao and Zhao75, Reference Zhang and Tan77], while semidefinite programming methods were analysed in [Reference Alaluusua, Avrachenkov, Vinay Kumar and Leskelä8, Reference Kim, Bandeira and Goemans43, Reference Lee, Kim and Chung46]. Weak consistency for HSBMs was studied in [16, Reference Eli Chien, Lin and Wang17, Reference Ghoshdastidar and Dukkipati32, Reference Ghoshdastidar and Dukkipati33, Reference Ke, Shi and Xia42].

For detection of the HSBM, the authors of [Reference Angelini, Caltagirone, Krzakala and Zdeborová9] proposed a conjecture that the phase transition occurs in the regime of constant expected degrees. The positive part of the conjecture for the binary and multi-block case was solved in [Reference Pal and Zhu62] and [Reference Stephan and Zhu67], respectively. Their algorithms can output a partition better than a random guess when above the Kesten-Stigum threshold, but can not guarantee the correctness ratio. [Reference Gu and Pandey35, Reference Gu and Polyanskiy36] proved that detection is impossible and the Kesten-Stigum threshold is tight for ![]() $m$-uniform hypergraphs with binary communities when

$m$-uniform hypergraphs with binary communities when ![]() $m =3, 4$, while KS threshold is not tight when

$m =3, 4$, while KS threshold is not tight when ![]() $m\geq 7$, and some regimes remain unknown.

$m\geq 7$, and some regimes remain unknown.

1.1. Non-uniform hypergraph stochastic block model

The non-uniform HSBM was first studied in [Reference Ghoshdastidar and Dukkipati32], which removed the uniform hypergraph assumption in previous works, and it is a more realistic model to study higher-order interaction on networks [Reference Lung, Gaskó and Suciu51, Reference Wen, Du, Li, Bian and Lyu73]. It can be seen as a superposition of several uniform HSBMs with different model parameters. We first define the uniform HSBM in our setting and extend it to non-uniform hypergraphs.

Definition 1.1 (Uniform HSBM). Let ![]() $V=\{V_1,\dots V_k\}$ be a partition of the set

$V=\{V_1,\dots V_k\}$ be a partition of the set ![]() $[n]$ into

$[n]$ into ![]() $k$ blocks of size

$k$ blocks of size ![]() $\frac{n}{k}$ (assuming

$\frac{n}{k}$ (assuming ![]() $n$ is divisible by

$n$ is divisible by ![]() $k$). Let

$k$). Let ![]() $m \in \mathbb{N}$ be some fixed integer. For any set of

$m \in \mathbb{N}$ be some fixed integer. For any set of ![]() $m$ distinct vertices

$m$ distinct vertices ![]() $i_1,\dots i_m$, a hyperedge

$i_1,\dots i_m$, a hyperedge ![]() $\{i_1,\dots i_m\}$ is generated with probability

$\{i_1,\dots i_m\}$ is generated with probability ![]() $a_m/\binom{n}{m-1}$ if vertices

$a_m/\binom{n}{m-1}$ if vertices ![]() $i_1,\dots i_m$ are in the same block; otherwise with probability

$i_1,\dots i_m$ are in the same block; otherwise with probability ![]() $b_m/ \binom{n}{m-1}$. We denote this distribution on the set of

$b_m/ \binom{n}{m-1}$. We denote this distribution on the set of ![]() $m$-uniform hypergraphs as

$m$-uniform hypergraphs as

Definition 1.2 (Non-uniform HSBM). Let ![]() $H = (V, E)$ be a non-uniform random hypergraph, which can be considered as a collection of

$H = (V, E)$ be a non-uniform random hypergraph, which can be considered as a collection of ![]() $m$-uniform hypergraphs, i.e.,

$m$-uniform hypergraphs, i.e., ![]() $H = \bigcup _{m = 2}^{M} H_m$ with each

$H = \bigcup _{m = 2}^{M} H_m$ with each ![]() $H_m$ sampled from (1).

$H_m$ sampled from (1).

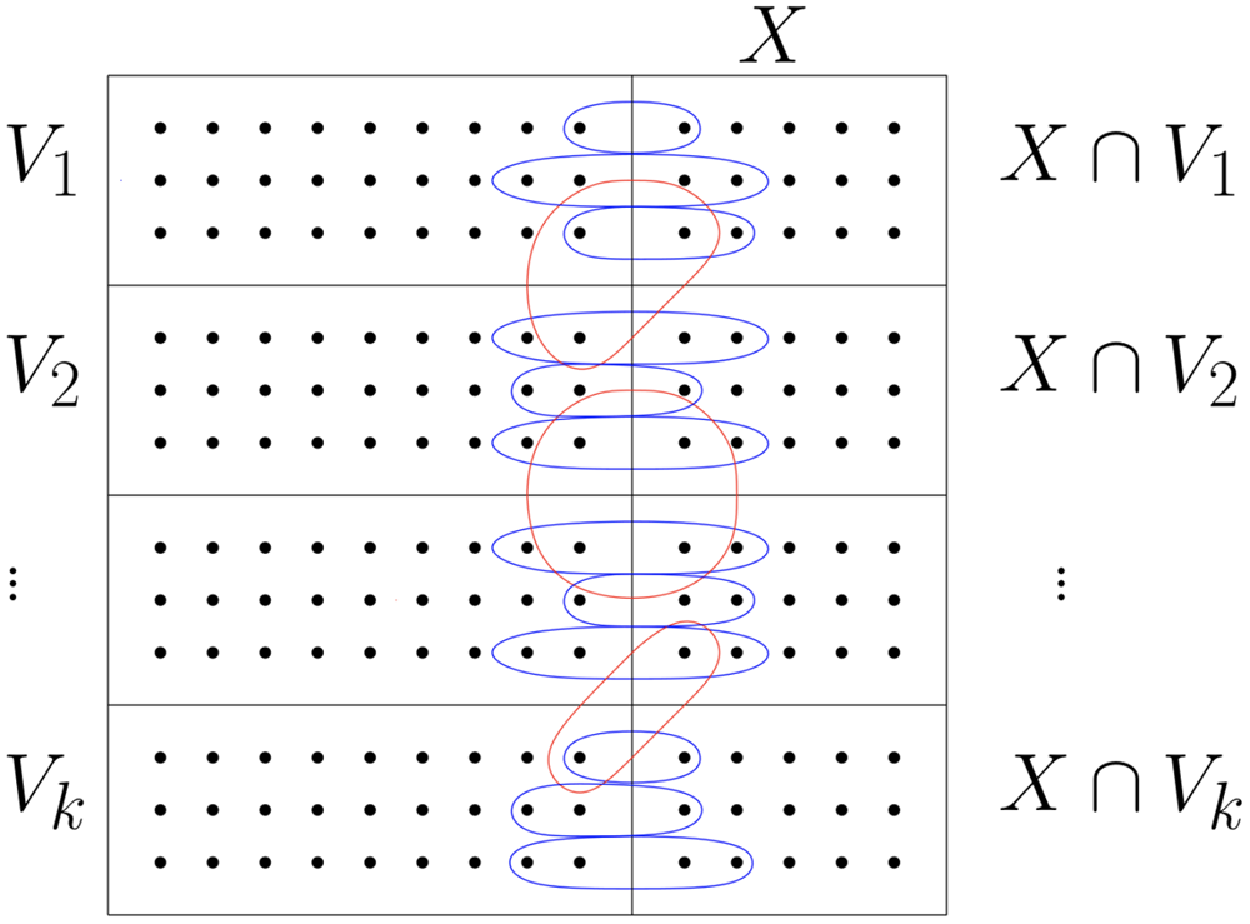

Examples of ![]() $2$-uniform and

$2$-uniform and ![]() $3$-uniform HSBM, and an example of non-uniform HSBM with

$3$-uniform HSBM, and an example of non-uniform HSBM with ![]() ${\mathcal M} = \{2, 3\}$ and

${\mathcal M} = \{2, 3\}$ and ![]() $k=3$ is displayed in Fig. 1a, b, c respectively.

$k=3$ is displayed in Fig. 1a, b, c respectively.

Figure 1. An example of non-uniform HSBM sampled from model 1.2.

1.2. Main results

To illustrate our main results, we first introduce the concepts ![]() $\gamma$-correctness and sigal-to-noise ratio to measure the accuracy of the obtained partitions.

$\gamma$-correctness and sigal-to-noise ratio to measure the accuracy of the obtained partitions.

Definition 1.3 (![]() $\gamma$-correctness). Suppose we have

$\gamma$-correctness). Suppose we have ![]() $k$ disjoint blocks

$k$ disjoint blocks ![]() $V_1,\dots, V_k$. A collection of subsets

$V_1,\dots, V_k$. A collection of subsets ![]() $\widehat{V}_1,\dots, \widehat{V}_k$ of

$\widehat{V}_1,\dots, \widehat{V}_k$ of ![]() $V$ is

$V$ is ![]() $\gamma$-correct if

$\gamma$-correct if ![]() $|V_i\cap \widehat{V}_i|\geq \gamma |V_i|$ for all

$|V_i\cap \widehat{V}_i|\geq \gamma |V_i|$ for all ![]() $i\in [k]$.

$i\in [k]$.

Definition 1.4. For model 1.2 under Assumption 1.5, we define the signal-to-noise ratio (![]() $\mathrm{SNR}$) as

$\mathrm{SNR}$) as

\begin{align} \mathrm{SNR}_{{\mathcal M}}(k) \,:\!=\,\frac{ \left [\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} \right ) \right ]^2 }{\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} + b_m \right )} \,. \end{align}

\begin{align} \mathrm{SNR}_{{\mathcal M}}(k) \,:\!=\,\frac{ \left [\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} \right ) \right ]^2 }{\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} + b_m \right )} \,. \end{align}

Let ![]() ${\mathcal M}_{\max }$ denote the maximum element in the set

${\mathcal M}_{\max }$ denote the maximum element in the set ![]() $\mathcal M$. The following constant

$\mathcal M$. The following constant ![]() $C_{{\mathcal M}}(k)$ is used to characterise the accuracy of the clustering result,

$C_{{\mathcal M}}(k)$ is used to characterise the accuracy of the clustering result,

Note that a non-uniform HSBM can be seen as a collection of noisy observations for the same underlying community structure through several uniform HSBMs of different orders. A possible issue is that some uniform hypergraphs with small SNR might not be informative (if we observe an ![]() $m$-uniform hypergraph with parameters

$m$-uniform hypergraph with parameters ![]() $a_m=b_m$, including hyperedge information from it ultimately increases the noise). To improve our error rate guarantees, we start by adding a pre-processing step (Algorithm 3) for hyperedge selection according to SNR and then apply the algorithm on the sub-hypergraph with maximal SNR.

$a_m=b_m$, including hyperedge information from it ultimately increases the noise). To improve our error rate guarantees, we start by adding a pre-processing step (Algorithm 3) for hyperedge selection according to SNR and then apply the algorithm on the sub-hypergraph with maximal SNR.

We state the following assumption that will be used in our analysis of Algorithms 1 (![]() $k=2$) and 2 (

$k=2$) and 2 (![]() $k\geq 3$).

$k\geq 3$).

Assumption 1.5. For each ![]() $m\in{\mathcal M}$, assume

$m\in{\mathcal M}$, assume ![]() $a_m, b_m$ are constants independent of

$a_m, b_m$ are constants independent of ![]() $n$, and

$n$, and ![]() $a_m \geq b_m$. Let

$a_m \geq b_m$. Let ![]() ${\mathcal M}_{\max }$ denote the maximum element in the set

${\mathcal M}_{\max }$ denote the maximum element in the set ![]() $\mathcal M$. Given

$\mathcal M$. Given ![]() $\nu \in (1/k, 1)$, assume that there exists a universal constant

$\nu \in (1/k, 1)$, assume that there exists a universal constant ![]() $C$ and some

$C$ and some ![]() $\nu$-dependent constant

$\nu$-dependent constant ![]() $C_{\nu } \gt 0$, such that

$C_{\nu } \gt 0$, such that

\begin{align} \sum _{m\in{\mathcal{M}}} (m-1)(a_m-b_m) &\geq C_{\nu } \sqrt{d}\cdot k^{{\mathcal{M}}_{\max }-1} \cdot \bigg ( 2^{3} \cdot \unicode{x1D7D9}_{\{ k = 2\}} + \sqrt{\log \Big (\frac{k}{1-\nu }\Big )} \cdot \unicode{x1D7D9}_{\{ k\geq 3\}} \bigg ) . \end{align}

\begin{align} \sum _{m\in{\mathcal{M}}} (m-1)(a_m-b_m) &\geq C_{\nu } \sqrt{d}\cdot k^{{\mathcal{M}}_{\max }-1} \cdot \bigg ( 2^{3} \cdot \unicode{x1D7D9}_{\{ k = 2\}} + \sqrt{\log \Big (\frac{k}{1-\nu }\Big )} \cdot \unicode{x1D7D9}_{\{ k\geq 3\}} \bigg ) . \end{align}

One does not have to take too large a ![]() $C$ for (4a); for example,

$C$ for (4a); for example, ![]() $C = (2^{1/{\mathcal M}_{\max }} - 1)^{-1/3}$ should suffice, but even smaller

$C = (2^{1/{\mathcal M}_{\max }} - 1)^{-1/3}$ should suffice, but even smaller ![]() $C$ may work. Both of the two inequalities above constant prevent the hypergraph from being too sparse, while (4b) also requires that the difference between in-block and across-blocks densities is large enough. The choices of

$C$ may work. Both of the two inequalities above constant prevent the hypergraph from being too sparse, while (4b) also requires that the difference between in-block and across-blocks densities is large enough. The choices of ![]() $C, C_{\nu }$ and their relationship will be discussed in Remark 5.15.

$C, C_{\nu }$ and their relationship will be discussed in Remark 5.15.

1.2.1. The 2-block case

We start with Algorithm 1, which outputs a ![]() $\gamma$-correct partition when the non-uniform HSBM

$\gamma$-correct partition when the non-uniform HSBM ![]() $H$ is sampled from model 1.2 with only

$H$ is sampled from model 1.2 with only ![]() $2$ communities. Inspired by the innovative graph algorithm in [Reference Chin, Rao and Van19], we generalise it to non-uniform hypergraphs while we provide a complete and detailed analysis at the same time.

$2$ communities. Inspired by the innovative graph algorithm in [Reference Chin, Rao and Van19], we generalise it to non-uniform hypergraphs while we provide a complete and detailed analysis at the same time.

Algorithm 1. Binary Partition

Theorem 1.6 (![]() $k=2$). Let

$k=2$). Let ![]() $\nu \in (0.5, 1)$ and

$\nu \in (0.5, 1)$ and ![]() $\rho = 2\exp ({-}C_{{\mathcal M}}(2) \cdot \mathrm{SNR}_{{\mathcal M}}(2))$ with

$\rho = 2\exp ({-}C_{{\mathcal M}}(2) \cdot \mathrm{SNR}_{{\mathcal M}}(2))$ with ![]() $\mathrm{SNR}_{{\mathcal M}}(k)$,

$\mathrm{SNR}_{{\mathcal M}}(k)$, ![]() $C_{{\mathcal M}}(k)$ defined in (2), (3), and let

$C_{{\mathcal M}}(k)$ defined in (2), (3), and let ![]() $\gamma = \max \{\nu,\, 1 - 2\rho \}$. Then under Assumption 1.5, Algorithm 1 outputs a

$\gamma = \max \{\nu,\, 1 - 2\rho \}$. Then under Assumption 1.5, Algorithm 1 outputs a ![]() $\gamma$-correct partition for sufficiently large

$\gamma$-correct partition for sufficiently large ![]() $n$ with probability at least

$n$ with probability at least ![]() $1 - O(n^{-2})$.

$1 - O(n^{-2})$.

1.2.2. The  $k$-block case

$k$-block case

For the multi-community case (![]() $k \geq 3$), another algorithm with more subroutines is developed in Algorithm 2, which outputs a

$k \geq 3$), another algorithm with more subroutines is developed in Algorithm 2, which outputs a ![]() $\gamma$-correct partition with high probability. We state the result as follows.

$\gamma$-correct partition with high probability. We state the result as follows.

Algorithm 2. General Partition

Theorem 1.7 (![]() $k\geq 3$). Let

$k\geq 3$). Let ![]() $\nu \in (1/k, 1)$ and

$\nu \in (1/k, 1)$ and ![]() $\rho = \exp ({-}C_{{\mathcal M}}(k) \cdot \mathrm{SNR}_{{\mathcal M}}(k))$ with

$\rho = \exp ({-}C_{{\mathcal M}}(k) \cdot \mathrm{SNR}_{{\mathcal M}}(k))$ with ![]() $\mathrm{SNR}_{{\mathcal M}}(k), C_{{\mathcal M}}(k)$ defined in (2), (3), and let

$\mathrm{SNR}_{{\mathcal M}}(k), C_{{\mathcal M}}(k)$ defined in (2), (3), and let ![]() $\gamma = \max \{\nu,\, 1 - k\rho \}$. Then under Assumption 1.5, Algorithm 2 outputs a

$\gamma = \max \{\nu,\, 1 - k\rho \}$. Then under Assumption 1.5, Algorithm 2 outputs a ![]() $\gamma$-correct partition for sufficiently large

$\gamma$-correct partition for sufficiently large ![]() $n$ with probability at least

$n$ with probability at least ![]() $1 - O(n^{-2})$.

$1 - O(n^{-2})$.

The time complexities of Algorithms 1 and 2 are ![]() $O(n^{3})$, with the bulk of time spent in Stage 1 by the spectral method.

$O(n^{3})$, with the bulk of time spent in Stage 1 by the spectral method.

To the best of our knowledge, Theorems 1.6 and 1.7 are the first results for partial recovery of non-uniform HSBMs. When the number of blocks is ![]() $2$, Algorithm 1 guarantees a better error rate for partial recovery as in Theorem 1.6. This happens because Algorithm 1 does not need the merging routine in Algorithm 2: if one of the communities is obtained, then the other one is also obtained via the complement.

$2$, Algorithm 1 guarantees a better error rate for partial recovery as in Theorem 1.6. This happens because Algorithm 1 does not need the merging routine in Algorithm 2: if one of the communities is obtained, then the other one is also obtained via the complement.

Remark 1.8. Taking ![]() ${\mathcal M} = \{2 \}$, Theorem 1.7 can be reduced to [Reference Chin, Rao and Van19, Lemma 9] for the graph case. The failure probability

${\mathcal M} = \{2 \}$, Theorem 1.7 can be reduced to [Reference Chin, Rao and Van19, Lemma 9] for the graph case. The failure probability ![]() $O(n^{-2})$ can be decreased to

$O(n^{-2})$ can be decreased to ![]() $O(n^{-p})$ for any

$O(n^{-p})$ for any ![]() $p\gt 0$, as long as one is willing to pay the price by increasing the constants

$p\gt 0$, as long as one is willing to pay the price by increasing the constants ![]() $C$,

$C$, ![]() $C_v$ in (4a), (4b).

$C_v$ in (4a), (4b).

Our Algorithms 1 and 2 can be summarised in 3 steps:

1. Hyperedge selection: select hyperedges of certain sizes to provide the maximal signal-to-noise ratio (SNR) for the induced sub-hypergraph.

2. Spectral partition: construct a regularised adjacency matrix and obtain an approximate partition based on singular vectors (first approximation).

3. Correction and merging: incorporate the hyperedge information from adjacency tensors to upgrade the error rate guarantee (second, better approximation).

The algorithm requires the input of model parameters ![]() $a_m,b_m$, which can be estimated by counting cycles in hypergraphs as shown in [Reference Mossel, Neeman and Sly55, Reference Yuan, Liu, Feng and Shang74]. Estimation of the number of blocks can be done by counting the outliers in the spectrum of the non-backtracking operator, e.g., as shown (for different regimes and different parameters) in [Reference Angelini, Caltagirone, Krzakala and Zdeborová9, Reference Le and Levina44, Reference Saade, Krzakala and Zdeborová63, Reference Stephan and Zhu67].

$a_m,b_m$, which can be estimated by counting cycles in hypergraphs as shown in [Reference Mossel, Neeman and Sly55, Reference Yuan, Liu, Feng and Shang74]. Estimation of the number of blocks can be done by counting the outliers in the spectrum of the non-backtracking operator, e.g., as shown (for different regimes and different parameters) in [Reference Angelini, Caltagirone, Krzakala and Zdeborová9, Reference Le and Levina44, Reference Saade, Krzakala and Zdeborová63, Reference Stephan and Zhu67].

1.2.3. Weak consistency

Throughout the proofs for Theorems 1.6 and 1.7, we make only one assumption on the growth or finiteness of ![]() $d$ and

$d$ and ![]() $\mathrm{SNR}_{{\mathcal M}}(k)$, and it happens in estimating the failure probability as noted in Remark 1.10. Consequently, the corollary below follows, which covers the case when

$\mathrm{SNR}_{{\mathcal M}}(k)$, and it happens in estimating the failure probability as noted in Remark 1.10. Consequently, the corollary below follows, which covers the case when ![]() $d$ and

$d$ and ![]() $\mathrm{SNR}_{{\mathcal M}}(k)$ grow with

$\mathrm{SNR}_{{\mathcal M}}(k)$ grow with ![]() $n$.

$n$.

Corollary 1.9 (Weak consistency). For fixed ![]() $M$ and

$M$ and ![]() $k$, if

$k$, if ![]() $\mathrm{SNR}_{{\mathcal M}}(k)$ defined in (2) goes to infinity as

$\mathrm{SNR}_{{\mathcal M}}(k)$ defined in (2) goes to infinity as ![]() $n\to \infty$ and

$n\to \infty$ and ![]() $\mathrm{SNR}_{{\mathcal M}}(k) = o(\log n)$, then with probability

$\mathrm{SNR}_{{\mathcal M}}(k) = o(\log n)$, then with probability ![]() $1- O(n^{-2})$, Algorithms 1 and 2 output a partition with only

$1- O(n^{-2})$, Algorithms 1 and 2 output a partition with only ![]() $o(n)$ misclassified vertices.

$o(n)$ misclassified vertices.

The paper [Reference Ghoshdastidar and Dukkipati32] also proves weak consistency for non-uniform HSBMs, but in a much denser regime than we do here (![]() $d = \Omega (\log ^2(n))$, instead of

$d = \Omega (\log ^2(n))$, instead of ![]() $d=\omega (1)$, as in Corollary 1.9). In fact, we now know that strong consistency should be achievable in this denser regime, as [Reference Dumitriu and Wang25] shows. When restricting to the uniform HSBM case, Corollary 1.9 achieves weak consistency under the same sparsity condition as in [Reference Ahn, Lee and Suh7].

$d=\omega (1)$, as in Corollary 1.9). In fact, we now know that strong consistency should be achievable in this denser regime, as [Reference Dumitriu and Wang25] shows. When restricting to the uniform HSBM case, Corollary 1.9 achieves weak consistency under the same sparsity condition as in [Reference Ahn, Lee and Suh7].

Remark 1.10. To be precise, Algorithms 1 and 2 work optimally in the ![]() $\mathrm{SNR}_{{\mathcal M}} = o (\log n)$ regime. When

$\mathrm{SNR}_{{\mathcal M}} = o (\log n)$ regime. When ![]() $\mathrm{SNR}_{{\mathcal M}}(k) = \Omega (\log n)$, it implies that

$\mathrm{SNR}_{{\mathcal M}}(k) = \Omega (\log n)$, it implies that ![]() $\rho = n^{-\Omega (1)}$, and one may have

$\rho = n^{-\Omega (1)}$, and one may have ![]() $e^{-n\rho } = \Omega (1)$ in (31), which may not decrease to

$e^{-n\rho } = \Omega (1)$ in (31), which may not decrease to ![]() $0$ as

$0$ as ![]() $n\to \infty$. Therefore the theoretical guarantees of Algorithms 5 and 6 may not remain valid. This, however, should not matter: in the regime when

$n\to \infty$. Therefore the theoretical guarantees of Algorithms 5 and 6 may not remain valid. This, however, should not matter: in the regime when ![]() $\mathrm{SNR}_{{\mathcal M}}(k) = \Omega (\log n)$, strong (rather than weak) consistency is expected, as per [Reference Dumitriu and Wang25]. Therefore, the regime of interest for weak consistency is

$\mathrm{SNR}_{{\mathcal M}}(k) = \Omega (\log n)$, strong (rather than weak) consistency is expected, as per [Reference Dumitriu and Wang25]. Therefore, the regime of interest for weak consistency is ![]() $\mathrm{SNR}_{{\mathcal M}} = o(\log n)$.

$\mathrm{SNR}_{{\mathcal M}} = o(\log n)$.

1.3. Comparison with existing results

Although many algorithms and theoretical results have been developed for hypergraph community detection, most of them are restricted to uniform hypergraphs, and few results are known for non-uniform ones. We will discuss the most relevant results.

In [Reference Ke, Shi and Xia42], the authors studied the degree-corrected HSBM with general connection probability parameters by using a tensor power iteration algorithm and Tucker decomposition. Their algorithm achieves weak consistency for uniform hypergraphs when the average degree is ![]() $\omega (\log ^2 n)$, which is the regime complementary to the regime we studied here. They discussed a way to generalise the algorithm to non-uniform hypergraphs, but the theoretical analysis remains open. The recent paper [Reference Zhen and Wang78] analysed non-uniform hypergraph community detection by using hypergraph embedding and optimisation algorithms and obtained weak consistency when the expected degrees are of

$\omega (\log ^2 n)$, which is the regime complementary to the regime we studied here. They discussed a way to generalise the algorithm to non-uniform hypergraphs, but the theoretical analysis remains open. The recent paper [Reference Zhen and Wang78] analysed non-uniform hypergraph community detection by using hypergraph embedding and optimisation algorithms and obtained weak consistency when the expected degrees are of ![]() $\omega (\log n)$, again a complementary regime to ours. Results on spectral norm concentration of sparse random tensors were obtained in [Reference Cooper23, Reference Jain and Oh40, Reference Lei, Chen and Lynch47, Reference Nguyen, Drineas and Tran61, Reference Zhou and Zhu80], but no provable tensor algorithm in the bounded expected degree case is known. Testing for the community structure for non-uniform hypergraphs was studied in [Reference Jin, Ke and Liang41, Reference Yuan, Liu, Feng and Shang74], which is a problem different from community detection.

$\omega (\log n)$, again a complementary regime to ours. Results on spectral norm concentration of sparse random tensors were obtained in [Reference Cooper23, Reference Jain and Oh40, Reference Lei, Chen and Lynch47, Reference Nguyen, Drineas and Tran61, Reference Zhou and Zhu80], but no provable tensor algorithm in the bounded expected degree case is known. Testing for the community structure for non-uniform hypergraphs was studied in [Reference Jin, Ke and Liang41, Reference Yuan, Liu, Feng and Shang74], which is a problem different from community detection.

In our approach, we relied on knowing the tensors for each uniform hypergraph. However, in computations, we only ran the spectral algorithm on the adjacency matrix of the entire hypergraph since the stability of tensor algorithms does not yet come with guarantees due to the lack of concentration, and for non-uniform hypergraphs, ![]() $M-1$ adjacency tensors would be needed. This approach presented the challenge that, unlike for graphs, the adjacency matrix of a random non-uniform hypergraph has dependent entries, and the concentration properties of such a random matrix were previously unknown. We overcame this issue and proved concentration bounds from scratch down to the bounded degree regime. Similar to [Reference Feige and Ofek28, Reference Le, Levina and Vershynin45], we provided here a regularisation analysis by removing rows in the adjacency matrix with large row sums (suggestive of large degree vertices) and proving a concentration result for the regularised matrix down to the bounded expected degree regime (see Theorem 3.3).

$M-1$ adjacency tensors would be needed. This approach presented the challenge that, unlike for graphs, the adjacency matrix of a random non-uniform hypergraph has dependent entries, and the concentration properties of such a random matrix were previously unknown. We overcame this issue and proved concentration bounds from scratch down to the bounded degree regime. Similar to [Reference Feige and Ofek28, Reference Le, Levina and Vershynin45], we provided here a regularisation analysis by removing rows in the adjacency matrix with large row sums (suggestive of large degree vertices) and proving a concentration result for the regularised matrix down to the bounded expected degree regime (see Theorem 3.3).

In terms of partial recovery for hypergraphs, our results are new, even in the uniform case. In [Reference Ahn, Lee and Suh7, Theorem 1], for uniform hypergraphs, the authors showed detection (not partial recovery) is possible when the average degree is ![]() $\Omega (1)$; in addition, the error rate is not exponential in the model parameters, but only polynomial. Here, we mention two more results for the graph case. In the arbitrarily slowly growing degrees regime, it was shown in [Reference Fei and Chen27, Reference Zhang and Zhou76] that the error rate in (2) is optimal up to a constant in the exponent. In the bounded expected degrees regime, the authors in [Reference Chin and Sly18, Reference Mossel, Neeman and Sly56] provided algorithms that can asymptotically recover the optimal fraction of vertices, when the signal-to-noise ratio is large enough. It’s an open problem to extend their analysis to obtain a minimax error rate for hypergraphs.

$\Omega (1)$; in addition, the error rate is not exponential in the model parameters, but only polynomial. Here, we mention two more results for the graph case. In the arbitrarily slowly growing degrees regime, it was shown in [Reference Fei and Chen27, Reference Zhang and Zhou76] that the error rate in (2) is optimal up to a constant in the exponent. In the bounded expected degrees regime, the authors in [Reference Chin and Sly18, Reference Mossel, Neeman and Sly56] provided algorithms that can asymptotically recover the optimal fraction of vertices, when the signal-to-noise ratio is large enough. It’s an open problem to extend their analysis to obtain a minimax error rate for hypergraphs.

In [Reference Ghoshdastidar and Dukkipati32], the authors considered weak consistency in a non-uniform HSBM model with a spectral algorithm based on the hypergraph Laplacian matrix, and showed that weak consistency is achievable if the expected degree is of ![]() $\Omega (\log ^2 n)$ with high probability [Reference Ghoshdastidar and Dukkipati31, Theorem 4.2]. Their algorithm can’t be applied to sparse regimes straightforwardly since the normalised Laplacian is not well-defined due to the existence of isolated vertices in the bounded degree case. In addition, our weak consistency results obtained here are valid as long as the expected degree is

$\Omega (\log ^2 n)$ with high probability [Reference Ghoshdastidar and Dukkipati31, Theorem 4.2]. Their algorithm can’t be applied to sparse regimes straightforwardly since the normalised Laplacian is not well-defined due to the existence of isolated vertices in the bounded degree case. In addition, our weak consistency results obtained here are valid as long as the expected degree is ![]() $\omega (1)$ and

$\omega (1)$ and ![]() $o(\log n)$, which is the entire set of problems on which weak consistency is expected. By contrast, in [Reference Ghoshdastidar and Dukkipati32], weak consistency is shown only when the minimum expected degree is

$o(\log n)$, which is the entire set of problems on which weak consistency is expected. By contrast, in [Reference Ghoshdastidar and Dukkipati32], weak consistency is shown only when the minimum expected degree is ![]() $\Omega (\log ^2(n))$, which is a regime complementary to ours and where exact recovery should (in principle) be possible: for example, this is known to be an exact recovery regime in the uniform case [Reference Eli Chien, Lin and Wang17, Reference Kim, Bandeira and Goemans43, Reference Lee, Kim and Chung46, Reference Zhang and Tan77].

$\Omega (\log ^2(n))$, which is a regime complementary to ours and where exact recovery should (in principle) be possible: for example, this is known to be an exact recovery regime in the uniform case [Reference Eli Chien, Lin and Wang17, Reference Kim, Bandeira and Goemans43, Reference Lee, Kim and Chung46, Reference Zhang and Tan77].

In subsequent works [Reference Dumitriu and Wang25, Reference Wang71] we proposed algorithms to achieve weak consistency. However, their methods can not cover the regime when the expected degree is ![]() $\Omega (1)$ due to the lack of concentration. Additionally, [Reference Wang, Pun, Wang, Wang and So72] proposed Projected Tensor Power Method as the refinement stage to achieve strong consistency, as long as the first stage partition is partially correct, as ours.

$\Omega (1)$ due to the lack of concentration. Additionally, [Reference Wang, Pun, Wang, Wang and So72] proposed Projected Tensor Power Method as the refinement stage to achieve strong consistency, as long as the first stage partition is partially correct, as ours.

1.4. Organization of the paper

In Section 2, we include the definitions of adjacency matrices of hypergraphs. The concentration results for the adjacency matrices are provided in Section 3. The algorithms for partial recovery are presented in Section 4. The proof for the correctness of our algorithms for Theorem 1.7 and Corollary 1.9 are given in Section 5. The proof of Theorem 1.6, as well as the proofs of many auxiliary lemmas and useful lemmas in the literature, are provided in the supplemental materials.

2. Preliminaries

Definition 2.1 (Adjacency tensor). Given an ![]() $m$-uniform hypergraph

$m$-uniform hypergraph ![]() $H_m=([n], E_m)$, we can associate to it an order-

$H_m=([n], E_m)$, we can associate to it an order- ![]() $m$ adjacency tensor

$m$ adjacency tensor ![]() $\boldsymbol{\mathcal{A}}^{(m)}$. For any

$\boldsymbol{\mathcal{A}}^{(m)}$. For any ![]() $m$-hyperedge

$m$-hyperedge ![]() $e = \{ i_1, \dots, i_m \}$, let

$e = \{ i_1, \dots, i_m \}$, let ![]() $\boldsymbol{\mathcal{A}}^{(m)}_e$ denote the corresponding entry

$\boldsymbol{\mathcal{A}}^{(m)}_e$ denote the corresponding entry ![]() $\boldsymbol{\mathcal{A}}_{[i_{1},\dots,i_{m}]}^{(m)}$, such that

$\boldsymbol{\mathcal{A}}_{[i_{1},\dots,i_{m}]}^{(m)}$, such that

Definition 2.2 (Adjacency matrix). For the non-uniform hypergraph ![]() $H$ sampled from model 1.2, let

$H$ sampled from model 1.2, let ![]() $\boldsymbol{\mathcal{A}}^{(m)}$ be the order-

$\boldsymbol{\mathcal{A}}^{(m)}$ be the order- ![]() $m$ adjacency tensor corresponding to the underlying

$m$ adjacency tensor corresponding to the underlying ![]() $m$-uniform hypergraph for each

$m$-uniform hypergraph for each ![]() $m\in{\mathcal M}$. The adjacency matrix

$m\in{\mathcal M}$. The adjacency matrix ![]() ${\mathbf{A}} \,:\!=\, [{\mathbf{A}}_{ij}]_{n \times n}$ of the non-uniform hypergraph

${\mathbf{A}} \,:\!=\, [{\mathbf{A}}_{ij}]_{n \times n}$ of the non-uniform hypergraph ![]() $H$ is defined by

$H$ is defined by

\begin{equation} {\mathbf{A}}_{ij} = \unicode{x1D7D9}_{\{ i \neq j\} } \cdot \sum _{m\in{\mathcal M}}\,\,\sum _{\substack{e\in E_m\\

\{i,j\}\subset e} }\boldsymbol{\mathcal{A}}^{(m)}_{e}\,. \end{equation}

\begin{equation} {\mathbf{A}}_{ij} = \unicode{x1D7D9}_{\{ i \neq j\} } \cdot \sum _{m\in{\mathcal M}}\,\,\sum _{\substack{e\in E_m\\

\{i,j\}\subset e} }\boldsymbol{\mathcal{A}}^{(m)}_{e}\,. \end{equation}

We compute the expectation of ![]() ${\mathbf{A}}$ first. In each

${\mathbf{A}}$ first. In each ![]() $m$-uniform hypergraph

$m$-uniform hypergraph ![]() $H_m$, two distinct vertices

$H_m$, two distinct vertices ![]() $i, j\in V$ with

$i, j\in V$ with ![]() $i \neq j$ are picked arbitrarily since our model does not allow for loops. Assume for a moment

$i \neq j$ are picked arbitrarily since our model does not allow for loops. Assume for a moment ![]() $\frac{n}{k} \in \mathbb{N}$, then the expected number of

$\frac{n}{k} \in \mathbb{N}$, then the expected number of ![]() $m$-hyperedge containing

$m$-hyperedge containing ![]() $i$ and

$i$ and ![]() $j$ can be computed as follows.

$j$ can be computed as follows.

• If

$i$ and

$i$ and  $j$ are from the same block, the

$j$ are from the same block, the  $m$-hyperedge is sampled with probability

$m$-hyperedge is sampled with probability  $a_m/\binom{n}{m-1}$ when the other

$a_m/\binom{n}{m-1}$ when the other  $m-2$ vertices are from the same block as

$m-2$ vertices are from the same block as  $i$,

$i$,  $j$, otherwise with probability

$j$, otherwise with probability  $b_m/\binom{n}{m-1}$. Then

$b_m/\binom{n}{m-1}$. Then

\begin{align*} \alpha _m\,:\!=\,{\mathbb{E}}{\mathbf{A}}_{ij} = \binom{\frac{n}{k} -2}{m-2} \frac{a_m}{\binom{n}{m-1}} + \left [ \binom{n-2}{m-2} - \binom{\frac{n}{k} - 2}{m-2} \right ]\frac{b_m}{\binom{n}{m-1}} \,. \end{align*}

\begin{align*} \alpha _m\,:\!=\,{\mathbb{E}}{\mathbf{A}}_{ij} = \binom{\frac{n}{k} -2}{m-2} \frac{a_m}{\binom{n}{m-1}} + \left [ \binom{n-2}{m-2} - \binom{\frac{n}{k} - 2}{m-2} \right ]\frac{b_m}{\binom{n}{m-1}} \,. \end{align*}

• If

$i$ and

$i$ and  $j$ are not from the same block, we sample the

$j$ are not from the same block, we sample the  $m$-hyperedge with probability

$m$-hyperedge with probability  $b_m/\binom{n}{m-1}$, and

$b_m/\binom{n}{m-1}$, and

\begin{align*} \beta _m \,:\!=\,{\mathbb{E}}{\mathbf{A}}_{ij} = \binom{n-2}{m-2} \frac{b_m}{\binom{n}{m-1}}\,. \end{align*}

\begin{align*} \beta _m \,:\!=\,{\mathbb{E}}{\mathbf{A}}_{ij} = \binom{n-2}{m-2} \frac{b_m}{\binom{n}{m-1}}\,. \end{align*}

By assumption ![]() $a_m \geq b_m$, then

$a_m \geq b_m$, then ![]() $\alpha _m \geq \beta _m$ for each

$\alpha _m \geq \beta _m$ for each ![]() $m\in{\mathcal M}$. Summing over

$m\in{\mathcal M}$. Summing over ![]() $m$, the expected adjacency matrix under the

$m$, the expected adjacency matrix under the ![]() $k$-block non-uniform

$k$-block non-uniform ![]() $\mathrm{HSBM}$ can be written as

$\mathrm{HSBM}$ can be written as

\begin{align} {\mathbb{E}}{\mathbf{A}} = \begin{bmatrix} \alpha{\mathbf{J}}_{\frac{n}{k}} & \quad \beta{\mathbf{J}}_{\frac{n}{k}} & \quad \cdots & \quad \beta{\mathbf{J}}_{\frac{n}{k}} \\[4pt]

\beta{\mathbf{J}}_{\frac{n}{k}} & \quad \alpha{\mathbf{J}}_{\frac{n}{k}} & \quad \cdots & \quad \beta{\mathbf{J}}_{\frac{n}{k}} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\beta{\mathbf{J}}_{\frac{n}{k}} & \quad \beta{\mathbf{J}}_{\frac{n}{k}} & \quad \cdots & \quad \alpha{\mathbf{J}}_{\frac{n}{k}} \end{bmatrix} - \alpha{\mathbf{I}}_{n}\,, \end{align}

\begin{align} {\mathbb{E}}{\mathbf{A}} = \begin{bmatrix} \alpha{\mathbf{J}}_{\frac{n}{k}} & \quad \beta{\mathbf{J}}_{\frac{n}{k}} & \quad \cdots & \quad \beta{\mathbf{J}}_{\frac{n}{k}} \\[4pt]

\beta{\mathbf{J}}_{\frac{n}{k}} & \quad \alpha{\mathbf{J}}_{\frac{n}{k}} & \quad \cdots & \quad \beta{\mathbf{J}}_{\frac{n}{k}} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\beta{\mathbf{J}}_{\frac{n}{k}} & \quad \beta{\mathbf{J}}_{\frac{n}{k}} & \quad \cdots & \quad \alpha{\mathbf{J}}_{\frac{n}{k}} \end{bmatrix} - \alpha{\mathbf{I}}_{n}\,, \end{align}

where ![]() ${\mathbf{J}}_{\frac{n}{k}}\in{\mathbb{R}}^{\frac{n}{k} \times \frac{n}{k}}$ denotes the all-one matrix and

${\mathbf{J}}_{\frac{n}{k}}\in{\mathbb{R}}^{\frac{n}{k} \times \frac{n}{k}}$ denotes the all-one matrix and

Lemma 2.3. The eigenvalues of ![]() ${\mathbb{E}}{\mathbf{A}}$ are given below:

${\mathbb{E}}{\mathbf{A}}$ are given below:

\begin{align*} \lambda _1({\mathbb{E}}{\mathbf{A}}) =&\, \frac{n}{k}(\alpha + (k-1)\beta ) - \alpha \,,\\[4pt]

\lambda _i({\mathbb{E}}{\mathbf{A}}) =&\, \frac{n}{k}(\alpha - \beta ) -\alpha \,, \quad 2\leq i \leq k\,,\\[4pt]

\lambda _i({\mathbb{E}}{\mathbf{A}}) =&\, - \alpha \,, \,\quad \quad \quad k+1\leq i \leq n\,. \end{align*}

\begin{align*} \lambda _1({\mathbb{E}}{\mathbf{A}}) =&\, \frac{n}{k}(\alpha + (k-1)\beta ) - \alpha \,,\\[4pt]

\lambda _i({\mathbb{E}}{\mathbf{A}}) =&\, \frac{n}{k}(\alpha - \beta ) -\alpha \,, \quad 2\leq i \leq k\,,\\[4pt]

\lambda _i({\mathbb{E}}{\mathbf{A}}) =&\, - \alpha \,, \,\quad \quad \quad k+1\leq i \leq n\,. \end{align*}

Lemma 2.3 can be verified via direct computation. Lemma 2.4 is used for approximately equi-partitions, meaning that eigenvalues of ![]() $\widetilde{{\mathbb{E}}{\mathbf{A}}}$ can be approximated by eigenvalues of

$\widetilde{{\mathbb{E}}{\mathbf{A}}}$ can be approximated by eigenvalues of ![]() ${\mathbb{E}}{\mathbf{A}}$ when

${\mathbb{E}}{\mathbf{A}}$ when ![]() $n$ is sufficiently large.

$n$ is sufficiently large.

Lemma 2.4. For any partition ![]() $(V_1, \dots, V_k)$ of

$(V_1, \dots, V_k)$ of ![]() $V$ where

$V$ where ![]() $n_i \,:\!=\, |V_i|$, consider the following matrix

$n_i \,:\!=\, |V_i|$, consider the following matrix

\begin{align*} \widetilde{{\mathbb{E}}{\mathbf{A}}} = \begin{bmatrix} \alpha{\mathbf{J}}_{n_1} & \quad \beta{\mathbf{J}}_{n_1 \times n_2} & \quad \cdots & \quad \beta{\mathbf{J}}_{n_1 \times n_{k-1}} & \quad \beta{\mathbf{J}}_{n_1 \times n_k} \\[4pt]

\beta{\mathbf{J}}_{n_2 \times n_1} & \quad \alpha{\mathbf{J}}_{n_2} & \quad \cdots & \quad \beta{\mathbf{J}}_{n_2 \times n_{k-1}} & \quad \beta{\mathbf{J}}_{n_2 \times n_k} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots & \quad \vdots \\[4pt]

\beta{\mathbf{J}}_{n_{k-1} \times n_1} & \quad \beta{\mathbf{J}}_{n_{k-1} \times n_2} & \quad \cdots & \quad \alpha{\mathbf{J}}_{n_{k-1}} & \quad \beta{\mathbf{J}}_{n_{k-1} \times n_k} \\[4pt]

\beta{\mathbf{J}}_{n_k \times n_1} & \quad \beta{\mathbf{J}}_{n_k \times n_2} & \quad \cdots & \quad \beta{\mathbf{J}}_{n_k \times n_{k-1}} & \quad \alpha{\mathbf{J}}_{n_k} \end{bmatrix} - \alpha{\mathbf{I}}_{n}\,. \end{align*}

\begin{align*} \widetilde{{\mathbb{E}}{\mathbf{A}}} = \begin{bmatrix} \alpha{\mathbf{J}}_{n_1} & \quad \beta{\mathbf{J}}_{n_1 \times n_2} & \quad \cdots & \quad \beta{\mathbf{J}}_{n_1 \times n_{k-1}} & \quad \beta{\mathbf{J}}_{n_1 \times n_k} \\[4pt]

\beta{\mathbf{J}}_{n_2 \times n_1} & \quad \alpha{\mathbf{J}}_{n_2} & \quad \cdots & \quad \beta{\mathbf{J}}_{n_2 \times n_{k-1}} & \quad \beta{\mathbf{J}}_{n_2 \times n_k} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots & \quad \vdots \\[4pt]

\beta{\mathbf{J}}_{n_{k-1} \times n_1} & \quad \beta{\mathbf{J}}_{n_{k-1} \times n_2} & \quad \cdots & \quad \alpha{\mathbf{J}}_{n_{k-1}} & \quad \beta{\mathbf{J}}_{n_{k-1} \times n_k} \\[4pt]

\beta{\mathbf{J}}_{n_k \times n_1} & \quad \beta{\mathbf{J}}_{n_k \times n_2} & \quad \cdots & \quad \beta{\mathbf{J}}_{n_k \times n_{k-1}} & \quad \alpha{\mathbf{J}}_{n_k} \end{bmatrix} - \alpha{\mathbf{I}}_{n}\,. \end{align*}

Assume that ![]() $n_i = \frac{n}{k} + O(\sqrt{n} \log n)$ for all

$n_i = \frac{n}{k} + O(\sqrt{n} \log n)$ for all ![]() $i\in [k]$. Then, for all

$i\in [k]$. Then, for all ![]() $1\leq i \leq k$,

$1\leq i \leq k$,

Note that both ![]() $(\,\widetilde{{\mathbb{E}}{\mathbf{A}}} + \alpha{\mathbf{I}}_{n})$ and

$(\,\widetilde{{\mathbb{E}}{\mathbf{A}}} + \alpha{\mathbf{I}}_{n})$ and ![]() $(\,{\mathbb{E}}{\mathbf{A}} + \alpha{\mathbf{I}}_{n})$ are rank

$(\,{\mathbb{E}}{\mathbf{A}} + \alpha{\mathbf{I}}_{n})$ are rank ![]() $k$ matrices, then

$k$ matrices, then ![]() $\lambda _i(\widetilde{{\mathbb{E}}{\mathbf{A}}}) = \lambda _i({\mathbb{E}}{\mathbf{A}}) = -\alpha$ for all

$\lambda _i(\widetilde{{\mathbb{E}}{\mathbf{A}}}) = \lambda _i({\mathbb{E}}{\mathbf{A}}) = -\alpha$ for all ![]() $(k+1) \leq i \leq n$. At the same time,

$(k+1) \leq i \leq n$. At the same time, ![]() $\mathrm{SNR}$ in (2) is related to the following quantity

$\mathrm{SNR}$ in (2) is related to the following quantity

\begin{equation*} \begin{aligned} &\, \frac{[\lambda _2({\mathbb{E}}{\mathbf{A}})]^{2}}{\lambda _1({\mathbb{E}}{\mathbf{A}})} = \frac{[(n-k)\alpha - n \beta ]^2}{k[(n - k)\alpha + n(k-1)\beta ]} = \frac{ \left [\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} \right ) \right ]^2 }{\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} + b_m \right )}(1+o(1)). \end{aligned} \end{equation*}

\begin{equation*} \begin{aligned} &\, \frac{[\lambda _2({\mathbb{E}}{\mathbf{A}})]^{2}}{\lambda _1({\mathbb{E}}{\mathbf{A}})} = \frac{[(n-k)\alpha - n \beta ]^2}{k[(n - k)\alpha + n(k-1)\beta ]} = \frac{ \left [\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} \right ) \right ]^2 }{\sum _{m\in{\mathcal M}} (m-1)\left (\frac{a_m - b_m}{k^{m-1}} + b_m \right )}(1+o(1)). \end{aligned} \end{equation*}

When ![]() ${\mathcal M} = \{2\}$ and

${\mathcal M} = \{2\}$ and ![]() $k$ is fixed,

$k$ is fixed, ![]() $\mathrm{SNR}$ in (2) is equal to

$\mathrm{SNR}$ in (2) is equal to ![]() $\frac{(a - b)^2}{k[a + (k-1)b]}$, which corresponds to the

$\frac{(a - b)^2}{k[a + (k-1)b]}$, which corresponds to the ![]() $\mathrm{SNR}$ for the undirected graph in [Reference Chin, Rao and Van19], see also [Reference Abbe1, Section 6].

$\mathrm{SNR}$ for the undirected graph in [Reference Chin, Rao and Van19], see also [Reference Abbe1, Section 6].

3. Spectral norm concentration

The correctness of Algorithms 2 and 1 relies on the concentration of the adjacency matrix of ![]() $H$. The following two concentration results for general random hypergraphs are included, which are independent of HSBM model. The proofs are deferred to Section A.

$H$. The following two concentration results for general random hypergraphs are included, which are independent of HSBM model. The proofs are deferred to Section A.

Theorem 3.1. Let ![]() $H=\bigcup _{m =2}^{M} H_m$, where

$H=\bigcup _{m =2}^{M} H_m$, where ![]() $H_m = ([n], E_m)$ is an Erdős-Rényi inhomogeneous hypergraph of order

$H_m = ([n], E_m)$ is an Erdős-Rényi inhomogeneous hypergraph of order ![]() $m$ for each

$m$ for each ![]() $m\in \{2, \cdots, M\}$. Let

$m\in \{2, \cdots, M\}$. Let ![]() $\boldsymbol{\mathcal{T}}^{\,(m)}$ denote the probability tensor such that

$\boldsymbol{\mathcal{T}}^{\,(m)}$ denote the probability tensor such that ![]() $\boldsymbol{\mathcal{T}}^{(m)} ={\mathbb{E}} \boldsymbol{\mathcal{A}}^{(m)}$ and

$\boldsymbol{\mathcal{T}}^{(m)} ={\mathbb{E}} \boldsymbol{\mathcal{A}}^{(m)}$ and ![]() $\boldsymbol{\mathcal{T}}^{(m)}_{[i_1,\dots, i_m]} = d_{[i_1,\dots,i_m]}/ \binom{n}{m-1}$, denoting

$\boldsymbol{\mathcal{T}}^{(m)}_{[i_1,\dots, i_m]} = d_{[i_1,\dots,i_m]}/ \binom{n}{m-1}$, denoting ![]() $d_m=\max d_{[i_1,\dots, i_m]}$. Suppose for some constant

$d_m=\max d_{[i_1,\dots, i_m]}$. Suppose for some constant ![]() $c\gt 0$,

$c\gt 0$,

\begin{align} d\,:\!=\,\sum _{m =2}^{M} (m-1)\cdot d_m \geq c\log n\,. \end{align}

\begin{align} d\,:\!=\,\sum _{m =2}^{M} (m-1)\cdot d_m \geq c\log n\,. \end{align}

Then for any ![]() $K\gt 0$, there exists a constant

$K\gt 0$, there exists a constant ![]() $ C= 512M(M-1)(K+6)\left [ 2 + (M-1)(1+K)/c \right ]$ such that with probability at least

$ C= 512M(M-1)(K+6)\left [ 2 + (M-1)(1+K)/c \right ]$ such that with probability at least ![]() $1-2n^{-K}-2e^{-n}$, the adjacency matrix

$1-2n^{-K}-2e^{-n}$, the adjacency matrix ![]() ${\mathbf{A}}$ of

${\mathbf{A}}$ of ![]() $H$ satisfies

$H$ satisfies

The inequality (10) can be reduced to the result for graph case obtained in [Reference Feige and Ofek28, Reference Lei and Rinaldo48] by taking ![]() ${\mathcal M} = \{2\}$. The result for a uniform hypergraph is obtained in [Reference Lee, Kim and Chung46]. Note that

${\mathcal M} = \{2\}$. The result for a uniform hypergraph is obtained in [Reference Lee, Kim and Chung46]. Note that ![]() $d$ is a fixed constant in our community detection problem, thus the Assumption 3.1 does not hold and the inequality (9) cannot be directly applied. However, we can still prove a concentration bound for a regularised version of

$d$ is a fixed constant in our community detection problem, thus the Assumption 3.1 does not hold and the inequality (9) cannot be directly applied. However, we can still prove a concentration bound for a regularised version of ![]() ${\mathbf{A}}$, following the same strategy of the proof for Theorem 3.1.

${\mathbf{A}}$, following the same strategy of the proof for Theorem 3.1.

Definition 3.2 (Regularized matrix). Given any ![]() $n\times n$ matrix

$n\times n$ matrix ![]() ${\mathbf{A}}$ and an index set

${\mathbf{A}}$ and an index set ![]() $\mathcal{I}$, let

$\mathcal{I}$, let ![]() ${\mathbf{A}}_{\mathcal{I}}$ be the

${\mathbf{A}}_{\mathcal{I}}$ be the ![]() $n\times n$ matrix obtained from

$n\times n$ matrix obtained from ![]() ${\mathbf{A}}$ by zeroing out the rows and columns not indexed by

${\mathbf{A}}$ by zeroing out the rows and columns not indexed by ![]() $\mathcal{I}$. Namely,

$\mathcal{I}$. Namely,

Since every hyperedge of size ![]() $m$ containing

$m$ containing ![]() $i$ is counted

$i$ is counted ![]() $(m-1)$ times in the

$(m-1)$ times in the ![]() $i$-th row sum of

$i$-th row sum of ![]() ${\mathbf{A}}$, the

${\mathbf{A}}$, the ![]() $i$-th row sum of

$i$-th row sum of ![]() ${\mathbf{A}}$ is given by

${\mathbf{A}}$ is given by

\begin{align*} \mathrm{row}(i) \,:\!=\,\sum _{j}{\mathbf{A}}_{ij} \,:\!=\, \sum _{j} \unicode{x1D7D9}_{\{ i \neq j\} } \sum _{m\in{\mathcal M}}\,\,\sum _{\substack{e\in E_m\\

\{i,j\}\subset e} }\boldsymbol{\mathcal{A}}^{(m)}_{e} = \sum _{m\in{\mathcal M}}\,(m-1) \sum _{e\in E_m:\, i\in e} \boldsymbol{\mathcal{A}}_e^{(m)}. \end{align*}

\begin{align*} \mathrm{row}(i) \,:\!=\,\sum _{j}{\mathbf{A}}_{ij} \,:\!=\, \sum _{j} \unicode{x1D7D9}_{\{ i \neq j\} } \sum _{m\in{\mathcal M}}\,\,\sum _{\substack{e\in E_m\\

\{i,j\}\subset e} }\boldsymbol{\mathcal{A}}^{(m)}_{e} = \sum _{m\in{\mathcal M}}\,(m-1) \sum _{e\in E_m:\, i\in e} \boldsymbol{\mathcal{A}}_e^{(m)}. \end{align*}

Theorem 3.3 is the concentration result for the regularised ![]() ${\mathbf{A}}_{\mathcal{I}}$, by zeroing out rows and columns corresponding to vertices with high row sums.

${\mathbf{A}}_{\mathcal{I}}$, by zeroing out rows and columns corresponding to vertices with high row sums.

Theorem 3.3. Following all the notations in Theorem 3.1, for any constant ![]() $\tau \gt 1$, define

$\tau \gt 1$, define

Let ![]() ${\mathbf{A}}_{\mathcal{I}}$ be the regularised version of

${\mathbf{A}}_{\mathcal{I}}$ be the regularised version of ![]() ${\mathbf{A}}$, as in Definition 3.2. Then for any

${\mathbf{A}}$, as in Definition 3.2. Then for any ![]() $K\gt 0$, there exists a constant

$K\gt 0$, there exists a constant ![]() $C_{\tau }=2( (5M+1)(M-1)+\alpha _0\sqrt{\tau })$ with

$C_{\tau }=2( (5M+1)(M-1)+\alpha _0\sqrt{\tau })$ with ![]() $\alpha _0=16+\frac{32}{\tau }(1+e^2)+128M(M-1)(K+4)\left (1+\frac{1}{e^2}\right )$, such that

$\alpha _0=16+\frac{32}{\tau }(1+e^2)+128M(M-1)(K+4)\left (1+\frac{1}{e^2}\right )$, such that ![]() $ \| ({\mathbf{A}}-{\mathbb{E}}{\mathbf{A}})_{\mathcal{I}}\|\leq C_{\tau }\sqrt{d}$ with probability at least

$ \| ({\mathbf{A}}-{\mathbb{E}}{\mathbf{A}})_{\mathcal{I}}\|\leq C_{\tau }\sqrt{d}$ with probability at least ![]() $1-2(e/2)^{-n} -n^{-K}$.

$1-2(e/2)^{-n} -n^{-K}$.

Algorithm 3. Pre-processing

Algorithm 4. Spectral Partition

4. Algorithms blocks

In this section, we are going to present the algorithmic blocks constructing our main partition method (Algorithm 2): pre-processing (Algorithm 3), initial result by spectral method (Algorithm 4), correction of blemishes via majority rule (Algorithm 5), and merging (Algorithm 6).

Algorithm 5. Correction

Algorithm 6. Merging

4.1. Three or more blocks ( $k\geq 3$)

$k\geq 3$)

The proof of Theorem 1.7 is structured as follows.

Lemma 4.1. Under the assumptions of Theorem 1.7, Algorithm 4 outputs a ![]() $\nu$-correct partition

$\nu$-correct partition ![]() $U_1^{\prime }, \cdots, U_k^{\prime }$ of

$U_1^{\prime }, \cdots, U_k^{\prime }$ of ![]() $Z = (Z\cap V_{1}) \cup \cdots \cup (Z\cap V_{k})$ with probability at least

$Z = (Z\cap V_{1}) \cup \cdots \cup (Z\cap V_{k})$ with probability at least ![]() $1 - O(n^{-2})$.

$1 - O(n^{-2})$.

Lines ![]() $4$ and

$4$ and ![]() $6$ contribute most complexity in Algorithm 4, requiring

$6$ contribute most complexity in Algorithm 4, requiring ![]() $O(n^{3})$ and

$O(n^{3})$ and ![]() $O(n^{2}\log ^2(n))$ each (technically, one should be able to get away with

$O(n^{2}\log ^2(n))$ each (technically, one should be able to get away with ![]() $O(n^2 \log (1/\varepsilon ))$ in line 4, for some desired accuracy

$O(n^2 \log (1/\varepsilon ))$ in line 4, for some desired accuracy ![]() $\varepsilon$ to get the singular vectors). We will conservatively estimate the time complexity of Algorithm 4 as

$\varepsilon$ to get the singular vectors). We will conservatively estimate the time complexity of Algorithm 4 as ![]() $O(n^3)$.

$O(n^3)$.

Lemma 4.2. Under the assumptions of Theorem 1.7, for any ![]() $\nu$-correct partition

$\nu$-correct partition ![]() $U_1^{\prime }, \cdots, U_k^{\prime }$ of

$U_1^{\prime }, \cdots, U_k^{\prime }$ of ![]() $Z = (Z\cap V_{1}) \cup \cdots \cup (Z\cap V_{k})$ and the red hypergraph over

$Z = (Z\cap V_{1}) \cup \cdots \cup (Z\cap V_{k})$ and the red hypergraph over ![]() $Z$, Algorithm 5 computes a

$Z$, Algorithm 5 computes a ![]() $\gamma _{\mathrm{C}}$-correct partition

$\gamma _{\mathrm{C}}$-correct partition ![]() $\widehat{U}_1, \cdots, \widehat{U}_k$ with probability

$\widehat{U}_1, \cdots, \widehat{U}_k$ with probability ![]() $1 -O(e^{-n\rho })$, while

$1 -O(e^{-n\rho })$, while ![]() $\gamma _{\mathrm{C}} = \max \{\nu,\, 1 - k\rho \}$ with

$\gamma _{\mathrm{C}} = \max \{\nu,\, 1 - k\rho \}$ with ![]() $ \rho \,:\!=\, k\exp\!\left ({-}C_{{\mathcal M}}(k) \cdot \mathrm{SNR}_{{\mathcal M}}(k) \right )$ where

$ \rho \,:\!=\, k\exp\!\left ({-}C_{{\mathcal M}}(k) \cdot \mathrm{SNR}_{{\mathcal M}}(k) \right )$ where ![]() $\mathcal M$ is obtained from Algorithm 3, and

$\mathcal M$ is obtained from Algorithm 3, and ![]() $\mathrm{SNR}_{{\mathcal M}}(k)$ and

$\mathrm{SNR}_{{\mathcal M}}(k)$ and ![]() $C_{{\mathcal M}}(k)$ are defined in (2), (3).

$C_{{\mathcal M}}(k)$ are defined in (2), (3).

Lemma 4.3. Given any ![]() $\nu$-correct partition

$\nu$-correct partition ![]() $\widehat{U}_1, \cdots, \widehat{U}_k$ of

$\widehat{U}_1, \cdots, \widehat{U}_k$ of ![]() $Z = (Z\cap V_{1}) \cup \cdots \cup (Z\cap V_{k})$ and the blue hypergraph between

$Z = (Z\cap V_{1}) \cup \cdots \cup (Z\cap V_{k})$ and the blue hypergraph between ![]() $Y$ and

$Y$ and ![]() $Z$, with probability

$Z$, with probability ![]() $1 -O(e^{-n\rho })$, Algorithm 6 outputs a

$1 -O(e^{-n\rho })$, Algorithm 6 outputs a ![]() $\gamma$-correct partition

$\gamma$-correct partition ![]() $\widehat{V}_1, \cdots, \widehat{V}_{k}$ of

$\widehat{V}_1, \cdots, \widehat{V}_{k}$ of ![]() $V_1 \cup V_2 \cup \cdots \cup V_k$, while

$V_1 \cup V_2 \cup \cdots \cup V_k$, while ![]() $\gamma =\max \{\nu,\, 1 - k\rho \}$.

$\gamma =\max \{\nu,\, 1 - k\rho \}$.

The time complexities of Algorithms 5 and 6 are ![]() $O(n)$, since each vertex is adjacent to only constant many hyperedges.

$O(n)$, since each vertex is adjacent to only constant many hyperedges.

Algorithm 7. Spectral Partition

Algorithm 8. Correction

4.2. The binary case ( $k = 2$)

$k = 2$)

The spectral partition step is given in Algorithm 7, and the correction step is given in Algorithm 8.

Lemma 4.4. Under the conditions of Theorem 1.6, the Algorithm 7 outputs a ![]() $\nu$-correct partition

$\nu$-correct partition ![]() $V_1^{\prime }, V_2^{\prime }$ of

$V_1^{\prime }, V_2^{\prime }$ of ![]() $V = V_1 \cup V_2$ with probability at least

$V = V_1 \cup V_2$ with probability at least ![]() $1 - O(n^{-2})$.

$1 - O(n^{-2})$.

Lemma 4.5. Given any ![]() $\nu$-correct partition

$\nu$-correct partition ![]() $V_1^{\prime }, V_2^{\prime }$ of

$V_1^{\prime }, V_2^{\prime }$ of ![]() $V = V_1 \cup V_2$, with probability at least

$V = V_1 \cup V_2$, with probability at least ![]() $1 -O(e^{-n\rho })$, the Algorithm 8 computes a

$1 -O(e^{-n\rho })$, the Algorithm 8 computes a ![]() $\gamma$-correct partition

$\gamma$-correct partition ![]() $\widehat{V}_1, \widehat{V}_2$ with

$\widehat{V}_1, \widehat{V}_2$ with ![]() $\gamma = \{\nu,\, 1 - 2\rho \}$ and

$\gamma = \{\nu,\, 1 - 2\rho \}$ and ![]() $\rho = 2\exp ({-}C_{{\mathcal M}}(2) \cdot \mathrm{SNR}_{{\mathcal M}}(2))$, where

$\rho = 2\exp ({-}C_{{\mathcal M}}(2) \cdot \mathrm{SNR}_{{\mathcal M}}(2))$, where ![]() $\mathrm{SNR}_{{\mathcal M}}(2)$ and

$\mathrm{SNR}_{{\mathcal M}}(2)$ and ![]() $C_{{\mathcal M}}(2)$ are defined in (2), (3).

$C_{{\mathcal M}}(2)$ are defined in (2), (3).

5. Algorithm’s correctness

We are going to present the correctness of Algorithm 2 in this section. The correctness of Algorithm 1 is deferred to Section C. We first introduce some definitions.

Vertex set splitting and adjacency matrix

In Algorithm 2, we first randomly partition the vertex set ![]() $V$ into two disjoint subsets

$V$ into two disjoint subsets ![]() $Z$ and

$Z$ and ![]() $Y$ by assigning

$Y$ by assigning ![]() $+1$ and

$+1$ and ![]() $-1$ to each vertex independently with equal probability. Let

$-1$ to each vertex independently with equal probability. Let ![]() ${\mathbf{B}} \in{\mathbb{R}}^{|Z|\times |Y|}$ denote the submatrix of

${\mathbf{B}} \in{\mathbb{R}}^{|Z|\times |Y|}$ denote the submatrix of ![]() ${\mathbf{A}}$, while

${\mathbf{A}}$, while ![]() ${\mathbf{A}}$ was defined in (6), where rows and columns of

${\mathbf{A}}$ was defined in (6), where rows and columns of ![]() ${\mathbf{B}}$ correspond to vertices in

${\mathbf{B}}$ correspond to vertices in ![]() $Z$ and

$Z$ and ![]() $Y$ respectively. Let

$Y$ respectively. Let ![]() $n_i$ denote the number of vertices in

$n_i$ denote the number of vertices in ![]() $Z\cap V_i$, where

$Z\cap V_i$, where ![]() $V_i$ denotes the true partition with

$V_i$ denotes the true partition with ![]() $|V_i| = \frac{n}{k}$ for all

$|V_i| = \frac{n}{k}$ for all ![]() $i\in [k]$, then

$i\in [k]$, then ![]() $n_i$ can be written as a sum of independent Bernoulli random variables, i.e.,

$n_i$ can be written as a sum of independent Bernoulli random variables, i.e.,

and ![]() $|Y\cap V_i| = |V_i| - |Z\cap V_i| = \frac{n}{k} - n_i$ for each

$|Y\cap V_i| = |V_i| - |Z\cap V_i| = \frac{n}{k} - n_i$ for each ![]() $i \in [k]$.

$i \in [k]$.

Definition 5.1. The splitting ![]() $V = Z \cup Y$ is perfect if

$V = Z \cup Y$ is perfect if ![]() $|Z\cap V_i| = |Y\cap V_i| = n/(2k)$ for all

$|Z\cap V_i| = |Y\cap V_i| = n/(2k)$ for all ![]() $i\in [k]$. And the splitting

$i\in [k]$. And the splitting ![]() $Y = Y_1 \cup Y_2$ is perfect if

$Y = Y_1 \cup Y_2$ is perfect if ![]() $|Y_1\cap V_i| = |Y_2\cap V_i| = n/(4k)$ for all

$|Y_1\cap V_i| = |Y_2\cap V_i| = n/(4k)$ for all ![]() $i\in [k]$.

$i\in [k]$.

However, the splitting is imperfect in most cases since the size of ![]() $Z$ and

$Z$ and ![]() $Y$ would not be exactly the same under the independence assumption. The random matrix

$Y$ would not be exactly the same under the independence assumption. The random matrix ![]() ${\mathbf{B}}$ is parameterised by

${\mathbf{B}}$ is parameterised by ![]() $\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$ and

$\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$ and ![]() $\{n_i\}_{i=1}^{k}$. If we take expectation over

$\{n_i\}_{i=1}^{k}$. If we take expectation over ![]() $\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$ given the block size information

$\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$ given the block size information ![]() $\{n_i\}_{i=1}^{k}$, then it gives rise to the expectation of the imperfect splitting, denoted by

$\{n_i\}_{i=1}^{k}$, then it gives rise to the expectation of the imperfect splitting, denoted by ![]() $\widetilde{{\mathbf{B}}}$,

$\widetilde{{\mathbf{B}}}$,

\begin{equation*} \widetilde {{\mathbf{B}}} \,:\!=\, \begin {bmatrix} \alpha {\mathbf{J}}_{n_1 \times ( \frac {n}{k} - n_1)} & \quad \beta {\mathbf{J}}_{n_1 \times (\frac {n}{k} - n_2)} & \quad \dots & \quad \beta {\mathbf{J}}_{n_1 \times (\frac {n}{k}- n_k)} \\[4pt]

\beta {\mathbf{J}}_{n_2 \times (\frac {n}{k}- n_1)} & \quad \alpha {\mathbf{J}}_{n_2 \times (\frac {n}{k}- n_2)} & \quad\dots & \quad \beta {\mathbf{J}}_{n_2 \times (\frac {n}{k}- n_k)} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\beta {\mathbf{J}}_{n_k \times (\frac {n}{k}- n_1)} & \quad \beta {\mathbf{J}}_{n_k \times (\frac {n}{k}- n_2)} & \quad \dots & \quad \alpha {\mathbf{J}}_{n_k \times (\frac {n}{k}- n_k)} \end {bmatrix}\,, \end{equation*}

\begin{equation*} \widetilde {{\mathbf{B}}} \,:\!=\, \begin {bmatrix} \alpha {\mathbf{J}}_{n_1 \times ( \frac {n}{k} - n_1)} & \quad \beta {\mathbf{J}}_{n_1 \times (\frac {n}{k} - n_2)} & \quad \dots & \quad \beta {\mathbf{J}}_{n_1 \times (\frac {n}{k}- n_k)} \\[4pt]

\beta {\mathbf{J}}_{n_2 \times (\frac {n}{k}- n_1)} & \quad \alpha {\mathbf{J}}_{n_2 \times (\frac {n}{k}- n_2)} & \quad\dots & \quad \beta {\mathbf{J}}_{n_2 \times (\frac {n}{k}- n_k)} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\beta {\mathbf{J}}_{n_k \times (\frac {n}{k}- n_1)} & \quad \beta {\mathbf{J}}_{n_k \times (\frac {n}{k}- n_2)} & \quad \dots & \quad \alpha {\mathbf{J}}_{n_k \times (\frac {n}{k}- n_k)} \end {bmatrix}\,, \end{equation*}

where ![]() $\alpha$,

$\alpha$, ![]() $\beta$ are defined in (8). In the perfect splitting case, the dimension of each block is

$\beta$ are defined in (8). In the perfect splitting case, the dimension of each block is ![]() $n/(2k)\times n/(2k)$ since

$n/(2k)\times n/(2k)$ since ![]() ${\mathbb{E}} n_i = n/(2k)$ for all

${\mathbb{E}} n_i = n/(2k)$ for all ![]() $i \in [k]$, and the expectation matrix

$i \in [k]$, and the expectation matrix ![]() $\overline{{\mathbf{B}}}$ can be written as

$\overline{{\mathbf{B}}}$ can be written as

\begin{equation*} \overline {{\mathbf{B}}} \,:\!=\, \begin {bmatrix} \alpha {\mathbf{J}}_{\frac {n}{2k}} & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} & \quad \dots & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} \\[4pt]

\beta {\mathbf{J}}_{\frac {n}{2k}} & \quad \alpha {\mathbf{J}}_{\frac {n}{2k}} & \quad \dots & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\beta {\mathbf{J}}_{\frac {n}{2k}} & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} & \quad\dots & \quad \alpha {\mathbf{J}}_{\frac {n}{2k}} \end {bmatrix}\,. \end{equation*}

\begin{equation*} \overline {{\mathbf{B}}} \,:\!=\, \begin {bmatrix} \alpha {\mathbf{J}}_{\frac {n}{2k}} & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} & \quad \dots & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} \\[4pt]

\beta {\mathbf{J}}_{\frac {n}{2k}} & \quad \alpha {\mathbf{J}}_{\frac {n}{2k}} & \quad \dots & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\beta {\mathbf{J}}_{\frac {n}{2k}} & \quad \beta {\mathbf{J}}_{\frac {n}{2k}} & \quad\dots & \quad \alpha {\mathbf{J}}_{\frac {n}{2k}} \end {bmatrix}\,. \end{equation*}

In Algorithm 4, ![]() $Y_1$ is a random subset of

$Y_1$ is a random subset of ![]() $Y$ obtained by selecting each element with probability

$Y$ obtained by selecting each element with probability ![]() $1/2$ independently, and

$1/2$ independently, and ![]() $Y_2 = Y\setminus Y_1$. Let

$Y_2 = Y\setminus Y_1$. Let ![]() $n^{\prime }_{i}$ denote the number of vertices in

$n^{\prime }_{i}$ denote the number of vertices in ![]() $Y_1\cap V_i$, then

$Y_1\cap V_i$, then ![]() $n^{\prime }_{i}$ can be written as a sum of independent Bernoulli random variables,

$n^{\prime }_{i}$ can be written as a sum of independent Bernoulli random variables,

and ![]() $|Y_2\cap V_i| = |V_i| - |Z\cap V_i| - |Y_1\cap V_i| = n/k - n_i - n^{\prime }_{i}$ for all

$|Y_2\cap V_i| = |V_i| - |Z\cap V_i| - |Y_1\cap V_i| = n/k - n_i - n^{\prime }_{i}$ for all ![]() $i \in [k]$.

$i \in [k]$.

Induced sub-hypergraph

Definition 5.2 (Induced Sub-hypergraph). Let ![]() $H = (V, E)$ be a non-uniform random hypergraph and

$H = (V, E)$ be a non-uniform random hypergraph and ![]() $S\subset V$ be any subset of the vertices of

$S\subset V$ be any subset of the vertices of ![]() $H$. Then the induced sub-hypergraph

$H$. Then the induced sub-hypergraph ![]() $H[S]$ is the hypergraph whose vertex set is

$H[S]$ is the hypergraph whose vertex set is ![]() $S$ and whose hyperedge set

$S$ and whose hyperedge set ![]() $E[S]$ consists of all of the edges in

$E[S]$ consists of all of the edges in ![]() $E$ that have all endpoints located in

$E$ that have all endpoints located in ![]() $S$.

$S$.

Let ![]() $H[Y_1 \cup Z]$(resp.

$H[Y_1 \cup Z]$(resp. ![]() $H[Y_2 \cup Z]$) denote the induced sub-hypergraph on vertex set

$H[Y_2 \cup Z]$) denote the induced sub-hypergraph on vertex set ![]() $Y_1\cup Z$ (resp.

$Y_1\cup Z$ (resp. ![]() $Y_2 \cup Z$), and

$Y_2 \cup Z$), and ![]() ${\mathbf{B}}_1 \in{\mathbb{R}}^{|Z|\times |Y_1|}$ (resp.

${\mathbf{B}}_1 \in{\mathbb{R}}^{|Z|\times |Y_1|}$ (resp. ![]() ${\mathbf{B}}_2 \in{\mathbb{R}}^{|Z|\times |Y_2|}$) denote the adjacency matrices corresponding to the sub-hypergraphs, where rows and columns of

${\mathbf{B}}_2 \in{\mathbb{R}}^{|Z|\times |Y_2|}$) denote the adjacency matrices corresponding to the sub-hypergraphs, where rows and columns of ![]() ${\mathbf{B}}_1$ (resp.

${\mathbf{B}}_1$ (resp. ![]() ${\mathbf{B}}_2$) are corresponding to elements in

${\mathbf{B}}_2$) are corresponding to elements in ![]() $Z$ and

$Z$ and ![]() $Y_1$ (resp.,

$Y_1$ (resp., ![]() $Z$ and

$Z$ and ![]() $Y_2$). Therefore,

$Y_2$). Therefore, ![]() ${\mathbf{B}}_1$ and

${\mathbf{B}}_1$ and ![]() ${\mathbf{B}}_2$ are parameterised by

${\mathbf{B}}_2$ are parameterised by ![]() $\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$,

$\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$, ![]() $\{n_i\}_{i=1}^{k}$ and

$\{n_i\}_{i=1}^{k}$ and ![]() $\{n^{\prime }_{i}\}_{i=1}^{k}$, and the entries in

$\{n^{\prime }_{i}\}_{i=1}^{k}$, and the entries in ![]() ${\mathbf{B}}_1$ are independent of the entries in

${\mathbf{B}}_1$ are independent of the entries in ![]() ${\mathbf{B}}_2$, due to the independence of hyperedges. If we take expectation over

${\mathbf{B}}_2$, due to the independence of hyperedges. If we take expectation over ![]() $\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$ conditioning on

$\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m \in{\mathcal M}}$ conditioning on ![]() $\{n_i\}_{i=1}^{k}$ and

$\{n_i\}_{i=1}^{k}$ and ![]() $\{n^{\prime }_{i}\}_{i=1}^{k}$, then it gives rise to the expectation of the imperfect splitting, denoted by

$\{n^{\prime }_{i}\}_{i=1}^{k}$, then it gives rise to the expectation of the imperfect splitting, denoted by ![]() $\widetilde{{\mathbf{B}}}_1$,

$\widetilde{{\mathbf{B}}}_1$,

\begin{equation} \widetilde{{\mathbf{B}}}_1 \,:\!=\, \begin{bmatrix} \widetilde{\alpha }_{11}{\mathbf{J}}_{n_1 \times n^{\prime }_{1}} & \quad \dots & \quad \widetilde{\beta }_{1k}{\mathbf{J}}_{n_1 \times n^{\prime }_{k}} \\[4pt]

\vdots & \quad \ddots & \quad \vdots \\[4pt]

\widetilde{\beta }_{k1}{\mathbf{J}}_{n_k \times n^{\prime }_{1}} & \quad \dots & \quad \widetilde{\alpha }_{kk}{\mathbf{J}}_{n_k \times n^{\prime }_{k}} \end{bmatrix}\,, \end{equation}

\begin{equation} \widetilde{{\mathbf{B}}}_1 \,:\!=\, \begin{bmatrix} \widetilde{\alpha }_{11}{\mathbf{J}}_{n_1 \times n^{\prime }_{1}} & \quad \dots & \quad \widetilde{\beta }_{1k}{\mathbf{J}}_{n_1 \times n^{\prime }_{k}} \\[4pt]

\vdots & \quad \ddots & \quad \vdots \\[4pt]

\widetilde{\beta }_{k1}{\mathbf{J}}_{n_k \times n^{\prime }_{1}} & \quad \dots & \quad \widetilde{\alpha }_{kk}{\mathbf{J}}_{n_k \times n^{\prime }_{k}} \end{bmatrix}\,, \end{equation}

where

\begin{align} \widetilde{\alpha }_{ii} \,:\!=\,&\, \sum _{m \in{\mathcal{M}}}\left \{ \binom{n_i + n_i^{\prime } - 2}{m-2} \frac{a_m - b_m}{\binom{n}{m-1}} + \binom{\sum _{l=1}^{k}(n_l + n_l^{\prime }) - 2}{m-2} \frac{b_m}{\binom{n}{m-1}} \right \}\,, \end{align}

\begin{align} \widetilde{\alpha }_{ii} \,:\!=\,&\, \sum _{m \in{\mathcal{M}}}\left \{ \binom{n_i + n_i^{\prime } - 2}{m-2} \frac{a_m - b_m}{\binom{n}{m-1}} + \binom{\sum _{l=1}^{k}(n_l + n_l^{\prime }) - 2}{m-2} \frac{b_m}{\binom{n}{m-1}} \right \}\,, \end{align}

\begin{align} \widetilde{\beta }_{ij} \,:\!=\,&\, \sum _{m \in{\mathcal{M}}} \binom{\sum _{l=1}^{k}(n_l + n_l^{\prime }) - 2}{m-2}\frac{b_m}{\binom{n}{m-1}}\,,\quad i\neq j, \, i, j\in [k]\,. \end{align}

\begin{align} \widetilde{\beta }_{ij} \,:\!=\,&\, \sum _{m \in{\mathcal{M}}} \binom{\sum _{l=1}^{k}(n_l + n_l^{\prime }) - 2}{m-2}\frac{b_m}{\binom{n}{m-1}}\,,\quad i\neq j, \, i, j\in [k]\,. \end{align}

The expectation of the perfect splitting, denoted by ![]() $\overline{{\mathbf{B}}}_1$, can be written as

$\overline{{\mathbf{B}}}_1$, can be written as

\begin{equation} \overline{{\mathbf{B}}}_1 \,:\!=\, \begin{bmatrix} \overline{\alpha }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \dots & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} \\[4pt]

\overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \overline{\alpha }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \dots & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad\dots & \quad \overline{\alpha }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} \end{bmatrix}\,, \end{equation}

\begin{equation} \overline{{\mathbf{B}}}_1 \,:\!=\, \begin{bmatrix} \overline{\alpha }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \dots & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} \\[4pt]

\overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \overline{\alpha }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \dots & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} \\[4pt]

\vdots & \quad \vdots & \quad \ddots & \quad \vdots \\[4pt]

\overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad \overline{\beta }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} & \quad\dots & \quad \overline{\alpha }{\mathbf{J}}_{\frac{n}{2k} \times \frac{n}{4k}} \end{bmatrix}\,, \end{equation}

where

\begin{align} \overline{\alpha } \,:\!=\, \sum _{m \in{\mathcal M}}\left \{ \binom{ \frac{3n}{4k} - 2}{m-2} \frac{a_m - b_m}{\binom{n}{m-1}} + \binom{\frac{3n}{4} - 2}{m-2}\frac{b_m}{\binom{n}{m-1}} \right \}\,,\quad \overline{\beta } \,:\!=\, \sum _{m \in{\mathcal M}} \binom{\frac{3n}{4} - 2}{m-2}\frac{b_m}{\binom{n}{m-1}}\,. \end{align}

\begin{align} \overline{\alpha } \,:\!=\, \sum _{m \in{\mathcal M}}\left \{ \binom{ \frac{3n}{4k} - 2}{m-2} \frac{a_m - b_m}{\binom{n}{m-1}} + \binom{\frac{3n}{4} - 2}{m-2}\frac{b_m}{\binom{n}{m-1}} \right \}\,,\quad \overline{\beta } \,:\!=\, \sum _{m \in{\mathcal M}} \binom{\frac{3n}{4} - 2}{m-2}\frac{b_m}{\binom{n}{m-1}}\,. \end{align}

The matrices ![]() $\widetilde{{\mathbf{B}}}_2, \overline{{\mathbf{B}}}_2$ can be defined similarly, since dimensions of

$\widetilde{{\mathbf{B}}}_2, \overline{{\mathbf{B}}}_2$ can be defined similarly, since dimensions of ![]() $|Y_2\cap V_i|$ are also determined by

$|Y_2\cap V_i|$ are also determined by ![]() $n_i$ and

$n_i$ and ![]() $n^{\prime }_{i}$. Obviously,

$n^{\prime }_{i}$. Obviously, ![]() $\overline{{\mathbf{B}}}_2 = \overline{{\mathbf{B}}}_1$ since

$\overline{{\mathbf{B}}}_2 = \overline{{\mathbf{B}}}_1$ since ![]() ${\mathbb{E}} n^{\prime }_{i} ={\mathbb{E}} (n/k- n_{i} - n_i^{\prime }) = n/(4k)$ for all

${\mathbb{E}} n^{\prime }_{i} ={\mathbb{E}} (n/k- n_{i} - n_i^{\prime }) = n/(4k)$ for all ![]() $i\in [k]$.

$i\in [k]$.

Fixing Dimensions

The dimensions of ![]() $\widetilde{{\mathbf{B}}}_1$ and

$\widetilde{{\mathbf{B}}}_1$ and ![]() $\widetilde{{\mathbf{B}}}_2$, as well as blocks they consist of, are not deterministic – since

$\widetilde{{\mathbf{B}}}_2$, as well as blocks they consist of, are not deterministic – since ![]() $n_i$ and

$n_i$ and ![]() $n^{\prime }_i$, defined in (12) and (13) respectively, are sums of independent random variables. As such, we cannot directly compare them. In order to overcome this difficulty, we embed

$n^{\prime }_i$, defined in (12) and (13) respectively, are sums of independent random variables. As such, we cannot directly compare them. In order to overcome this difficulty, we embed ![]() ${\mathbf{B}}_1$ and

${\mathbf{B}}_1$ and ![]() ${\mathbf{B}}_2$ into the following

${\mathbf{B}}_2$ into the following ![]() $n\times n$ matrices:

$n\times n$ matrices:

\begin{equation} {\mathbf{A}}_1\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad{\mathbf{B}}_1 & \quad \mathbf{0}_{|Z|\times |Y_2|} \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,,\quad{\mathbf{A}}_2\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad \mathbf{0}_{|Z|\times |Y_1|} & \quad{\mathbf{B}}_2 \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,. \end{equation}

\begin{equation} {\mathbf{A}}_1\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad{\mathbf{B}}_1 & \quad \mathbf{0}_{|Z|\times |Y_2|} \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,,\quad{\mathbf{A}}_2\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad \mathbf{0}_{|Z|\times |Y_1|} & \quad{\mathbf{B}}_2 \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,. \end{equation}

Note that ![]() ${\mathbf{A}}_1$ and

${\mathbf{A}}_1$ and ![]() ${\mathbf{A}}_2$ have the same size. Also by definition, the entries in

${\mathbf{A}}_2$ have the same size. Also by definition, the entries in ![]() ${\mathbf{A}}_1$ are independent of the entries in

${\mathbf{A}}_1$ are independent of the entries in ![]() ${\mathbf{A}}_2$. If we take expectation over

${\mathbf{A}}_2$. If we take expectation over ![]() $\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m\in{\mathcal M}}$ conditioning on

$\{\boldsymbol{\mathcal{A}}^{(m)}\}_{m\in{\mathcal M}}$ conditioning on ![]() $\{n_i\}_{i=1}^{k}$ and

$\{n_i\}_{i=1}^{k}$ and ![]() $\{n^{\prime }_{i}\}_{i=1}^{k}$, then we obtain the expectation matrices of the imperfect splitting, denoted by

$\{n^{\prime }_{i}\}_{i=1}^{k}$, then we obtain the expectation matrices of the imperfect splitting, denoted by ![]() $\widetilde{{\mathbf{A}}}_1$(resp.

$\widetilde{{\mathbf{A}}}_1$(resp. ![]() $\widetilde{{\mathbf{A}}}_2$), written as

$\widetilde{{\mathbf{A}}}_2$), written as

\begin{equation} \widetilde{{\mathbf{A}}}_1\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad \widetilde{{\mathbf{B}}}_1 & \quad \mathbf{0}_{|Z|\times |Y_2|} \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,,\quad \widetilde{{\mathbf{A}}}_2\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad \mathbf{0}_{|Z|\times |Y_1|} & \quad \widetilde{{\mathbf{B}}}_2 \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,. \end{equation}

\begin{equation} \widetilde{{\mathbf{A}}}_1\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad \widetilde{{\mathbf{B}}}_1 & \quad \mathbf{0}_{|Z|\times |Y_2|} \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,,\quad \widetilde{{\mathbf{A}}}_2\,:\!=\, \begin{bmatrix} \mathbf{0}_{|Z|\times |Z|} & \quad \mathbf{0}_{|Z|\times |Y_1|} & \quad \widetilde{{\mathbf{B}}}_2 \\[4pt]

\mathbf{0}_{|Y|\times |Z|} & \quad \mathbf{0}_{|Y|\times |Y_1|} & \quad \mathbf{0}_{|Y|\times |Y_2|} \end{bmatrix}\,. \end{equation}

The expectation matrix of the perfect splitting, denoted by ![]() $\overline{{\mathbf{A}}}_1$(resp.

$\overline{{\mathbf{A}}}_1$(resp. ![]() $\overline{{\mathbf{A}}}_2$), can be written as

$\overline{{\mathbf{A}}}_2$), can be written as

\begin{equation} \overline{{\mathbf{A}}}_1\,:\!=\, \begin{bmatrix} \mathbf{0}_{ \frac{n}{2} \times \frac{n}{2}} & \quad \overline{{\mathbf{B}}}_1 & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} \\[4pt]

\mathbf{0}_{\frac{n}{2}\times \frac{n}{2}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} \end{bmatrix}\,,\quad \overline{{\mathbf{A}}}_2\,:\!=\, \begin{bmatrix} \mathbf{0}_{ \frac{n}{2} \times \frac{n}{2}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} & \quad \overline{{\mathbf{B}}}_2\\[4pt]

\mathbf{0}_{\frac{n}{2}\times \frac{n}{2}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} \end{bmatrix}\,. \end{equation}

\begin{equation} \overline{{\mathbf{A}}}_1\,:\!=\, \begin{bmatrix} \mathbf{0}_{ \frac{n}{2} \times \frac{n}{2}} & \quad \overline{{\mathbf{B}}}_1 & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} \\[4pt]

\mathbf{0}_{\frac{n}{2}\times \frac{n}{2}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} \end{bmatrix}\,,\quad \overline{{\mathbf{A}}}_2\,:\!=\, \begin{bmatrix} \mathbf{0}_{ \frac{n}{2} \times \frac{n}{2}} & \quad \mathbf{0}_{\frac{n}{2}\times \frac{n}{4}} & \quad \overline{{\mathbf{B}}}_2\\[4pt]