1. Introduction

The model that we analyze in this paper is described as follows. We consider a queue in which there are $m\in{\mathbb N}$ arrivals, corresponding to independent and identically distributed (i.i.d.) arrival times that are sampled from a given distribution on the positive half-line; henceforth we let A denote a non-negative random variable distributed as a generic arrival time. The customers’ service times are i.i.d. as well (and in addition independent of the arrival times), distributed as the non-negative random variable B. With the queue starting empty at time 0, our main objective is to evaluate the resulting queue’s workload distribution, at any given point in time.
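To make the model concrete, the following minimal sketch simulates it. It is an illustration only and not part of the analysis of this paper: the function names `workload_at` and `estimate_idle_probability`, and the sampler arguments, are hypothetical. The workload decreases at unit rate while positive and jumps by a service time at each arrival epoch.

```python
import random


def workload_at(t, arrivals, services):
    """Workload W(t) of a single-server queue that starts empty at time 0.

    arrivals[i] is the arrival time of customer i, services[i] its service time.
    """
    w, last = 0.0, 0.0
    for a, b in sorted(zip(arrivals, services)):  # process customers in arrival order
        if a > t:
            break
        w = max(w - (a - last), 0.0) + b  # drain since the last event, then add the new job
        last = a
    return max(w - (t - last), 0.0)  # drain until time t


def estimate_idle_probability(m, t, sample_arrival, sample_service, runs=20000, seed=1):
    """Monte Carlo estimate of P(W(t) = 0) for m i.i.d. arrival times."""
    rng = random.Random(seed)
    idle = 0
    for _ in range(runs):
        arrivals = [sample_arrival(rng) for _ in range(m)]
        services = [sample_service(rng) for _ in range(m)]
        if workload_at(t, arrivals, services) == 0.0:
            idle += 1
    return idle / runs
```

For instance, `estimate_idle_probability(1, 1.0, lambda r: r.expovariate(1.0), lambda r: r.expovariate(2.0))` estimates the idle probability at time 1 for a single customer with exponential arrival and service times (rates 1 and 2, chosen arbitrarily).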

Note that when considering this model, we depart from the classical queueing paradigm in which the interarrival times are assumed to be i.i.d., rather than the arrival times. This model with i.i.d. interarrival times (or, equivalently, with renewal arrivals) is the intensively studied GI/G/1 queue. There are various compelling reasons to consider our model with i.i.d. arrival times. In the first place, it is observed that renewal arrivals are conceptually problematic, as they require any newly arriving customer to have knowledge of the arrival epoch of the previous customer (except in the case of Poisson arrivals, due to the memoryless property). In the second place, our model could be used to study the situation in which customers decide independently of each other when they want to use a given service. An example could relate to a scenario in which clients choose independently of each other when to visit a shop; the distribution of the arrival time A could reflect the day profile.

We proceed by providing a brief account of the existing literature. Despite the fact that the above model provides a highly natural description of a broad range of service systems, it is considerably less well understood than the more conventional class of queues with renewal-type arrivals. As the ith interarrival time is $A^{(i)}-A^{(i-1)}=\!:\, \Delta^{(i)}$, with $A^{(i)}$ denoting the ith order statistic of the m arrival times, Honnappa et al., in their influential paper [Reference Honnappa, Jain and Ward11], call the system a $\Delta^{(i)}$/G/1 queue. Other names have been used as well: in the terminology of the seminal paper by Glazer and Hassin [Reference Glazer and Hassin7] one would call the system a ?/G/1 queue, while Honnappa [Reference Honnappa10] later used RS/G/1 (RS standing for ‘randomly scattered’).

We do not provide an exhaustive overview of the results available, but restrict ourselves to a few recent key references; a more detailed overview can be found in [Reference Haviv and Ravner9, Section 2.1]. So far, hardly any explicit results are available for the transient queue length (i.e. the number of customers present at a given time t). Under an appropriately chosen scaling of the service-time distribution, [Reference Honnappa, Jain and Ward11] succeeds in developing fluid and diffusion approximations for the limiting regime as $m\to\infty$. In [Reference Bet3, Reference Bet, van der Hofstad and van Leeuwaarden4], it is shown that the queueing process converges in a specific heavy-traffic regime to a reflected Brownian motion with non-linear drift. Sample-path large-deviation results have been established in [Reference Honnappa10]. Under the assumption that the service times are exponentially distributed, a system of Kolmogorov backward equations can be set up, so as to describe the transient queue-length distribution; this method is due to [Reference Glazer and Hassin7] and was further generalized in [Reference Juneja and Shimkin14]. As described in great detail in [Reference Haviv and Ravner9], there is a strong relation to the strategic queueing game in which each customer has to decide when to arrive, without any coordination with the other customers.

We conclude this introduction by detailing this paper’s contributions and organization. As stated in [Reference Honnappa, Jain and Ward11], ‘exact analysis of this model is impossible for general service processes’. While this remains true, to date, for the transient queue-length distribution, the results of this paper show that one can provide a full analysis of the transient workload distribution, albeit in terms of transforms. Specifically, for the case of exponentially distributed arrival times, in Section 2 we develop a technique that provides the Laplace–Stieltjes transform of the workload at an exponentially distributed point in time. As we demonstrate, relying on the powerful computational techniques of [Reference Abate and Whitt1] and [Reference den Iseger12], this enables us to numerically evaluate various relevant workload-related performance metrics.

The second contribution concerns various extensions of this ‘base model’. (a) In the first place we consider in Section 3 the model in which the workload is not necessarily starting empty at time 0. The analysis relies on relations between the workload process and the associated non-reflected process and on a description of the corresponding first-passage time process as a Markov additive process [Reference D’Auria, Ivanovs, Kella and Mandjes5]. (b) Second, in Section 4 we allow an additional Poisson arrival stream, with i.i.d. service times (not necessarily distributed as the service time B of the finite pool of m customers). The analysis reduces to solving a recursion involving m unknown constants that can be identified using a result from [Reference Ivanovs, Boxma and Mandjes13]. (c) Then, in Section 5, we allow the arrival times to be of phase type; this class of distributions is particularly relevant as any random variable on the positive half-line can be approximated arbitrarily closely by a phase-type random variable [Reference Asmussen2, Section III.4]. Also, in the analysis of this case with phase-type arrivals, unknown constants appear, which can again be determined relying on [Reference Ivanovs, Boxma and Mandjes13]. (d) The last variant we consider, in Section 6, is the one where, based on the workload they face when arriving, customers decide whether or not to enter the system. This concept is often referred to as balking, and has been studied in various settings; see e.g. the seminal work [Reference Haight8].

2. Exponentially distributed arrival times

This section focuses on the ‘base model’, in which the generic arrival time A has an exponential distribution with parameter $\lambda>0$. Our objective is to uniquely characterize the queue’s transient workload. We do so by identifying a closed-form expression for the Laplace–Stieltjes transform of the queue’s workload after an exponentially distributed time interval. Throughout, it is assumed that the system starts empty at time 0.

Let the cumulative distribution function of the service times be $B(\!\cdot\!)$. Our focus lies on finding a probabilistic characterization of the workload process $(W(t))_{t\geq 0}$. With $N(t)\in\{0,\ldots,m\}$ denoting the number of clients that have not arrived by time $t\geq 0$, the key object of study is the cumulative distribution function

\begin{equation*} F_t(x,n)\;:\!=\; \mathbb{P}(W(t)\leq x,\, N(t)=n).\end{equation*}

It is noted that W(t) has an atom at 0, in that $\mathbb{P}(W(t) = 0) > 0$ for all $t>0$. For $x>0$, $t>0$, and $n\in\{0,\ldots,m\}$, we introduce the corresponding density

\begin{equation*} f_t(x,n)\;:\!=\; \dfrac{\partial}{\partial x}F_t(x,n),\end{equation*}

and for $t>0$ and $n\in\{0,\ldots,m\}$ the zero-workload probabilities

\begin{equation*} P_t(n)\;:\!=\; \mathbb{P}(W(t)=0,\, N(t)=n).\end{equation*}

Remark 2.1. When considering exponentially distributed arrival times, the overall arrival process considered in our model is equivalent to a non-homogeneous Poisson arrival process, conditioned on m arrivals in total, with arrival rate function $\lambda(t) = C\mathrm{e}^{-\lambda t}$ (where the constant $C>0$ is arbitrary). This observation was made before in [Reference Maillardet and Taylor17].

2.1. Setting up the differential equation

The distribution function $F_t(x,n)$ can be analyzed by a classical approach in which its value at time $t+\Delta t$ is related to its counterpart at time t. Indeed, observe that, as $\Delta t\downarrow 0$, for any $x>0$, $t>0$, and $n\in\{0,\ldots,m-1\}$,

\begin{align}\notag F_{t+\Delta t}(x,n) &= (1-\lambda n\,\Delta t)\cdot F_t(x+\Delta t, n)+\lambda (n+1)\,\Delta t\int_{(0,x]} f_t(y,n+1) \,B(x-y)\,\mathrm{d}y\\[5pt] &\quad+ \lambda (n+1)\,\Delta t \,P_t(n+1) \,B(x) +{\mathrm{o}} (\Delta t).\end{align}

Equation (2.1) has the following straightforward interpretation: the first term on the right-hand side represents the scenario of no arrival between t and $t+\Delta t$, the second term the scenario with one arrival in combination with the workload prior to the arrival being positive, and the third term the scenario with one arrival in combination with the workload prior to the arrival being zero; we remark that scenarios with more than one arrival are absorbed in the ${\mathrm{o}} (\Delta t)$ term.

After subtracting $F_t(x,n)$ from both sides of (2.1), dividing the full equation by $\Delta t$, and sending $\Delta t$ to 0, we obtain the following partial differential equation: for any $x>0$, $t>0$, and $n\in\{0,\ldots,m-1\}$,

\begin{align}\notag\dfrac{\partial}{\partial t}F_t(x,n)-f_t(x,n) &=-\,\lambda n\, F_t(x, n) +\lambda (n+1)\int_{(0,x]} f_t(y,n+1) \,B(x-y)\,\mathrm{d}y\\[5pt] & \quad +\lambda (n+1)\,P_t(n+1) \,B(x).\end{align}

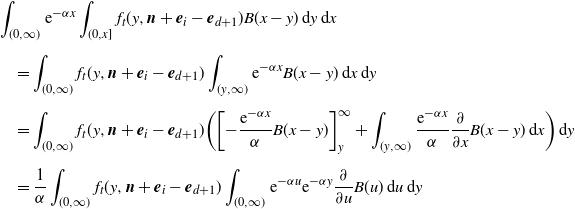

2.2. Double transform

In order to uniquely characterize the solution of this partial differential equation, we work with a double transform. To this end, we first multiply the full equation (2.2) by $\mathrm{e}^{-\alpha x}$, for $\alpha\geq 0$, and integrate over $x\in(0,\infty)$, so as to convert the partial differential equation into an ordinary differential equation. In this analysis, we work extensively with the object

\begin{equation*} {\mathscr{F}}_t(\alpha,n)\;:\!=\; \int_{(0,\infty)} \mathrm{e}^{-\alpha x}\,f_t(x,n)\,\mathrm{d}x.\end{equation*}

By applying integration by parts, it is readily verified that, for any $n\in\{0,\ldots,m-1\}$,

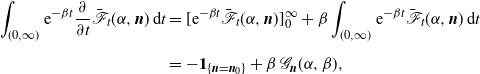

By applying Fubini, and denoting ${\mathscr{B}}(\alpha)\;:\!=\; {\mathbb E}\,\mathrm{e}^{-\alpha B}$, again for any $n\in\{0,\ldots,m-1\}$,

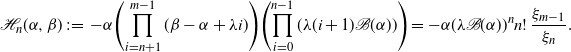

Upon combining the above identities, we readily arrive at the following (ordinary) differential equation:

\begin{align*} \dfrac{\partial}{\partial t}\dfrac{P_t(n)+{\mathscr{F}}_t(\alpha,n)}{\alpha} - {\mathscr{F}}_t(\alpha,n) &= -\lambda n\,\dfrac{P_t(n)+{\mathscr{F}}_t(\alpha,n)}{\alpha} \\[5pt] &\quad +\lambda (n+1)\,\dfrac{(P_t(n+1)+{\mathscr{F}}_t(\alpha,n+1))\,{\mathscr{B}}(\alpha)}{\alpha},\end{align*}

which, with $\bar {\mathscr{F}}_t(\alpha,n)\;:\!=\; P_t(n)+{\mathscr{F}}_t(\alpha,n)$, simplifies (after multiplication by $\alpha$) to

\begin{equation*} \dfrac{\partial}{\partial t}\bar{\mathscr{F}}_t(\alpha,n) - \alpha\,\bigl(\bar{\mathscr{F}}_t(\alpha,n)-P_t(n)\bigr) = -\lambda n\,\bar{\mathscr{F}}_t(\alpha,n) +\lambda (n+1)\,{\mathscr{B}}(\alpha)\,\bar{\mathscr{F}}_t(\alpha,n+1).\end{equation*}

The next step is to transform once more: we multiply the full equation in the previous display by $\mathrm{e}^{-\beta t}$, for $\beta>0$, and integrate over $t\in(0,\infty)$, with the objective of turning the ordinary differential equation of the previous display into an algebraic equation. We use the notation

\begin{align*} {\mathscr{P}}_n(\beta)&\;:\!=\; \int_{(0,\infty)} \mathrm{e}^{-\beta t}\,P_t(n)\,\mathrm{d}t,\\[5pt] {\mathscr{G}}_n(\alpha,\beta) &\equiv {\mathscr{G}}_n(\alpha,\beta \mid m)\;:\!=\; \int_{(0,\infty)} \mathrm{e}^{-\beta t}\,\bar{\mathscr{F}}_t(\alpha,n)\,\mathrm{d}t.\end{align*}

Using the same techniques as above, and noting that $\bar{\mathscr{F}}_0(\alpha,n)=0$ for $n\in\{0,\ldots,m-1\}$ because $N(0)=m$, we obtain, for $n\in\{0,\ldots,m-1\}$,

\begin{equation} (\beta-\alpha+\lambda n)\,{\mathscr{G}}_n(\alpha,\beta) = \lambda (n+1)\,{\mathscr{B}}(\alpha)\,{\mathscr{G}}_{n+1}(\alpha,\beta) - \alpha\,{\mathscr{P}}_n(\beta),\end{equation}

so that we arrive at the recursion

\begin{equation} {\mathscr{G}}_n(\alpha,\beta) = \dfrac{\lambda (n+1)\,{\mathscr{B}}(\alpha)\,{\mathscr{G}}_{n+1}(\alpha,\beta) - \alpha\,{\mathscr{P}}_n(\beta)}{\beta-\alpha+\lambda n}.\end{equation}

The case $n=m$ can be dealt with explicitly. Indeed, observing that if $N(t)=m$ no arrival can have occurred before time t, so that the workload is necessarily zero, we find

\begin{equation*} {\mathscr{G}}_m(\alpha,\beta)={\mathscr{P}}_m(\beta)=\int_{(0,\infty)} \mathrm{e}^{-\beta t}\,\mathrm{e}^{-\lambda m t}\,\mathrm{d}t = \dfrac{1}{\beta+\lambda m}.\end{equation*}

Remark 2.2. Note that the object $\beta\,{\mathscr{G}}_n(\alpha,\beta \mid m)$ has an appealing interpretation:

\begin{equation*} \beta\,{\mathscr{G}}_n(\alpha,\beta \mid m) = {\mathbb E}\bigl[\mathrm{e}^{-\alpha W(T_\beta)}\,{\mathbf 1}\{N(T_\beta)=n\}\bigr],\end{equation*}

with $T_\beta$ an exponentially distributed random variable with mean $\beta^{-1}$, independent of anything else. This observation will be relied upon extensively in Section 3.

2.3. Explicit solution

The recursion (2.4) can be readily solved, by repeated insertion. The eventual result is given in Theorem 2.1, but we first sketch the underlying approach, to explicitly identify all expressions involved.

Denoting the coefficients in the recursion by

\begin{equation*} \gamma_n\;:\!=\; \dfrac{\lambda (n+1)\,{\mathscr{B}}(\alpha)}{\beta-\alpha+\lambda n},\qquad \delta_n\;:\!=\; -\dfrac{\alpha\,{\mathscr{P}}_n(\beta)}{\beta-\alpha+\lambda n},\end{equation*}

we obtain the standard solution

\begin{equation}{\mathscr{G}}_n(\alpha,\beta)= {\mathscr{G}}_m(\alpha,\beta)\prod_{i=n}^{m-1}\gamma_i+\sum_{j=n}^{m-1}\delta_j\prod_{i=n}^{j-1}\gamma_i,\end{equation}
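The unrolled form of a first-order linear recursion $x_n=\gamma_n x_{n+1}+\delta_n$ can be checked mechanically. The sketch below is a generic illustration with hypothetical function names (`unrolled`, `iterated`); it compares the closed form of the display above with direct backward iteration for arbitrary coefficient lists.

```python
from math import prod


def unrolled(n, x_m, gamma, delta):
    """Closed form x_n = x_m * prod_{i=n}^{m-1} gamma_i + sum_{j=n}^{m-1} delta_j * prod_{i=n}^{j-1} gamma_i."""
    m = len(gamma)
    head = x_m * prod(gamma[n:m])
    # prod of an empty slice equals 1, matching the empty-product convention
    tail = sum(delta[j] * prod(gamma[n:j]) for j in range(n, m))
    return head + tail


def iterated(n, x_m, gamma, delta):
    """Backward iteration of x_k = gamma_k * x_{k+1} + delta_k from k = m-1 down to k = n."""
    x = x_m
    for k in range(len(gamma) - 1, n - 1, -1):
        x = gamma[k] * x + delta[k]
    return x
```

Both functions agree for every starting index n, which is the content of the standard solution formula.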

following the convention that the empty product is defined as one. At this point, it is left to determine the unknown functions ${\mathscr{P}}_n(\beta)$, for $n=0,\ldots,m-1$. That can be done by noting that any root of the denominator should be a root of the numerator too. To this end, observe that we can rewrite the expression for ${\mathscr{G}}_0(\alpha,\beta)$ in the form

\begin{equation} {\mathscr{G}}_0(\alpha,\beta) = \dfrac{{\mathscr{H}}_m(\alpha,\beta)+\sum_{n=0}^{m-1}{\mathscr{H}}_n(\alpha,\beta)\,{\mathscr{P}}_n(\beta)}{\prod_{n=0}^{m-1}(\beta-\alpha+\lambda n)},\end{equation}

for appropriately chosen functions ${\mathscr{H}}_{n}(\alpha,\beta)$, with $n=0,1,\ldots,m$. Note that the (distinct) roots of the denominator are $\alpha_j\;:\!=\; \beta+\lambda j>0$, with $j=0,\ldots,m-1$. This means that the unknown functions ${\mathscr{P}}_n(\beta)$ can be found by solving the following linear equations: for $j=0,\ldots,m-1$,

\begin{equation} -{\mathscr{H}}_{m}(\alpha_j,\beta)=\sum_{n=0}^{m-1} {\mathscr{H}}_{n}(\alpha_j,\beta)\,{\mathscr{P}}_n(\beta).\end{equation}

Hence we can find the m unknowns from these m equations.

We proceed by explicitly identifying the above objects. In these derivations, we make extensive use of the compact notations

\begin{equation*} \xi_j\;:\!=\; \prod_{n=0}^{j}(\beta-\alpha+\lambda n),\qquad \eta_j\;:\!=\; \prod_{n=0}^{j}\lambda(n+1)\,{\mathscr{B}}(\alpha) = (\lambda{\mathscr{B}}(\alpha))^{j+1}\,(j+1)!,\end{equation*}

with empty products being defined as 1. We can rewrite (2.5) in the form of (2.6), as follows:

\begin{align*} \mathscr{G}_0(\alpha,\beta) &= {\mathscr{G}}_m(\alpha,\beta)\dfrac{\prod_{n=0}^{m-1}\lambda(n+1){\mathscr{B}}(\alpha)}{\prod_{n=0}^{m-1}(\beta - \alpha+ \lambda n)} + \sum_{j=0}^{m-1}\delta_j\dfrac{\prod_{n=0}^{j-1}\lambda(n+1){\mathscr{B}}(\alpha)}{\prod_{n=0}^{j-1}(\beta - \alpha+ \lambda n)} \\[5pt] &= \dfrac{\mathscr{H}_m(\alpha,\beta) + \sum_{j=0}^{m-1}\delta_j(\xi_{m-1}/\xi_{j-1})\eta_{j-1}}{\xi_{m-1}} \\[5pt] &= \dfrac{\mathscr{H}_m(\alpha,\beta) - \sum_{j=0}^{m-1}\alpha(\xi_{m-1}/\xi_j)\eta_{j-1}\,\mathscr{P}_j(\beta)}{\xi_{m-1}} \\[5pt] &= \dfrac{\mathscr{H}_m(\alpha,\beta) + \sum_{n=0}^{m-1} \mathscr{H}_n(\alpha,\beta) \mathscr{P}_n(\beta)}{\xi_{m-1}},\end{align*}

where

\begin{equation*} \mathscr{H}_m(\alpha,\beta)\;:\!=\; {\mathscr{G}}_m(\alpha,\beta)\,\eta_{m-1} = \dfrac{(\lambda{\mathscr{B}}(\alpha))^{m}\,m!}{\beta+\lambda m}\end{equation*}

and, for $n\in\{0,\ldots,m-1\}$,

\begin{align*} \mathscr{H}_n(\alpha,\beta) &\;:\!=\; -\alpha\Biggl(\prod_{i=n+1}^{m-1}(\beta - \alpha + \lambda i)\Biggr)\Biggl(\prod_{i=0}^{n-1}(\lambda(i+1)\mathscr{B}(\alpha))\Biggr) = -\alpha(\lambda \mathscr{B}(\alpha))^{n}n!\,\dfrac{\xi_{m-1}}{\xi_n}.\end{align*}

Given these expressions for $\mathscr{H}_n(\alpha,\beta)$, we can now identify expressions for $\mathscr{P}_n(\beta)$ as well. To this end, note that $\mathscr{H}_n(\alpha_j,\beta) = 0$ for $j\in\{n+1,\ldots, m-1\}$, because $\alpha_j$ is then a root of the first product in the definition of $\mathscr{H}_n(\alpha,\beta)$.

We first determine ${\mathscr{P}}_{m-1}(\beta)$. Substituting $\alpha_{m-1}$ into (2.7) gives

\begin{equation*} -{\mathscr{H}}_{m}(\alpha_{m-1},\beta)=\sum_{n=0}^{m-1} {\mathscr{H}}_{n}(\alpha_{m-1},\beta)\,{\mathscr{P}}_n(\beta) = {\mathscr{H}}_{m-1}(\alpha_{m-1},\beta)\,{\mathscr{P}}_{m-1}(\beta),\end{equation*}

so that

\begin{equation*} {\mathscr{P}}_{m-1}(\beta) = -\dfrac{{\mathscr{H}}_{m}(\alpha_{m-1},\beta)}{{\mathscr{H}}_{m-1}(\alpha_{m-1},\beta)} = \dfrac{\lambda m\,{\mathscr{B}}(\alpha_{m-1})}{\alpha_{m-1}\,(\beta+\lambda m)}.\end{equation*}

For $n\in\{0,\ldots,m-1\}$ we then have, substituting $\alpha_n$ into (2.7) and using that ${\mathscr{H}}_{k}(\alpha_n,\beta)=0$ for $k<n$,

\begin{equation} {\mathscr{P}}_{n}(\beta) = -\dfrac{{\mathscr{H}}_{m}(\alpha_{n},\beta)+\sum_{k=n+1}^{m-1}{\mathscr{H}}_{k}(\alpha_{n},\beta)\,{\mathscr{P}}_k(\beta)}{{\mathscr{H}}_{n}(\alpha_{n},\beta)}.\end{equation}

This means that the linear system (2.7) can be solved recursively: when plugging $n= m-2$ into equation (2.8), we obtain ${\mathscr{P}}_{m-2}(\beta)$ in terms of ${\mathscr{P}}_{m-1}(\beta)$, after which ${\mathscr{P}}_{m-3}(\beta)$ can be expressed in terms of ${\mathscr{P}}_{m-2}(\beta)$ and ${\mathscr{P}}_{m-1}(\beta)$, and so on. The next theorem summarizes our findings thus far.

Theorem 2.1. (Base model.) For any $\alpha\geq 0$ and $\beta>0$, and $n\in\{0,\ldots,m-1\}$, the transform ${\mathscr{G}}_n(\alpha,\beta \mid m)$ is given by (2.5), where the transforms ${\mathscr{P}}_{0}(\beta),\ldots,{\mathscr{P}}_{m-1}(\beta)$ follow from the recursion (2.8), and ${\mathscr{G}}_m(\alpha,\beta \mid m)={\mathscr{P}}_m(\beta)=({\beta+\lambda m})^{-1}$.
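As a sanity check, the case $m=1$ can be worked out by hand. The computation below is a sketch that does not appear in this form in the text; it assumes that the recursion for ${\mathscr{G}}_n(\alpha,\beta)$ reads ${\mathscr{G}}_n = (\lambda(n+1){\mathscr{B}}(\alpha){\mathscr{G}}_{n+1}-\alpha{\mathscr{P}}_n(\beta))/(\beta-\alpha+\lambda n)$. With a single customer, the workload is zero and the customer has arrived precisely when $A+B\leq t$, so that ${\mathscr{P}}_0(\beta)$ can be computed directly:

\begin{align*} {\mathscr{G}}_1(\alpha,\beta \mid 1) &= {\mathscr{P}}_1(\beta) = \dfrac{1}{\beta+\lambda},\\[5pt] {\mathscr{P}}_0(\beta) &= \int_{(0,\infty)} \mathrm{e}^{-\beta t}\,\mathbb{P}(A+B\leq t)\,\mathrm{d}t = \dfrac{1}{\beta}\,\dfrac{\lambda}{\lambda+\beta}\,{\mathscr{B}}(\beta),\\[5pt] {\mathscr{G}}_0(\alpha,\beta \mid 1) &= \dfrac{1}{\beta-\alpha}\biggl(\dfrac{\lambda\,{\mathscr{B}}(\alpha)}{\beta+\lambda} - \alpha\,{\mathscr{P}}_0(\beta)\biggr).\end{align*}

The numerator in the last line indeed vanishes at $\alpha=\alpha_0=\beta$, and at $\alpha=0$ we recover $\beta\,{\mathscr{G}}_0(0,\beta \mid 1)=\lambda/(\lambda+\beta)$, the probability that the customer has arrived before an independent exponentially distributed time with mean $\beta^{-1}$.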

Remark 2.3. There is a related way to derive, for $n\in\{0,\ldots,m-1\}$, expressions for the transforms $\mathscr{P}_n(\beta)$. To this end, note that for the root of the denominator in (2.4), i.e. $\alpha_n = \beta+\lambda n$, the numerator must also equal zero. This leads to the relation

\begin{equation*} \lambda (n+1)\,{\mathscr{B}}(\alpha_n)\,{\mathscr{G}}_{n+1}(\alpha_n,\beta) - \alpha_n\,{\mathscr{P}}_n(\beta) = 0,\end{equation*}

which rewritten gives

\begin{equation} {\mathscr{P}}_n(\beta) = \dfrac{\lambda (n+1)\,{\mathscr{B}}(\alpha_n)\,{\mathscr{G}}_{n+1}(\alpha_n,\beta)}{\alpha_n}.\end{equation}

Together with equation (2.4) and ${\mathscr{G}}_m(\alpha,\beta)={\mathscr{P}}_m(\beta)=({\beta+\lambda m})^{-1}$, (2.9) can be solved recursively as well. Specifically, equations (2.4) and (2.9) are to be applied alternately: from the known expression for ${\mathscr{G}}_m(\alpha,\beta)$ we find $\mathscr{P}_{m-1}(\beta)$ by (2.9), then ${\mathscr{G}}_{m-1}(\alpha,\beta)$ (for any $\alpha\geq 0$) follows from (2.4), then $\mathscr{P}_{m-2}(\beta)$ again by (2.9), and so on.
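The alternating scheme of this remark is easy to put in code. The following is a minimal sketch, not the authors' implementation; it assumes that the recursion reads $\mathscr{G}_n = (\lambda(n+1)\mathscr{B}(\alpha)\mathscr{G}_{n+1} - \alpha\mathscr{P}_n(\beta))/(\beta-\alpha+\lambda n)$ and that the root condition reads $\mathscr{P}_n(\beta) = \lambda(n+1)\mathscr{B}(\alpha_n)\mathscr{G}_{n+1}(\alpha_n,\beta)/\alpha_n$. The names `solve_base_model` and `lst_B` (a callable returning $\mathscr{B}(\alpha)$) are hypothetical.

```python
def solve_base_model(m, lam, beta, lst_B):
    """Return (P, G): P[n] approximates P_n(beta), and G(n, alpha) evaluates G_n(alpha, beta).

    Assumed relations (see the lead-in):
      G_n = (lam*(n+1)*B(alpha)*G_{n+1} - alpha*P_n) / (beta - alpha + lam*n)
      P_n = lam*(n+1)*B(alpha_n)*G_{n+1}(alpha_n, beta) / alpha_n,  alpha_n = beta + lam*n
    """
    P = [0.0] * (m + 1)
    P[m] = 1.0 / (beta + lam * m)  # G_m = P_m = 1/(beta + lam*m)

    def G(n, alpha):
        # Downward recursion from G_m; alpha must differ from beta + lam*k for n <= k < m.
        g = P[m]
        for k in range(m - 1, n - 1, -1):
            g = (lam * (k + 1) * lst_B(alpha) * g - alpha * P[k]) / (beta - alpha + lam * k)
        return g

    for n in range(m - 1, -1, -1):
        a_n = beta + lam * n
        # G(n + 1, a_n) only uses P[n+1], ..., P[m], which are already known.
        P[n] = lam * (n + 1) * lst_B(a_n) * G(n + 1, a_n) / a_n
    return P, G
```

For exponentially distributed service times with rate `mu` one would pass `lst_B = lambda a: mu / (mu + a)`. A useful consistency check is that the values `beta * G(n, 0.0)` sum to one over `n = 0, ..., m`, since at $\alpha=0$ they are the probabilities of the events $\{N(T_\beta)=n\}$.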

2.4. Alternative approach

We now detail an alternative procedure by which the transforms ${\mathscr{P}}_0(\beta),\ldots,{\mathscr{P}}_{m-1}(\beta)$ can be determined. The main reason why we include it here is that in Section 4 we will rely extensively on the underlying argumentation; the account below serves to introduce the concepts in an elementary setting.

Denote ${\boldsymbol{V}}(\alpha,\beta)=({\mathscr{V}}_0(\alpha,\beta),\ldots,{\mathscr{V}}_{m-1}(\alpha,\beta))^{\top}$, where the ith component is given by

\begin{equation*} {\mathscr{V}}_i(\alpha,\beta)\;:\!=\; \alpha\,{\mathscr{P}}_i(\beta) - \dfrac{\lambda m\,{\mathscr{B}}(\alpha)}{\beta+\lambda m}\,{\mathbf 1}\{i=m-1\}.\end{equation*}

In addition, let ${\boldsymbol{G}}(\alpha,\beta)=({\mathscr{G}}_0(\alpha,\beta),\ldots,{\mathscr{G}}_{m-1}(\alpha,\beta))^{\top}$. Then it is easily checked that the system of equations (2.3) can be rewritten in matrix–vector notation as

\begin{equation*} M(\alpha,\beta)\,{\boldsymbol{G}}(\alpha,\beta) = {\boldsymbol{V}}(\alpha,\beta).\end{equation*}

Here the $(i,i+1)$th entry (for $i\in\{0,\ldots,m-2\}$) of the $m\times m$ matrix $M(\alpha,\beta)$ is given by $\lambda(i+1)\,{\mathscr{B}}(\alpha)$, and the (i, i)th entry (for $i\in\{0,\ldots,m-1\}$) by $\alpha-\beta-\lambda i$; all other entries are zero.

The next observation is that the transpose of $M(\alpha,\beta)$ is the so-called matrix exponent of a Markov additive process (MAP) [Reference Asmussen2, Section XI.2]. This can be seen as follows.

• A MAP is defined by a dimension $d\in{\mathbb N}$, a possibly defective $d\times d$ transition rate matrix Q governing a background process, jump sizes $J_{ij}$ corresponding to transitions by the background process from state i to state j (with $i,j\in\{1,\ldots,d\}$ and $i\not=j$), and Lévy processes $Y_i(t)$ with Laplace exponents $\varphi_i(\!\cdot\!)$ that are active when the background process is in state i (with $i\in\{1,\ldots,d\}$). When the jumps are non-negative and the Lévy processes spectrally positive, and when imposing killing at rate $\beta>0$, the matrix exponent of this MAP, for $\alpha\geq 0$, is given by

\begin{equation} \operatorname{diag}\{\varphi_1(\alpha)-\beta,\ldots,\varphi_d(\alpha)-\beta\}+ Q\circ {\mathscr{J}}(\alpha),\end{equation}

with $\circ$ denoting the Hadamard product, and the (i, j)th entry of ${\mathscr{J}}(\alpha)$ defined by ${\mathbb E}\,\mathrm{e}^{-\alpha J_{ij}}.$

• Then one can directly verify that the transpose of our matrix $M(\alpha,\beta)$ is of the form (2.10); recall that $\alpha$ is the Laplace exponent of a deterministic drift of rate 1.

We proceed by studying the roots of $\det M(\alpha,\beta)$ (for any given $\beta>0$), which evidently coincide with the roots of $\det M(\alpha,\beta)^\top$. Applying the machinery developed in [Reference Ivanovs, Boxma and Mandjes13] and [Reference van Kreveld, Mandjes and Dorsman15] for matrix exponents of Markov additive processes, we conclude that it has m roots in the right half of the complex $\alpha$-plane, say $\alpha_0,\ldots,\alpha_{m-1}$. Technically, our instance fits into the framework of [Reference van Kreveld, Mandjes and Dorsman15, Proposition 2], in that the underlying background process is not irreducible (with state 0 being an absorbing state).

The next step is to observe that, by Cramer’s rule, for $n\in\{0,\ldots,m-1\}$,

\[{\mathscr{G}}_n(\alpha,\beta)=\dfrac{\det M_n(\alpha,\beta)}{\det M(\alpha,\beta)},\]

where $M_n(\alpha,\beta)$ is defined as $M(\alpha,\beta)$, with the nth column replaced by ${\boldsymbol{V}}(\alpha,\beta)$. This means that, for $j\in\{0,\ldots,m-1\}$, $\det M_n(\alpha_j,\beta)=0$ for $n\in\{0,\ldots,m-1\}$. This seemingly leads to $m^2$ (linear) equations in the m unknowns ${\mathscr{P}}_0(\beta),\ldots,{\mathscr{P}}_{m-1}(\beta)$, but it turns out that all equations corresponding to the same $\alpha_j$ effectively contain the same information: if $\det M(\alpha,\beta)=\det M_n(\alpha,\beta)=0$, then also $\det M_{n'}(\alpha,\beta)=0$ for $n'\not =n$; to see this, exactly the same reasoning as in [Reference van Kreveld, Mandjes and Dorsman15, Section 3.3.1] can be followed.

In our specific case the roots $\alpha_j=\beta+\lambda j$, for $j\in\{0,\ldots,m-1\}$, are distinct. We thus end up with m linear equations in equally many unknowns. We conclude this section by applying the above method, so as to recover our previous result (2.8).

Lemma 2.1. The determinant of the matrix $M_n(\alpha,\beta)$ defined above is given by, for $n\in\{0,\ldots,m-1\}$,

\begin{equation*} \det M_n(\alpha,\beta) = C_n(\alpha,\beta)\prod_{i=0,\, i\neq n}^{m-1} (\alpha - \beta - \lambda i), \end{equation*}

with

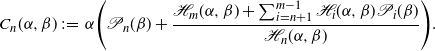

\begin{equation*} C_n(\alpha,\beta) \;:\!=\; \alpha \Biggl(\mathscr{P}_n(\beta) + \dfrac{\mathscr{H}_m(\alpha,\beta) + \sum_{i=n+1}^{m-1}\mathscr{H}_i(\alpha,\beta)\mathscr{P}_i(\beta)}{\mathscr{H}_n(\alpha,\beta)}\Biggr). \end{equation*}


Proof. See Appendix A.

According to the above recipe, for all $j\in\{0,\ldots, m-1\}$ we necessarily have $\det M_n(\alpha_j, \beta) = 0$. From Lemma 2.1 we find that this is indeed the case for all $\alpha_j = \beta + \lambda j$ with $j\neq n$, as they are the roots of the product term appearing in $\det M_n(\alpha,\beta)$. Inserting $j=n$ into $\det M_n(\alpha_j, \beta) = 0$, we conclude that $C_n(\alpha_n, \beta) = 0$, from which it follows that (2.8) applies.
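
To make the last step explicit, here is a hedged sketch of what $C_n(\alpha_n,\beta)=0$ amounts to. It relies only on the expression for $C_n(\alpha,\beta)$ in Lemma 2.1 and assumes that ${\mathscr{H}}_n(\alpha_n,\beta)$ is finite and non-zero. Since $\alpha_n=\beta+\lambda n>0$, the factor between brackets has to vanish, so that

\begin{equation*} {\mathscr{P}}_n(\beta) = -\,\dfrac{{\mathscr{H}}_m(\alpha_n,\beta) + \sum_{i=n+1}^{m-1}{\mathscr{H}}_i(\alpha_n,\beta)\,{\mathscr{P}}_i(\beta)}{{\mathscr{H}}_n(\alpha_n,\beta)}. \end{equation*}

Under these assumptions the unknowns can be computed in the order ${\mathscr{P}}_{m-1}(\beta),\ldots,{\mathscr{P}}_0(\beta)$, as the right-hand side involves only ${\mathscr{P}}_i(\beta)$ with $i>n$.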

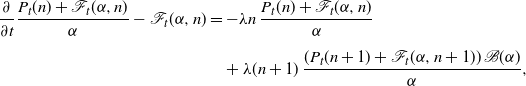

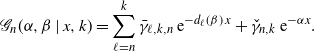

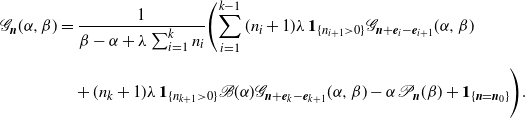

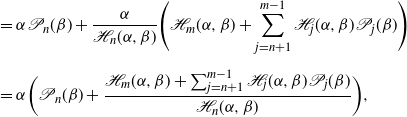

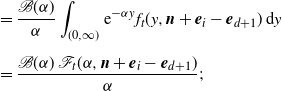

Figure 1. Mean workload (a), variance of the workload (b), and empty-buffer probability (c) in the base model, as functions of time, for different values of m.

In Figure 1 we plot, for different values of the number of customers m, the mean workload ${\mathbb E}\,W(t)$, its variance $\operatorname{Var} W(t)$, and the probability of an empty buffer ${\mathbb P}(W(t)=0)$, as functions of time. These are numerically obtained by converting, in the obvious manner, the recursion for the double transform into recursions involving

\begin{gather*}{\int_0^\infty}\mathrm{e}^{-\beta t} {\mathbb E}\bigl(W(t) \,{\textbf{1}}_{\{N(t)=n\}}\bigr)\,\mathrm{d}t,\quad {\int_0^\infty}\mathrm{e}^{-\beta t} {\mathbb E}\bigl(W(t)^2 \,{\textbf{1}}_{\{N(t)=n\}}\bigr)\,\mathrm{d}t \\[5pt] \text{and}\quad \int_0^\infty \mathrm{e}^{-\beta t}{\mathbb P}(W(t)=0,N(t)=n)\,\mathrm{d}t,\end{gather*}


and then performing numerical Laplace inversion [Reference Abate and Whitt1] with respect to $\beta.$ The service times are exponentially distributed, and we have used $\lambda=\mu=1.$
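
The inversion step can be sketched in a few lines of Python. The routine below is a minimal implementation of the Euler-summation scheme commonly associated with Abate and Whitt; whether this is the exact variant used for Figure 1 is an assumption, and the function name `euler_invert` and the default parameters `A`, `n`, `m` are illustrative choices. As a check it inverts $1/(\beta+\lambda m)$, which is the transform of $\mathrm{e}^{-\lambda m t}$, the probability that none of the m customers has arrived by time t.

```python
import math

def euler_invert(F, t, A=18.4, n=15, m=11):
    """Approximate f(t) from its Laplace transform F (callable on complex s).

    Trapezoidal discretisation of the Bromwich integral, followed by
    Euler (binomial) averaging of the partial sums n, ..., n + m.
    """
    def partial_sum(N):
        total = 0.5 * F(complex(A / (2 * t), 0.0)).real
        for k in range(1, N + 1):
            s = complex(A, 2 * k * math.pi) / (2 * t)
            total += (-1) ** k * F(s).real
        return math.exp(A / 2) / t * total

    return sum(math.comb(m, k) * partial_sum(n + k) for k in range(m + 1)) / 2 ** m

# Hypothetical instance: lambda = 1 and m = 3, evaluated at t = 0.5.
approx = euler_invert(lambda s: 1.0 / (s + 3.0), 0.5)
exact = math.exp(-1.5)
```

The parameter `A` controls the discretisation error (roughly of order $\mathrm{e}^{-A}$ for bounded functions), while `n` and `m` control the truncation of the alternating series.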

3. Starting at arbitrary initial workload

So far we have assumed that at time zero the workload level equals zero. In this section we generalize this to cover the case in which we start at any initial workload level $x\geq 0$. The object of our interest is

\begin{equation}\tag{3.1} \bar {\mathscr{G}}_{n}(\alpha,\beta \mid x,m)\;:\!=\;{\mathbb E}\bigl(\mathrm{e}^{-\alpha W(T_\beta)}{\textbf{1}}_{\{N(T_\beta)=n\}} \mid W(0)=x,\,N(0)=m\bigr);\end{equation}

here $T_\beta$ again denotes an exponentially distributed random variable with mean $\beta^{-1}$, independent of anything else. Henceforth we alternatively denote the right-hand side of (3.1) by

\[{\mathbb E}_{x,m}\bigl(\mathrm{e}^{-\alpha W(T_\beta)}{\textbf{1}}_{\{N(T_\beta)=n\}}\bigr);\]

that is, we systematically use subscripts to indicate the initial conditions.

3.1. Derivation of the transform

The workload process W(t) is often referred to as the reflected process. The process cannot drop below zero; when there is no work in the system and there is service capacity available, the workload level remains 0. In the present section, we work intensively with the process $Y(\!\cdot\!)$, to be interpreted as the position of the associated free process (or non-reflected process). In particular, this means that, for any given $t\geq 0$, Y(t) represents the amount of work that has arrived in (0,t] minus what could potentially have been served (i.e. t).

We further define the stopping time $\sigma(x)\;:\!=\; \inf\{t\colon Y(t)<-x\}$, which is the first time that the buffer is empty given that the initial workload is x. Observe that $Y(\sigma(x))=-x$ almost surely, as the process Y(t) has no negative jumps.

We also work with the counterpart of (3.1) for the free process:

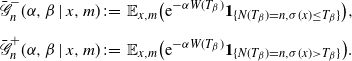

So as to analyze the quantity under study, we distinguish between two disjoint scenarios: the scenario in which the workload has idled before $T_\beta$ and its complement. This means that we split $\bar {\mathscr{G}}_{n}(\alpha,\beta \mid x,m)= \bar {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m)+ \bar {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)$, with, in self-evident notation,

\begin{align*} \bar {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m)&\;:\!=\;{\mathbb E}_{x,m}\bigl(\mathrm{e}^{-\alpha W(T_\beta)}{\textbf{1}}_{\{N(T_\beta)=n,\sigma(x)\leq T_\beta\}}\bigr),\\[5pt] \bar {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)&\;:\!=\; {\mathbb E}_{x,m}\bigl(\mathrm{e}^{-\alpha W(T_\beta)}{\textbf{1}}_{\{N(T_\beta)=n,\sigma(x)>T_\beta\}}\bigr).\end{align*}


We evaluate the objects $\bar {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m)$ and $\bar {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)$ separately.

Observe that, by the strong Markov property in combination with the memoryless property of $T_\beta$ and the arrival times,

\begin{equation}\tag{3.2} \bar {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m)=\sum_{k=n}^m {\mathbb P}_m(N(\sigma(x))=k,\,\sigma(x)\leq T_\beta)\,\bar {\mathscr{G}}_n(\alpha,\beta \mid 0,k),\end{equation}

where we already know from Theorem 2.1 how we can evaluate $\bar {\mathscr{G}}_n(\alpha,\beta \mid 0,k)=\beta \,{\mathscr{G}}_n(\alpha,\beta \mid k)$; see Remark 2.2. The interpretation underlying the decomposition on the right-hand side of (3.2) is that the queue starts empty at time $\sigma(x)$.

We proceed by analyzing the remaining quantity, $\bar {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)$. To this end, we first observe that

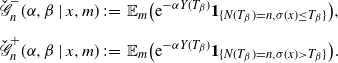

$\check {\mathscr{G}}_{n}(\alpha,\beta \mid m)= \check {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m)+ \check {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)$, where, in self-evident notation,

\begin{align*} \check {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m)&\;:\!=\;{\mathbb E}_{m}\bigl(\mathrm{e}^{-\alpha Y(T_\beta)}{\textbf{1}}_{\{N(T_\beta)=n,\sigma(x)\leq T_\beta\}}\bigr),\\[5pt] \check {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)&\;:\!=\; {\mathbb E}_{m}\bigl(\mathrm{e}^{-\alpha Y(T_\beta)}{\textbf{1}}_{\{N(T_\beta)=n,\sigma(x)>T_\beta\}}\bigr).\end{align*}


The crucial step is that on the event $\{\sigma(x)>T_\beta\}$ it holds that $W(T_\beta) = x+Y(T_\beta)$. As a consequence,

\[\bar {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)=\mathrm{e}^{-\alpha x}\,\check {\mathscr{G}}_{n}^+(\alpha,\beta \mid x,m)=\mathrm{e}^{-\alpha x}\,\check {\mathscr{G}}_{n}(\alpha,\beta \mid m)-\mathrm{e}^{-\alpha x}\,\check {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m).\]

The second term on the right-hand side of the previous display can be further evaluated, using $Y(\sigma(x))=-x$ in combination with the memoryless property. Using the same reasoning as before, we thus find

\[\check {\mathscr{G}}_{n}^-(\alpha,\beta \mid x,m)=\mathrm{e}^{\alpha x}\sum_{k=n}^m {\mathbb P}_m(N(\sigma(x))=k,\,\sigma(x)\leq T_\beta)\,\check {\mathscr{G}}_n(\alpha,\beta \mid k).\]

From the above, we conclude that it suffices to be able to evaluate the objects, for $k\in\{0,\ldots,m\}$ and $n\in\{0,\ldots,k\}$,

\[p_{m,k}(x,\beta)\;:\!=\;{\mathbb P}_m(N(\sigma(x))=k,\,\sigma(x)\leq T_\beta)\quad\text{and}\quad \check {\mathscr{G}}_n(\alpha,\beta \mid k).\]

The latter quantity can be evaluated by solving a system of linear equations, while the former is slightly harder to analyze.

-

• It is readily verified that, for $n\in\{0,\ldots,k\}$,

\begin{equation}\tag{3.3}\check {\mathscr{G}}_n(\alpha,\beta \mid k) =\dfrac{\lambda k}{\lambda k + \beta -\alpha}{\mathscr{B}}(\alpha) \,\check{\mathscr{G}}_n(\alpha,\beta \mid k-1)\,{\textbf{1}}_{\{k>n\}} +\dfrac{\beta}{\lambda k + \beta -\alpha}\,{\textbf{1}}_{\{k=n\}}.\end{equation}

Writing this system in the usual matrix–vector form, it is readily seen that it is diagonally dominant, ensuring the system has a unique solution. Alternatively, one can solve the system recursively (starting at $k=n$).

-

• We apply results from [Reference D’Auria, Ivanovs, Kella and Mandjes5] to identify the probabilities $p_{m,k}(x,\beta)$ for $k\in\{0,\ldots,m\}$. We first observe that $(\sigma(x),N(\sigma(x)))$ is a MAP in $x\geq 0$ [Reference D’Auria, Ivanovs, Kella and Mandjes5, Section 1.2]; note that this MAP does not have any non-decreasing subordinator states.

To identify its characteristics, we first define the MAP corresponding to the free process Y(t). To this end, we introduce a matrix $K(\alpha,\beta)$ with the (i, i)th entry given by $\alpha-\lambda i -\beta$ (for $i\in\{0,\ldots,m\}$), and the $(i,i-1)$th entry given by $\lambda i \,{\mathscr{B}}(\alpha)$ (for $i\in\{1,\ldots,m\}$), and all other entries equal to 0. The roots, for a given value of $\beta>0$, of $\det K(\alpha,\beta) =0$ are ${\boldsymbol{d}}(\beta)\;:\!=\; (\beta,\lambda +\beta,\ldots,\lambda m +\beta)^{\top}$. The corresponding eigenvectors, solving $K({\alpha})\,{\boldsymbol{v}} = 0$, can be evaluated recursively; calling these ${\boldsymbol{v}}_0,\ldots,{\boldsymbol{v}}_m$, we let V be a matrix of which the columns are these vectors. Then, by [Reference D’Auria, Ivanovs, Kella and Mandjes5, equation (2)], in combination with [Reference D’Auria, Ivanovs, Kella and Mandjes5, Theorem 1] and the fact that Y(t) does not have any non-decreasing subordinator states, we obtain

\begin{align}\tag{3.4} p_{m,k}(x,\beta)& = \bigl(\exp\!(\!-V D(\beta)\,V^{-1}\,x)\bigr)_{m,k}=\bigl(V\exp\!(\!-D(\beta)\,x)V^{-1}\bigr)_{m,k},\end{align}

with $D(\beta)\;:\!=\; \operatorname{diag}\{{\boldsymbol{d}}(\beta)\}$.
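
The eigenvector recursion and the matrix expression (3.4) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `hitting_probabilities` and the argument `lst_B` (a callable returning ${\mathscr{B}}(\alpha)$) are hypothetical, each eigenvector is normalised so that its diagonal entry equals 1, and $\lambda>0$ is assumed so that the roots are distinct. Row i of $K(d_j,\beta)\,{\boldsymbol{v}}=0$ reads $\lambda i\,{\mathscr{B}}(d_j)\,v_{i-1}+\lambda(j-i)\,v_i=0$, which yields the forward recursion used below.

```python
import math

def hitting_probabilities(m, x, beta, lam, lst_B):
    """Return [p_{m,0}(x, beta), ..., p_{m,m}(x, beta)] via V exp(-D x) V^{-1}."""
    d = [beta + lam * j for j in range(m + 1)]          # roots d_j(beta)
    # Column j of V solves K(d_j, beta) v = 0 with v_j = 1:
    # v_i = 0 for i < j and v_i = v_{i-1} * i * B(d_j) / (i - j) for i > j.
    V = [[0.0] * (m + 1) for _ in range(m + 1)]
    for j in range(m + 1):
        V[j][j] = 1.0
        for i in range(j + 1, m + 1):
            V[i][j] = V[i - 1][j] * i * lst_B(d[j]) / (i - j)
    # w is row m of V exp(-D x); the answer r satisfies r V = w.
    w = [V[m][j] * math.exp(-d[j] * x) for j in range(m + 1)]
    r = [0.0] * (m + 1)
    # V is lower triangular with unit diagonal, so back substitution suffices.
    for k in range(m, -1, -1):
        r[k] = w[k] - sum(r[i] * V[i][k] for i in range(k + 1, m + 1))
    return r

# Hypothetical instance: one client still to arrive, exponential service with mu = 1.
p = hitting_probabilities(1, 0.7, 0.5, 1.0, lambda a: 1.0 / (1.0 + a))
```

For m = 1 the output can be checked by hand: $p_{1,1}(x,\beta)=\mathrm{e}^{-(\beta+\lambda)x}$ (no arrival before the free process reaches $-x$) and $p_{1,0}(x,\beta)={\mathscr{B}}(\beta)\bigl(\mathrm{e}^{-\beta x}-\mathrm{e}^{-(\beta+\lambda)x}\bigr)$.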

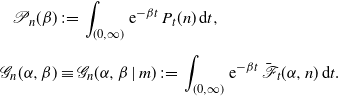

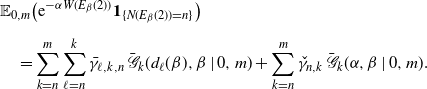

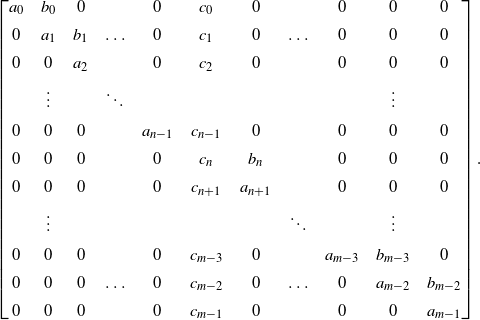

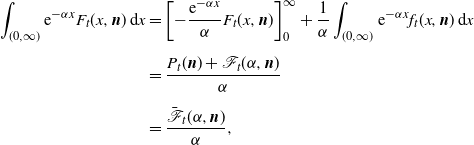

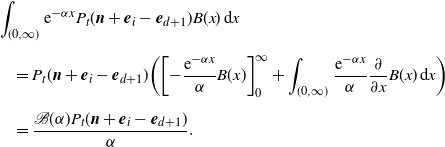

Combining the above findings, we have established the following result, which is numerically illustrated in Figure 2 (where the same instance is considered as in Figure 1).

Figure 2. Mean workload (a) and empty-buffer probability (b) in the model with initial workload x, as functions of time, for different values of m and for $x=1$. The lines in light gray denote the base model, in which case $x=0$.

Theorem 3.1. (Model with arbitrary initial workload.) For any $\alpha\geq 0$ and $\beta>0$, and $n\in\{0,\ldots,m\}$, the transform $\bar{\mathscr{G}}_n(\alpha,\beta \mid x,m)$ is given by

\begin{align*} \bar{\mathscr{G}}_n(\alpha,\beta \mid x,m)& =\sum_{k=n}^m p_{m,k}(x,\beta)\,\bar{\mathscr{G}}_n(\alpha,\beta \mid 0,k)\\[5pt] &\quad + \mathrm{e}^{-\alpha x}\,\check {\mathscr{G}}_n(\alpha,\beta \mid m) - \sum_{k=n}^{m} p_{m,k}(x,\beta)\,\check {\mathscr{G}}_n(\alpha,\beta \mid k), \end{align*}


where (i) $\bar {\mathscr{G}}_n(\alpha,\beta \mid 0,k)=\beta \,{\mathscr{G}}_n(\alpha,\beta \mid k)$ follows from Theorem 2.1, (ii) $\check {\mathscr{G}}_n(\alpha,\beta \mid k)$ can be found recursively from the equations (3.3), and (iii) $p_{m,k}(x,\beta)$ is given by (3.4).
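
Ingredient (ii) is the simplest of the three to compute. The sketch below unrolls recursion (3.3) starting at $k=n$; the function name `free_transform` and the argument `lst_B` (a callable returning ${\mathscr{B}}(\alpha)$) are illustrative assumptions, and the denominators $\lambda j+\beta-\alpha$ are assumed to be non-zero for $j\in\{n,\ldots,k\}$.

```python
def free_transform(n, k, alpha, beta, lam, lst_B):
    """Evaluate check-G_n(alpha, beta | k) by unrolling recursion (3.3)."""
    if k < n:
        return 0.0                                   # both indicators in (3.3) vanish
    value = beta / (lam * n + beta - alpha)          # base case k = n
    for j in range(n + 1, k + 1):                    # step from j - 1 to j clients
        value *= lam * j * lst_B(alpha) / (lam * j + beta - alpha)
    return value

# Hypothetical instance: exponential service times with mu = 1, lambda = 1, beta = 0.5.
lst_exp = lambda a: 1.0 / (1.0 + a)
total = sum(free_transform(n, 4, 0.0, 0.5, 1.0, lst_exp) for n in range(5))
```

At $\alpha=0$ the values reduce to the probabilities that n clients are still to arrive at time $T_\beta$, so summing over n should give 1; this is a convenient sanity check.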

In the remainder of this section we present two immediate consequences of Theorem 3.1, both pertaining to the system starting empty at time 0: the first one describes the distribution of the first busy period, and the second the workload at an Erlang-distributed time epoch.

3.2. Busy period

In this subsection, we analyze the workload process’s first busy period $\sigma$, which is distributed as $\sigma(B)$, with $m-1$ clients still to arrive from that point on.

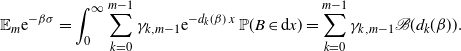

The results of the previous subsection entail that

\begin{align*} {\mathbb E}_m\mathrm{e}^{-\beta \sigma(x)} &=\mathbb{P}_m(\sigma(x)\leq T_\beta)\\[5pt] &= \sum_{k=0}^m {\mathbb P}_m(N(\sigma(x))=k, \sigma(x)\leq T_\beta)\\[5pt] &=\sum_{k=0}^m\bigl(\exp\!(\!-V D(\beta)\,V^{-1}\,x)\bigr)_{m,k}\\[5pt] &=\sum_{k=0}^m (V\exp\!(\!-D(\beta)\,x)V^{-1})_{m,k}\\[5pt] &=\sum_{k=0}^m \gamma_{k,m} \mathrm{e}^{-d_k(\beta)\,x},\end{align*}


for suitably chosen coefficients $\gamma_{k,m}$, with $k\in\{0,\ldots,m\}$. As a consequence, recognizing the Laplace–Stieltjes transform of B, we find that

\begin{align*} {\mathbb E}_m \mathrm{e}^{-\beta\sigma}&= \int_0^\infty \sum_{k=0}^{m-1} \gamma_{k,m-1} \mathrm{e}^{-d_k(\beta)\,x}\,{\mathbb P}(B\in\mathrm{d}x)= \sum_{k=0}^{m-1} \gamma_{k,m-1} {\mathscr{B}}(d_k(\beta)).\end{align*}
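
As a small worked example, obtained by directly conditioning on the arrival time of a single remaining client rather than from the matrix expression, consider the case of one client still to arrive. If this client arrives before the free process reaches $-x$, which happens with probability $1-\mathrm{e}^{-\lambda x}$, the hitting time is $x+B$; otherwise it is x. Hence

\begin{equation*} {\mathbb E}_1 \mathrm{e}^{-\beta\sigma(x)} = \mathrm{e}^{-(\beta+\lambda)x} + (1-\mathrm{e}^{-\lambda x})\,\mathrm{e}^{-\beta x}\,{\mathscr{B}}(\beta) = {\mathscr{B}}(\beta)\,\mathrm{e}^{-d_0(\beta)\,x} + (1-{\mathscr{B}}(\beta))\,\mathrm{e}^{-d_1(\beta)\,x}, \end{equation*}

that is, $\gamma_{0,1}={\mathscr{B}}(\beta)$ and $\gamma_{1,1}=1-{\mathscr{B}}(\beta)$. For $m=2$ the formula for ${\mathbb E}_m \mathrm{e}^{-\beta\sigma}$ then yields

\begin{equation*} {\mathbb E}_2 \mathrm{e}^{-\beta\sigma} = {\mathscr{B}}(\beta)^2 + (1-{\mathscr{B}}(\beta))\,{\mathscr{B}}(\beta+\lambda), \end{equation*}

whereas for $m=1$ one has $\gamma_{0,0}=1$ and the busy period is simply distributed as B.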

3.3. Erlang horizon

In this subsection we point out how to compute the transform of $W(E_\beta(2))$ conditional on $W(0)=0$, where $E_\beta(2)$ is an Erlang random variable with two phases and scale parameter $\beta$ (or, put differently, $E_\beta(2)$ is distributed as the sum of two independent exponentially distributed random variables with mean $\beta^{-1}$).

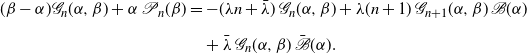

Note that the quantity of our interest can be rewritten as

\[{\mathbb E}_{0,m}\bigl(\mathrm{e}^{-\alpha W(E_\beta(2))}{\textbf{1}}_{\{N(E_\beta(2))=n\}}\bigr)=\sum_{k=n}^m\int_{[0,\infty)}{\mathbb P}_{0,m}(W(T_\beta)\in\mathrm{d}x,\,N(T_\beta)=k)\,\bar {\mathscr{G}}_n(\alpha,\beta \mid x,k)\]

for $n\in\{0,\ldots,m\}$. For suitably chosen coefficients $\bar \gamma_{\ell,k,n}$ (with $k\in\{n,\ldots,m\}$ and $\ell\in\{n,\ldots,k\}$) and $\check \gamma_{n,k}$ (with $k\in\{n,\ldots,m\}$), by virtue of Theorem 3.1,

\[{\mathscr{G}}_n(\alpha,\beta \mid x,k) = \sum_{\ell=n}^k\bar \gamma_{\ell,k,n} \, \mathrm{e}^{-d_\ell(\beta)\,x}+ \check \gamma_{n,k}\,\mathrm{e}^{-\alpha x}. \]


Upon combining the above observations,

\begin{align*}& {\mathbb E}_{0,m}\bigl(\mathrm{e}^{-\alpha W(E_\beta(2))}{\textbf{1}}_{\{N(E_\beta(2))=n\}}\bigr)\\[5pt] &\quad = \sum_{k=n}^m\sum_{\ell=n}^k \bar \gamma_{\ell,k,n}\,\bar {\mathscr{G}}_k(d_\ell(\beta),\beta \mid 0,m)+\sum_{k=n}^m \check\gamma_{n,k}\,\bar {\mathscr{G}}_k(\alpha,\beta \mid 0,m).\end{align*}


This procedure extends in the obvious way to an Erlang horizon with more than two phases. Along the same lines, the joint distribution of the workload at times $T_{\beta_1}$ and $T_{\beta_1}+T_{\beta_2}$, with $T_{\beta_1}$ and $T_{\beta_2}$ independent exponentially distributed random variables with means $\beta_1^{-1}$ and $\beta_2^{-1}$, respectively, can be established.

4. External Poisson arrival stream

In this section we consider the case of an external Poisson stream of customers with i.i.d. service times. As before, our objective is to characterize the distribution of the transient workload in terms of Laplace–Stieltjes transforms.

The arrival rate of these external arrivals is $\bar\lambda \geq 0$, and the i.i.d. service times are distributed as the generic non-negative random variable $\bar B$ with cumulative distribution function $\bar B(\!\cdot\!)$ and Laplace–Stieltjes transform $\bar{\mathscr{B}}(\alpha)\;:\!=\; {\mathbb E}\,\mathrm{e}^{-\alpha \bar B}$. Picking $\lambda=0$ or $m=0$, we have a conventional M/G/1 queue, whereas for $\bar\lambda=0$ we recover the model of Section 2.

4.1. Recursion for the double transform

The counterpart of the partial differential equation (2.2) for this model with external Poisson arrivals is, for $x>0$, $t>0$ and $n\in\{0,\ldots,m-1\}$,

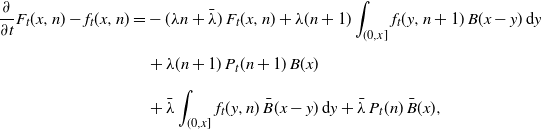

\begin{align}\notag\dfrac{\partial}{\partial t}F_t(x,n)-f_t(x,n) &=-\,(\lambda n+\bar\lambda)\, F_t(x, n) +\lambda (n+1)\int_{(0,x]} f_t(y,n+1) \,B(x-y)\,\mathrm{d}y\\[5pt] & \quad +\lambda (n+1)\,P_t(n+1) \,B(x)\notag \\[5pt] & \quad +\bar\lambda \int_{(0,x]} f_t(y,n) \,\bar B(x-y)\,\mathrm{d}y+\bar\lambda \,P_t(n) \,\bar B(x),\end{align}


while for $n=m$ we have

\begin{equation*}\dfrac{\partial}{\partial t}F_t(x,m)-f_t(x,m) =-\,(\lambda m+\bar\lambda)\, F_t(x, m)+\bar\lambda \int_{(0,x]} f_t(y,m) \,\bar B(x-y)\,\mathrm{d}y+\bar\lambda \,P_t(m) \,\bar B(x).\end{equation*}

Multiplying (4.1) by $\mathrm{e}^{-\alpha x}\mathrm{e}^{-\beta t}$ and integrating over positive x and t, we obtain the algebraic equation

\begin{align}({\beta}-{\alpha}){\mathscr{G}}_n(\alpha,\beta)+\alpha \,{\mathscr{P}}_n(\beta)& = -(\lambda n+\bar\lambda)\,{{\mathscr{G}}_n(\alpha,\beta)}+\lambda (n+1)\,{{\mathscr{G}}_{n+1}(\alpha,\beta)\,{\mathscr{B}}(\alpha)}\notag\\[5pt] &\quad +\bar\lambda \,{\mathscr{G}}_n(\alpha,\beta)\,\bar{\mathscr{B}}(\alpha).\end{align}


Along the same lines,

\begin{equation}\tag{4.3}({\beta}-{\alpha}){\mathscr{G}}_m(\alpha,\beta)-1+\alpha \,{\mathscr{P}}_m(\beta) = -(\lambda m+\bar\lambda)\,{\mathscr{G}}_m(\alpha,\beta)+\bar\lambda \,{\mathscr{G}}_m(\alpha,\beta)\,\bar{\mathscr{B}}(\alpha).\end{equation}

4.2. Solving the double transform

As in Section 2, we start by finding an expression for ${\mathscr{G}}_m(\alpha,\beta)$. To this end, we isolate ${\mathscr{G}}_m(\alpha,\beta)$ in (4.3), so as to obtain

\begin{equation}\tag{4.4}{\mathscr{G}}_m(\alpha,\beta)=\dfrac{1-\alpha\,{\mathscr{P}}_m(\beta)}{\beta+\lambda m-\alpha+\bar\lambda(1-\bar{\mathscr{B}}(\alpha))}.\end{equation}

The next step is to identify the unknown ${\mathscr{P}}_m(\beta)$. Define $\Phi(\alpha)\;:\!=\; \alpha-\bar\lambda(1-\bar{\mathscr{B}}(\alpha))$, in which we recognize the Laplace exponent of a compound Poisson process with drift, and $\Psi(\!\cdot\!)$ its right-inverse. Observing that the denominator of (4.4) vanishes when inserting $\alpha=\Psi(\beta+\lambda m)$, so that the numerator has to vanish there as well, we conclude that ${\mathscr{P}}_m(\beta)=1/\Psi(\beta+\lambda m)$. This means that we have identified ${\mathscr{G}}_m(\alpha,\beta)$ as well:

\begin{equation}\tag{4.5}{\mathscr{G}}_m(\alpha,\beta)=\dfrac{1-\alpha/\Psi(\beta+\lambda m)}{\beta+\lambda m-\Phi(\alpha)}.\end{equation}

This is a familiar expression (see e.g. [Reference Dębicki and Mandjes6, Theorem 4.1]); compare the transform of the workload in an M/G/1 queue with arrival rate $\bar\lambda$ and service times distributed as $\bar B$, at an exponentially distributed time with mean $(\beta+\lambda m)^{-1}$.
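
In general the right-inverse $\Psi(\!\cdot\!)$ has no closed form, but it is easy to evaluate numerically. The sketch below uses plain bisection, which is one of several possible root finders and not necessarily what was used for the experiments; the function name `right_inverse` and the argument `lst_B_bar` (a callable returning $\bar{\mathscr{B}}(\alpha)$) are illustrative. It uses that $\Phi(0)=0<q$ and $\Phi(q+\bar\lambda)\geq q$, so that the root lies in $(0,q+\bar\lambda]$.

```python
def right_inverse(q, lam_bar, lst_B_bar):
    """Psi(q) for q > 0: the positive root alpha of Phi(alpha) = q, by bisection."""
    def phi(alpha):
        return alpha - lam_bar * (1.0 - lst_B_bar(alpha))

    lo, hi = 0.0, q + lam_bar          # phi(lo) < q <= phi(hi)
    for _ in range(200):               # fixed number of halvings, ample for doubles
        mid = 0.5 * (lo + hi)
        if phi(mid) < q:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

# Hypothetical instance: lam_bar = 1, exponential external service with mu_bar = 5,
# lambda = 1, m = 3 and beta = 0.5, so that q = beta + lambda * m = 3.5.
psi_value = right_inverse(3.5, 1.0, lambda a: 5.0 / (5.0 + a))
P_m = 1.0 / psi_value                  # P_m(beta) = 1 / Psi(beta + lambda * m)
```

For exponentially distributed $\bar B$ with rate $\bar\mu$ the equation $\Phi(\alpha)=q$ reduces to the quadratic $\alpha^2+(\bar\mu-\bar\lambda-q)\alpha-q\bar\mu=0$, whose positive root can serve as a check.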

We proceed by pointing out how ${\mathscr{G}}_0(\alpha,\beta),\ldots,{\mathscr{G}}_{m-1}(\alpha,\beta)$ can be found. We adopt the approach presented in Remark 2.3. For $n\in\{0,\ldots,m-1\}$, equation (4.2) leads to

\begin{equation}\tag{4.6}{\mathscr{G}}_n(\alpha,\beta)=\dfrac{\lambda (n+1)\,{\mathscr{B}}(\alpha)\,{\mathscr{G}}_{n+1}(\alpha,\beta)-\alpha\,{\mathscr{P}}_n(\beta)}{\beta+\lambda n-\Phi(\alpha)}.\end{equation}

Observe that $\alpha_n\;:\!=\; \Psi(\beta+\lambda n)$ is a root of the denominator, and hence a root of the numerator as well, leading to the equation

\begin{equation}\tag{4.7}{\mathscr{P}}_n(\beta)=\dfrac{\lambda (n+1)\,{\mathscr{B}}(\alpha_n)\,{\mathscr{G}}_{n+1}(\alpha_n,\beta)}{\alpha_n};\end{equation}

cf. equation (2.9). Now the key idea, as in Remark 2.3, is to apply equations (4.7) and (4.6) alternately: inserting $n=m-1$ in (4.7) yields ${\mathscr{P}}_{m-1}(\beta)$, then inserting $n=m-1$ in (4.6) yields ${\mathscr{G}}_{m-1}(\alpha,\beta)$, and so on. We have established the following result.

Theorem 4.1. (Model with external Poisson arrival stream.) For any $\alpha\geq 0$ and $\beta>0$, and $n\in\{0,\ldots,m-1\}$, the transform ${\mathscr{G}}_n(\alpha,\beta \mid m)$ is given by (4.6), where the transforms ${\mathscr{P}}_{0}(\beta),\ldots,{\mathscr{P}}_{m-1}(\beta)$ follow from recursion (4.7), with ${\mathscr{P}}_m(\beta)=1/\Psi(\beta+\lambda m)$, and ${\mathscr{G}}_m(\alpha,\beta \mid m)$ is given by (4.5).

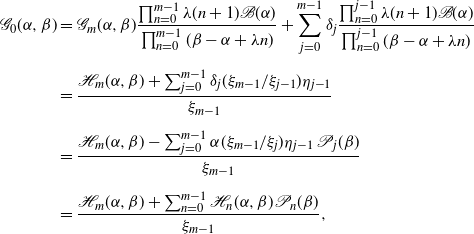

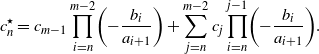

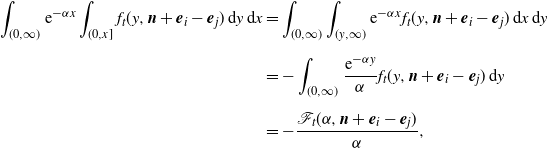

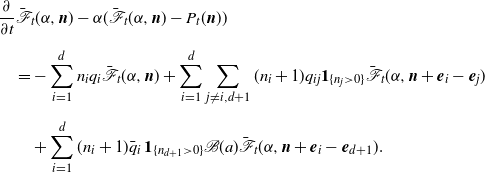

This result is numerically illustrated in Figure 3. As in the previous numerical experiments, we worked with exponentially distributed service times and $\lambda=\mu=1$. The service times of the external Poisson stream are exponentially distributed as well, with parameter $\bar\mu=5$.

Figure 3. Mean workload (a) and empty-buffer probability (b), in the model with an external Poisson arrival stream, as functions of time, for different values of m. Here, the external Poisson arrival stream has rate parameter $\bar\lambda=1$ and the service times of the customers are exponentially distributed with rate ${\bar{\mu}} = {5}$. The lines in light gray denote the base model, in which case $\bar{\lambda}=0$.

5. Phase-type distributed arrival times

In Section 2 we saw that for exponentially distributed arrival times our model provides a closed-form solution, so it is natural to ask whether the approach extends to a more general class of arrival-time distributions, namely the phase-type distributions. This class is particularly relevant, as any non-negative random variable can be approximated arbitrarily closely by a phase-type random variable [Reference Asmussen2, Section III.4]; the ‘denseness’ proof of [Reference Asmussen2, Theorem III.4.2] actually reveals that we can even restrict ourselves to a subclass of the phase-type distributions, namely the class of mixtures of Erlang distributions with different shape parameters but the same scale parameter. The same proof also shows an intrinsic drawback of working with this specific class: one may need distributions of large dimension to get an accurate fit. This has motivated working with a low-dimensional two-moment fit, such as the one presented in [Reference Tijms18]. In this fit, a distribution with a coefficient of variation less than 1 is matched by a mixture of two Erlang random variables (with the same scale parameter), and a distribution with a coefficient of variation larger than 1 by a hyperexponential random variable; for details see e.g. [Reference Kuiper, Mandjes, de Mast and Brokkelkamp16, Section 3.1].
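To make the two-moment fit concrete, the following Python sketch matches a given mean and squared coefficient of variation. It assumes the commonly used textbook form of the fit (a mixture of Erlang($k-1$) and Erlang($k$) with a common rate when the squared coefficient of variation is below 1, and a hyperexponential distribution with balanced means otherwise); these formulas are an assumption on our side and are not quoted from the cited references. The names `two_moment_fit` and `moments` are purely illustrative.

```python
import math


def two_moment_fit(mean, scv):
    """Match a mean and a squared coefficient of variation (scv).

    Returns a list of (probability, shape, rate) triples, each standing for
    an Erlang(shape, rate) branch of a mixture. Assumed textbook formulas.
    """
    if mean <= 0 or scv <= 0:
        raise ValueError("mean and scv must be positive")
    if scv >= 1.0:
        # Hyperexponential fit with balanced means: p1/rate1 == p2/rate2.
        p1 = 0.5 * (1.0 + math.sqrt((scv - 1.0) / (scv + 1.0)))
        p2 = 1.0 - p1
        return [(p1, 1, 2.0 * p1 / mean), (p2, 1, 2.0 * p2 / mean)]
    # Erlang mixture: choose k such that 1/k <= scv <= 1/(k-1).
    k = max(2, math.ceil(1.0 / scv))
    root = math.sqrt(max(0.0, k * (1.0 + scv) - k * k * scv))
    p = (k * scv - root) / (1.0 + scv)
    rate = (k - p) / mean
    return [(p, k - 1, rate), (1.0 - p, k, rate)]


def moments(branches):
    """Mean and squared coefficient of variation of an Erlang mixture."""
    m1 = sum(p * k / r for p, k, r in branches)
    m2 = sum(p * k * (k + 1) / r ** 2 for p, k, r in branches)
    return m1, m2 / m1 ** 2 - 1.0
```

Calling `moments(two_moment_fit(mean, scv))` should return the input pair up to rounding, which gives a quick sanity check of the fit before it is used as an arrival-time distribution.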

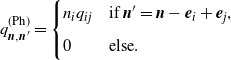

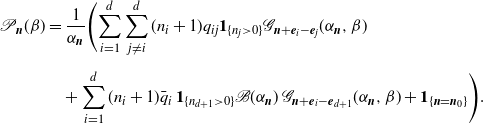

This section discusses the distribution of the transient workload in the case when the arrival times A are of phase type. An explicit expression in terms of transforms is still possible, albeit at the price of working with a large state space.
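To give a feeling for the size of this state space, the sketch below counts the vectors ${\boldsymbol{n}}\in{\mathbb N}_0^{d+1}$ whose entries sum to m, which by a standard stars-and-bars argument equals $\binom{m+d}{d}$. The helper names `num_states` and `enumerate_states` are hypothetical and serve only as an illustration.

```python
from math import comb


def num_states(m, d):
    """Number of vectors in N_0^{d+1} whose entries sum to m (stars and bars)."""
    return comb(m + d, d)


def enumerate_states(m, d):
    """Yield all such vectors as tuples of length d + 1 (for small m and d)."""
    def rec(remaining, slots):
        if slots == 1:
            yield (remaining,)
            return
        for first in range(remaining + 1):
            for rest in rec(remaining - first, slots - 1):
                yield (first,) + rest
    yield from rec(m, d + 1)
```

For instance, `num_states(50, 4)` evaluates to 316251, whereas the exponential case $d=1$ requires only $m+1$ states.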

5.1. Set-up

We define the phase-type distribution of the generic arrival time A via the initial probability distribution $\boldsymbol{\gamma}\in{\mathbb R}^{d+1}$ (with $\gamma_i \geq 0$ for all phases i and $\boldsymbol{\gamma}^\top{\textbf{1}}=1$) and the transition matrix $Q=(q_{ij})_{i,j=1}^{d+1}$ (with non-negative off-diagonal elements and $Q{\textbf{1}}={\textbf{0}}$); define $q_i\;:\!=\; -q_{ii}$ and $\bar q_i\;:\!=\; q_{i,d+1}$. The states 1 up to $d+1$ are commonly referred to as the phases underlying the phase-type distribution. We assume that phase $d+1$ is absorbing; from phase $i\in\{1,\ldots,d\}$ the process jumps to this phase with rate $\bar q_i$, after which the arrival takes place. As before, the m arrivals are independent of each other (and of the service times).
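The set-up above can be illustrated by a small simulation sketch that draws arrival times by running the underlying Markov chain until absorption. It assumes that every non-absorbing phase has a strictly positive exit rate $q_i$; the function name `sample_arrival_time` and the Erlang-2 example are hypothetical choices made only for illustration.

```python
import random


def sample_arrival_time(gamma, Q, rng):
    """Draw one arrival time A from the phase-type distribution (gamma, Q).

    Phases are 0-indexed here, so the absorbing phase d+1 of the text is index d.
    Assumes q_i > 0 for every non-absorbing phase i.
    """
    d = len(gamma) - 1
    phase = rng.choices(range(d + 1), weights=gamma)[0]
    t = 0.0
    while phase != d:
        q_i = -Q[phase][phase]           # total rate of leaving the current phase
        t += rng.expovariate(q_i)        # exponential holding time in this phase
        targets = [j for j in range(d + 1) if j != phase]
        weights = [Q[phase][j] for j in targets]
        phase = rng.choices(targets, weights=weights)[0]
    return t


# Hypothetical example: Erlang-2 arrival times with rate 2 per phase (mean 1).
gamma = [1.0, 0.0, 0.0]
Q = [[-2.0, 2.0, 0.0],
     [0.0, -2.0, 2.0],
     [0.0, 0.0, 0.0]]

rng = random.Random(1)
m = 5
arrival_times = sorted(sample_arrival_time(gamma, Q, rng) for _ in range(m))
```

Sorting the m independent draws yields the order in which the customers reach the queue, which is how the i.i.d. arrival times of our model translate into an arrival stream.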

Let ${\boldsymbol{N}}(t)$ denote the state at time $t\geq 0$, in the sense that the ith component of ${\boldsymbol{N}}(t)$ denotes the number of the m clients that are in phase i at time t. It is clear that ${\boldsymbol{N}}_0$ has a multinomial distribution with parameters m and $\gamma_1,\ldots,\gamma_{d+1}$, i.e. for any vector ${\boldsymbol{n}}_0\in{\mathbb N}^{d+1}_0$ such that ${\boldsymbol{n}}_0^\top {\textbf{1}}= m$,

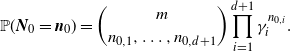

\[{\mathbb P}({\boldsymbol{N}}_0={\boldsymbol{n}}_0)= \binom{m}{n_{0,1},\ldots,n_{0,d+1}} \prod_{i=1}^{d+1} \gamma_i^{n_{0,i}}.\]

Henceforth we therefore condition, without loss of generality, on the event $\{{\boldsymbol{N}}_0={\boldsymbol{n}}_0\}$; the transform of interest can be evaluated by deconditioning.
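The deconditioning step can be sketched as follows: a quantity computed conditionally on $\{{\boldsymbol{N}}_0={\boldsymbol{n}}_0\}$ is averaged against the multinomial weights given above. The function names and the callback `conditional_value` are hypothetical; in practice the callback would return the conditional transform of interest.

```python
from math import factorial, prod


def multinomial_pmf(n0, gamma):
    """P(N_0 = n0) for m = sum(n0) independent clients with initial phase law gamma."""
    coeff = factorial(sum(n0))
    for k in n0:
        coeff //= factorial(k)           # stays an integer at every step
    return coeff * prod(g ** k for g, k in zip(gamma, n0))


def compositions(m, parts):
    """All tuples of `parts` non-negative integers summing to m."""
    if parts == 1:
        yield (m,)
        return
    for first in range(m + 1):
        for rest in compositions(m - first, parts - 1):
            yield (first,) + rest


def decondition(conditional_value, m, gamma):
    """Average a quantity computed given {N_0 = n0} over the law of N_0."""
    return sum(multinomial_pmf(n0, gamma) * conditional_value(n0)
               for n0 in compositions(m, len(gamma)))
```

As a sanity check, deconditioning the constant 1 returns 1, and deconditioning the first component of ${\boldsymbol{n}}_0$ returns $m\gamma_1$.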

The key object of interest is the cumulative distribution function

\[F_t(x,{\boldsymbol{n}}) \;:\!=\; \mathbb{P}(W(t)\leq x,\, {\boldsymbol{N}}(t) = {\boldsymbol{n}}),\]

with ${\boldsymbol{n}} = (n_1, \dots, n_{d+1})\in{\mathbb N}^{d+1}_0$ such that ${\boldsymbol{n}}^\top {\textbf{1}}= m$. In this section we work with the usual notation: the corresponding density is denoted by $f_t(x, {\boldsymbol{n}})$ for $x>0$, while $P_t({\boldsymbol{n}})$ is used to denote $\mathbb{P}(W(t)=0, {\boldsymbol{N}}(t) = {\boldsymbol{n}})$. Mimicking the steps followed in Section 2, for any $x>0$, up to ${\mathrm{o}} (\Delta t)$ terms,

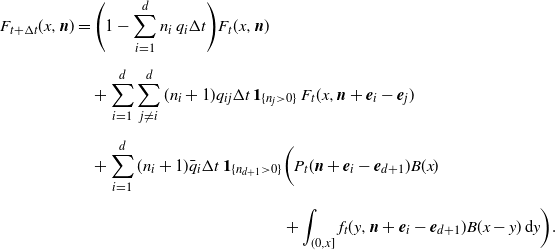

\begin{align}\notag F_{t+\Delta t}(x,{\boldsymbol{n}})& = \Biggl(1 - \sum_{i=1}^dn_i\,q_i\Delta t\Biggr)F_t(x,{\boldsymbol{n}}) \\[5pt] &\quad + \sum_{i=1}^{d}\sum_{j\not =i}^d(n_i+1)q_{ij}\Delta t \,{\textbf{1}}_{\{n_j>0\}}\, F_t(x,{\boldsymbol{n}} + {\boldsymbol{e}}_i- {\boldsymbol{e}}_j) \notag \\[5pt] &\quad + \sum_{i=1}^d (n_i+1)\bar q_i\Delta t \,{\textbf{1}}_{\{n_{d+1} > 0\}}\biggl( P_t({\boldsymbol{n}} + {\boldsymbol{e}}_i - {\boldsymbol{e}}_{d+1})B(x) \notag \\[5pt] &\hspace{44mm} + \int_{(0,x]} f_t(y,{\boldsymbol{n}}+{\boldsymbol{e}}_i - {\boldsymbol{e}}_{d+1})B(x-y)\,\mathrm{d}y \biggr).\end{align}

This equation can be understood as follows. As in the exponential case dealt with in Section 2, the first term on the right-hand side corresponds to the scenario that no transitions between phases take place between t and $t + \Delta t$. The second term represents the transitions between two phases but not to the final phase. Finally, the third term covers a transition from one phase to the final phase, in which case the customer has arrived.