1. TECHNOLOGY AS NORMS

1.1 The Conditional Normativity of Knowledge Systems

Technology has always had inherent normative consequences. Human artefacts (products of human activity such as soap, television sets, computers, and mobile phones) have a decisive influence on people’s behaviour and social relations, each with consequences in different respects. With the intended use of a product, normative instructions follow on how to behave in different situations. It has always been like this. However, with the emergence of digital technology, it has become highly apparent. We talk about digital technology as a disruptive technology.Footnote 1 It breaks with previous ways of looking at and doing things. Through digital technology, technical norms have gained an increasingly strong inherent normativity, represented not least by artificial intelligence (AI) and the use of algorithms.

Norms are built on expectations. These expectations do not have to emanate from a specific norm-sender. They can arise out of the underlying rationality of a system. Norms are invisible and unknown before they are articulated within professional work. Knowledge systems give rise to a sort of conditional normativity. If one wants to accomplish a certain task, one has to figure out the normative recommendations that follow from the knowledge of how the specific system is constructed. The rationality of the system can be seen as the normative core from which different normative claims (norms) are deduced depending on the conditions that apply in a particular case. Technical prescriptions from natural or other sciences are based on conditional propositions/clauses: if a given condition is present, then a given effect will occur. For instance, in the domain of aviation maintenance, conditional propositions have the character of general clauses. Knowledge in these cases has the same function in interpreting and understanding technical norms as the preparatory work of a law has for lawyers when they seek to understand the content of a law.Footnote 2 The difference lies in the fact that the conditions for the legal proposition/clause are decided by the rule’s open normative property, while the normative standpoints in technical and similar knowledge systems are derived from the causal (or causally oriented) relations of the knowledge system.

These systems are knowledge systems, not explicitly normative systems. Nonetheless, they play a crucial normative role, exploring the “objective” knowledge in order to arrive at the most rational way of behaving in a certain situation. It is a question of going from a purely descriptive activity to making normative prescriptions.

Normativity is pronounced even in systems that are associated with the laws of nature. Phenomena like gravity, thermodynamics, photosynthesis, etc. give rise to knowledge systems about how nature operates in various areas. By understanding how these phenomena operate, one can also give advice about how to act to attain various ends.Footnote 3 These norms are invisible and unknown before they are articulated through science. Systems thus give rise to a sort of conditional normativity. If one wants to accomplish something, one has to pay attention to the laws of nature that determine how one should act to attain a certain goal or secure a given value. Algorithms and AI, by contrast, are normative in themselves. They are, as is every norm, made for certain purposes. However, these purposes are not openly declared or transparent. Algorithms provide the instructions for almost any AI system that one can think of.

1.2 Norms as Freestanding Imperatives

AI bears within it the potential for producing free-standing imperatives.Footnote 4 A neural network or artificial neural network is a collective name for a number of self-learning algorithms that attempt to mimic the function of biological neural networks (such as the brain). Algorithms that emulate neural networks can often cope with problems that are difficult to solve with conventional computer-science methods. It is the unpredictable solutions to problems and the self-programming aspect of deep-learning techniques that raise new questions for the law to consider.Footnote 5 The problem of emergence is the problem of who will be held responsible for what the code does.Footnote 6 Examples of applications are data mining, pattern recognition, signal processing, control technology, computer games, forecasts, self-organization, medical diagnosis, non-linear optimization, optimization problems with many constraints (e.g. scheduling), and more. These applications make use of machine learning. In machine learning, an algorithm is a set of rules or instructions given to an AI program, neural network, or other machine to help it to learn on its own. Thus, in these situations, AI via algorithms produces its own norms; normativity becomes an integral part of technology. In the paradigmatic shift from mechanics to digital techniques, the normativity changes from being connected to the use of technology to becoming an integral part of the technology itself.Footnote 7

A neural network must be trained before it can be used for a given purpose. Most neural networks therefore work in two phases: first a learning phase, in which the network is trained on the task to be performed, and then an application phase, in which the network only uses what it has learned. It is also possible to let the network continue to learn while it is in use. Neural networks have a wide range of applications and new applications are constantly being created.
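The two phases described above can be sketched with a single artificial neuron (a perceptron). This is only a minimal illustration under stated assumptions, not a description of any particular system: the function names `train` and `predict`, the toy task (the logical AND of two inputs), and the parameter values are all hypothetical.

```python
# Minimal sketch of a learning phase followed by an application phase.
# All names, data, and parameter values are illustrative assumptions.


def predict(weights, bias, inputs):
    """Application phase: use the learned parameters without changing them."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(samples, epochs=20, learning_rate=1):
    """Learning phase: nudge the parameters whenever a prediction is wrong."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Toy task (an assumption for illustration): the logical AND of two inputs.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

# Phase 1: learning.
weights, bias = train(AND_SAMPLES)

# Phase 2: application. The network only uses what it has learned.
outputs = [predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES]
print(outputs)
```

Letting the network "continue to learn even when it is used" would correspond to calling the update step inside the application loop as well, rather than freezing `weights` and `bias` after training.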

Algorithms, as norms, affect people in their everyday lives without people having the democratic opportunity to influence them.Footnote 8 Being products of the ongoing digital transformation of society, they have far-reaching, yet under-researched consequences for what we do, how we do it, and what can be done. This revolutionary technology, which has taken hold of society in recent decades, has been characterized as “disruptive,”Footnote 9 meaning that it makes earlier modes of producing and living outdated. This means that existing norms are affected. In recent decades, digitization has emerged as a new revolutionary technology.Footnote 10 We face a digital transformation with huge implications, including for the relationship between states and individuals, and it will drive changes in today’s laws, institutions, and values.

The accumulated knowledge about algorithms has advanced and developed into AI.Footnote 11 Algorithms, together with big data, protocols/rules, interfaces, and service default settings, called platforms, encode social values into the digital architecture that surrounds us and co-create social and cultural patterns of action.Footnote 12 It is only through the capacity to see and comprehend expectations emanating from technological systems that algorithms as norms become visible and it becomes possible to understand them as part of the societal consequences of digitization.Footnote 13 Knowledge about technological development alone, however, does not tell us anything about societal changes; neither do the algorithms and AI. The missing link is the concept of social norms. The direct normative effect of algorithms is a question of technical instructions. However, the interesting aspect from a social-science perspective is to locate and understand the societal implications of AI. There is a need for the development of the sociology of algorithmsFootnote 14 in the same way as the sociology of law as a discipline was invented at the beginning of the last century in order to complement and compete with legal dogmatics regarding knowledge about functions and consequences.Footnote 15

2. AI AS NORMS

2.1 Different Orders of Normativity: The Algo Norms

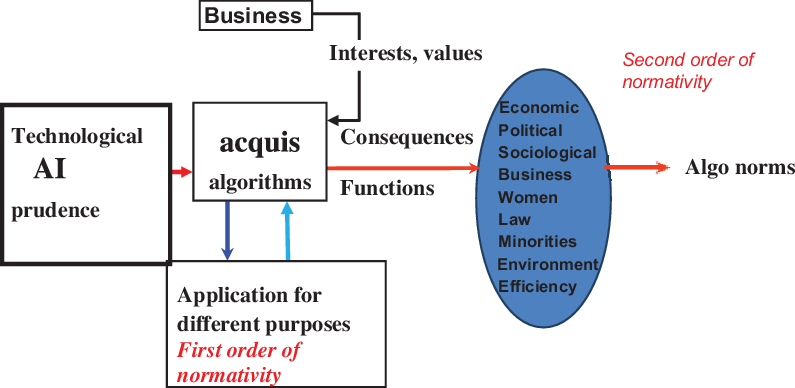

There is a crucial difference between algorithms in a technical sense and algorithms from a social-science perspective. Both are normative but cover different fields of knowledge. Legal norms offer a parallel. They can be understood from a strict legal point of view, telling us about the correct interpretation and application of the legal rule as an instruction on how to act or how to judge in a certain situation. However, from a social-science perspective, legal norms also have a broader scope. Legal norms are not neutral; they affect societal functions and come with their own consequences in society. My claim is that there are different orders of normativity: the first related to the algorithm as a technical instruction and the second to the consequences springing from the first order. To illustrate using an example from the legal field: it is one thing to know when a person should be sentenced to imprisonment and another to understand what this means for society, the perpetrator, or the victims of the crime. These are distinct spheres of knowledge, which require their own methodological approaches. The normativity layers associated with algorithms are special, and understanding the second order calls for a separate concept, which I call algo norms. They are an indirect effect of the algorithms and it is this indirect effect that is of interest from a sociology-of-law perspective.Footnote 16 Thus, algo norms are those norms related to the societal consequences that follow from the use of algorithms in different respects.

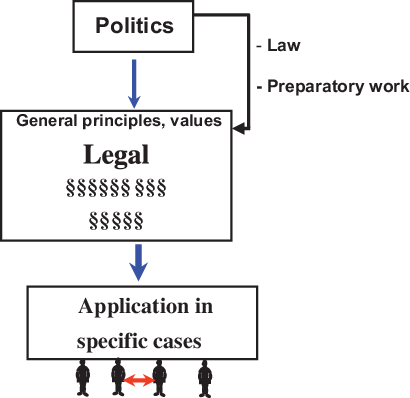

Let me explain using a parallel between law and AI. In graphical terms, the similarities and differences between legal norms and algo norms could be illustrated in the following way. Starting with the legal-knowledge field shown in Figure 1 might give an understanding.

Figure 1 Legal decision-making

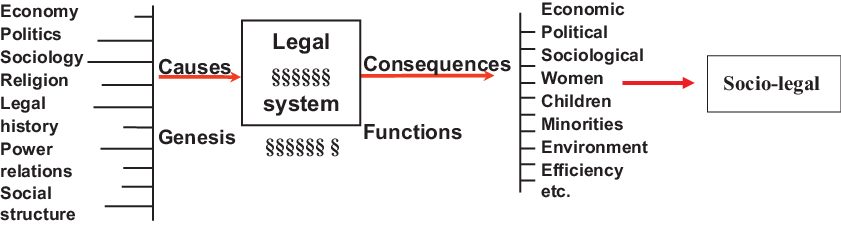

Legal dogmatics can be illustrated from a vertical perspective, since it, as an ideal type, is built on the logics of subsumption and deduction.Footnote 17 This process is a question of the technical application of normative standpoints in law on factual situations, which may require more or less sophisticated reasoning. We can also extend the legal-knowledge field to include socio-legal aspects covering the causes and consequences of law, namely looking at the genesis and the functions of law, which is the focus for sociology of lawFootnote 18 (see Figure 2).

Figure 2 Sociology-of-law perspective on law and the legal system

This horizontal problem area represents something other than the legal-dogmatics knowledge field, although it is of great relevance for understanding law. It is simply another perspective on law, one that is not regarded as relevant for legal dogmatics. Sociology of law has not so far invented a proper concept that covers these normativities related to the genesis and consequences of law when it is applied to and confronted with societal realities. The concept of law in action is not adequate, nor is the concept of living law. Perhaps the concept of socio-legal norms is the most appropriate. Maybe the parallel to the digital world can help us. If we translate the two illustrations to the algo norm context, we get Figure 3.

Figure 3 Different orders of normativity

Algo norms can be regarded as a subcategory of technical norms. The theoretical perspective lying behind the concept of algo norms builds on norm-science theory and method.Footnote 19 Norm science is about identifying and understanding the driving forces behind human action on a societal level.Footnote 20 The study of norms tends to be divided into two perspectives: one descriptive and one injunctive.Footnote 21 Banakar also uses the parallel terminology of external and internal perspectives on norms. However, there is a third possible understanding of the norm concept that is ignored in the social sciences, and that is the analytical perspective.Footnote 22 In this, the norm concept and the empirical study of norms help us to understand the causalities on a collective level that lie behind human behaviour. Through the study of norms, human motives for collective action can be captured.Footnote 23 This approach goes beyond Max Weber’s Verstehen method. Weber was a methodological individualist, which means that, on his view, we can only understand social phenomena and historical processes by studying how individuals experience the world and what individuals find meaningful.Footnote 24 By dissecting existing norms in a descriptive way, it is possible to get hold of the preferences and motives that lie behind human behaviour on a collective level.

To fully grasp the social-norm concept, it is helpful to distinguish between three different ontological levels: a normative, a sociological, and a sociopsychological.Footnote 25 Each has its own epistemological implications.Footnote 26 The identification of algo norms as the normative outcome of the algorithm has its own challenges, since the normativity is an indirect, external effect and not an explicit one. The primary objective of algorithms is not to produce their own set of norms. These norms are hidden effects that need to be made visible by studying their societal effects. The normative dimension is hidden behind cognitively based instructions on how to act.Footnote 27

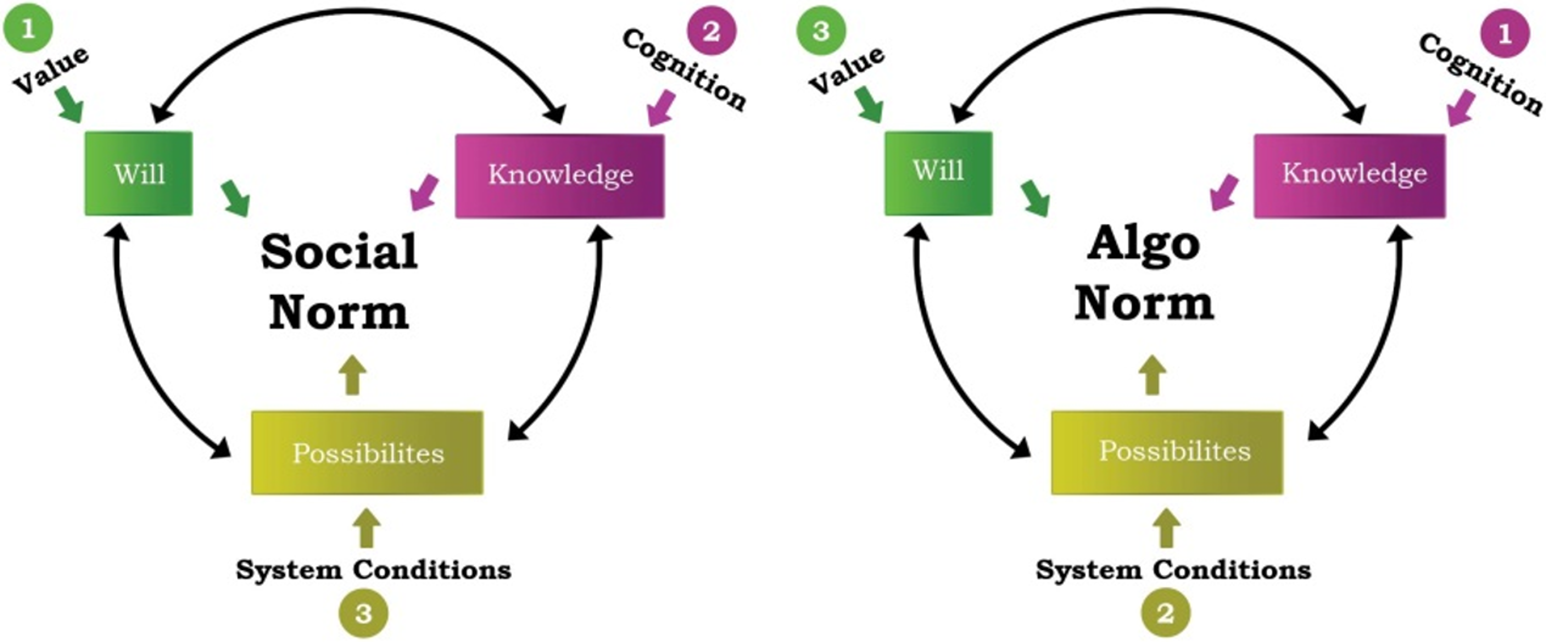

In order to help us to dissect the norm in terms of motives, one has to distinguish three dimensions of the norm: (1) will and values; (2) knowledge and cognition; and (3) systems and possibilities.Footnote 28 Typically, social norms are initiated by human will or values, which require knowledge for implementation, including cognitive references to the norm’s addressees. The outcome of a norm application is finally dependent on the possibilities of carrying out what the norm prescribes. Systems that humans have created for various purposes set the limits of these possibilities.

The interesting thing about algo norms, compared to social norms,Footnote 29 is that their genesis is related to new knowledge (digitization), which generates its own systems with different purposes. These systems in turn influence will and values. Thus, the algo norms move from dimension (2) to (3) to (1), as illustrated in the following figures. The difference between social and algo norms is important because, wherever systemic factors are the independent variables, the scope for human will and values is constrained, which may become a threat to democracy. See Figure 4, showing the difference between social norms and algo norms.

Figure 4 Comparisons between social norms and algo norms

Algo norms are thus something that emerges when the algorithms meet and collide with the surrounding society. Different consequences occur when the technological solutions and design are applied in reality; some can be seen as intended, but many are unintended. They are external effects of the algorithms. There is a kind of two-step causality. First, the algorithm, as a technical instruction, performs a certain service. This service is, in turn, meant to fulfil a specific purpose, which goes beyond the mere technical aspects of algorithms. The relationship between the two steps is often not visible.

Algorithms as norms are unique. The normative consequences are embedded in the technology and determined by the design of the AI. The outcome is an empirical question.Footnote 30 They are, from the perspective of the addressee, structurally conditioned and they cannot be avoided. To apply to algorithms the first law of technology formulated by Melvin Kranzberg,Footnote 31 the historian of technology: they are neither good nor bad; nor are they neutral. What he suggests is that technology’s interaction with the social ecology is such that technical developments frequently have environmental, social, and human consequences that go far beyond the immediate purposes of the technical devices and practices themselves, and the same technology can have quite different results when introduced into different contexts or under different circumstances.

In this way, Kranzberg confirms the idea of a first and second order of normativity. The first is precise and about techniques, while the other is diversified and multi-normative. As Adrian Mackenzie has further observed: “[a]n algorithm selects and reinforces one ordering at the expense of others,”Footnote 32 often in ways that were not intended or possible to foresee. Algo norms, therefore, are norms to which people are subordinate—but in ways that largely lie outside their control. Algo norms are a question neither of free will, nor of coercion. The design of the technique, and thereby its normative implications, is made by people with technical expertise. From this perspective, the engineers become our new norm setters, at least as long as AI is logical and in the hands of humans and not determined by the technology as such.

From a social-science point of view, algo norms are problematic in two interconnected ways. One is the democratic deficit that arises when norms in society are introduced and decided upon by technicians or by the system of algorithms itself. They are neither the result of political decision-making in a democratic order nor an outcome of social or public discourses. Sociology of law’s knowledge interest is about how decisions are taken and with what normative implications. Even with the best intentions to create algorithms that make life better for people, the values and prejudices of those who feed the algorithm with input data and design the code will affect how the algorithms are constructed.Footnote 33 Furthermore, algorithms to an increasing extent reproduce themselves. The opportunities for public accountability shrink and citizens run the risk of increasingly becoming captives of technical fixes over which they have little, if any, control.

The second, related problem concerns manipulation in different respects. One is about the market. Algo norms challenge the ideal role of the market as a tool for the consumer to find goods and services. They confront us with a paradox. Our choices are determined by the algorithms and by those who have programmed them to figure out what we like best and therefore seem to want more of. We face a situation in which the norm provider determines the content. Whenever one visits the Internet and buys products, listens to the news, uses social media, or browses the web, algorithms decide what one finds. This is an in-built effect of the technique; indeed, it is its raison d’être. The market is in itself an algorithm (supply and demand meet in a computer system), but the actors remain mostly human beings; they interact with the market via computer screen, keyboard, and mouse.Footnote 34 However, the algo norms are so seductive that we do not even notice that information is filtered in ways that affect us. Not even the programmers are aware of what is going on.Footnote 35 Drawing on the results of personalized searches, a website algorithm selectively guesses what information a user would like to have and encapsulates the user in a filter bubble.Footnote 36 As a result, users become separated from information that disagrees with their viewpoints, effectively isolating them in their own cultural or ideological bubbles.Footnote 37 The choices made by these algorithms are not transparent and it is difficult to see how they affect our own and other citizens’ worldviews and/or preferences.
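The feedback loop behind a filter bubble can be made concrete with a toy simulation. The sketch below is an assumption-laden illustration, not a model of any real platform: the topic list, the `build_feed` and `simulate` functions, and the proportional slot-allocation rule are all hypothetical. A feed of four slots is filled in proportion to past clicks, and the simulated user always clicks the same favourite topic when it is shown.

```python
from fractions import Fraction

# Hypothetical topics; the order is used only to break ties.
TOPICS = ["news", "sports", "culture", "science"]


def build_feed(scores, slots=4):
    """Fill the feed slot by slot, favouring topics with more past clicks.

    Each slot goes to the topic with the highest score divided by
    (slots already given to that topic + 1), a simple proportional rule.
    """
    allocated = {topic: 0 for topic in TOPICS}
    feed = []
    for _ in range(slots):
        best = max(TOPICS,
                   key=lambda t: Fraction(scores[t], allocated[t] + 1))
        allocated[best] += 1
        feed.append(best)
    return feed


def simulate(favourite="sports", rounds=6):
    """Return the number of distinct topics shown in each round."""
    scores = {topic: 1 for topic in TOPICS}  # no preference known at first
    diversity = []
    for _ in range(rounds):
        feed = build_feed(scores)
        diversity.append(len(set(feed)))
        # The user clicks the favourite topic if shown, else the first item.
        clicked = favourite if favourite in feed else feed[0]
        scores[clicked] += 1  # the click feeds back into the next feed
    return diversity


diversity = simulate()
print(diversity)
```

Under these assumptions the number of distinct topics in the feed shrinks from four to one within a few rounds, although neither the user nor the code ever "decided" to exclude the other topics. The narrowing is a side effect of optimizing for past clicks.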

2.2 Methodological Implications of Algo Norms

Previous social-science research on digital development has been primarily concentrated on its technical innovations and its contributions to the emergence of new business models. This includes, for example, technological advances related to Hypertext Transfer Protocol (HTTP), peer-to-peer computing (P2P), and blockchain technology diffusion.Footnote 38 These digital technologies have given rise to revolutionary changes regarding economic and social institutions and norms.Footnote 39 The literature on algorithms is quite extensive.Footnote 40 Most publications are in the science and technology fields, but there is also an increasing number within the social sciences, economics, and the humanities.Footnote 41 In 2019, the AI Sustainability CentreFootnote 42 published an inventory of the state of knowledge of ethical, social, and legal challenges associated with AI.Footnote 43 The Social Media Collective at Microsoft Research New England has produced a relevant compilation of the literature in the field.Footnote 44 There is a nascent sociological literature on algorithms.Footnote 45 The sociology-of-law study of algorithms will expand the social-science-research frontier by exploring from a normative perspective the societal effects of the new technology. The social sciences have so far looked at digitization primarily from an economic point of view and most of this work has been predominantly descriptive. One example of an attempt to use algorithms as a scientific tool for studying norms is an article by Sara Morics.Footnote 46

We have no systematic knowledge about how algorithms and AI affect us, namely their effects on individuals, on society at large, and on law. As discussed above, digitization entails hidden normative effects on society and law. AI and algo norms as research objects are moving targets. To explore an ongoing process of change in real time, a novel scientific approach that relates to advanced practice has to be initiated. The research front is not located at the researchers’ desk, but in practice. As a result, more information is available on the websites of different commercial actors and among writers, journalists, and bloggers than in the scientific literature.Footnote 47 This might have a negative effect on the scientific image of the research. In this context, a researcher’s task is to discover and articulate advanced practice, validate it, and scientifically systematize it in such a way that it can be communicated and advanced.Footnote 48 The validity of the information given is therefore especially sensitive from a methodological point of view.

Another problem in relation to this kind of study is the empirical approach. You cannot expect to gain knowledge by conducting empirical studies. They mirror either the old, mechanical solutions to societal problems related to the industrial society or a reality that has changed before the scientific results are published.Footnote 49 Due to the cyclical development of society, lessons can be learned from history, nota bene from that part of history that corresponds to the similar stage of societal development.Footnote 50 That does not exclude that using the technology for certain purposes requires social engineering that might gain from existing knowledge within social sciences.Footnote 51 Parallels may be drawn and experiences made from similar situations related to societal development.Footnote 52

Furthermore, there are problems in relation to the identification of algo norms. One is the practical problem of finding the algorithms in use.Footnote 53 Companies are not very willing to display which algorithms they apply and how they look, and this is not necessarily a good thing.Footnote 54 The other, somewhat more complicated problem is finding and analyzing the algo norms. That is foremost a theoretical challenge. Algo norms can only be seen by their consequencesFootnote 55 and it is only when a pattern emerges that it becomes possible to talk about the existence of a norm. Here, big-data analysis comes into play.Footnote 56 With a sociological parallel, the algorithms can, like norms, be regarded as the triggering factor,Footnote 57 thus setting in motion certain activities or even laying the foundation for a certain business model. The researcher has to reconstruct the content of the algo norm by using the end result of the process as a starting point and, from there, disentangling underlying factors.Footnote 58 When the end result displays a pattern among big data, there are conditions for identifying algo norms.
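The reconstruction step described above, starting from outcomes and looking for a pattern, can be sketched as a simple frequency analysis. This is only an illustration under stated assumptions: the function `candidate_norms`, the thresholds `min_share` and `min_count`, and the observation data are all hypothetical, and a recurring pattern is at most an indicator of a possible algo norm, not proof of one.

```python
from collections import Counter, defaultdict


def candidate_norms(observations, min_share=0.8, min_count=5):
    """Flag contexts in which one action clearly dominates the outcomes.

    observations: iterable of (context, action) pairs, i.e. the end
    results of some algorithm-mediated process.
    Returns {context: (dominant_action, share)} for contexts with at
    least `min_count` observations where the most common action accounts
    for at least `min_share` of them.
    """
    by_context = defaultdict(Counter)
    for context, action in observations:
        by_context[context][action] += 1

    candidates = {}
    for context, counts in by_context.items():
        total = sum(counts.values())
        action, count = counts.most_common(1)[0]
        share = count / total
        if total >= min_count and share >= min_share:
            candidates[context] = (action, share)
    return candidates


# Hypothetical outcome data, invented for illustration only.
observations = (
    [("checkout", "pay_by_app")] * 9
    + [("checkout", "pay_cash")] * 1
    + [("news_reading", "follow_feed")] * 3
    + [("news_reading", "search_directly")] * 3
)

result = candidate_norms(observations)
print(result)
```

In this invented data set only the "checkout" context shows a dominant pattern. The researcher's actual work begins after such a pattern is found: disentangling the underlying factors and relating them to the algorithms and the context they create.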

This analysis does not require a full or correct understanding of the algorithm. As in sociology of law, you do not need to have full insight into the legal construction and interpretation of the law in order to be able to identify and map the law’s societal implications. Compared to that for legal norms, the logic for understanding algo norms is inverted. In the legal sphere, the search starts with knowledge about what constitutes the legal norm in terms of prerequisites and/or precedents. You are expected from there to be able to draw conclusions about what kind of actions the legal norm covers in reality. To understand algo norms, you have to go the other way around. You start with the actions, namely the outcome of the algorithms, and, from there, you search for indicators regarding underlying motives among the actors and how these relate to the algorithms and the context they create.

Thus, big data and pattern recognition become important methodological tools. Here, the digital technique using algorithms may be helpful when tracing the algo norms. Surveys via a web panel make it possible to identify normative changes in general, for instance in the transition from money to information or from customer to user, or the changes that follow from the shift from transactions based on the industrial money economy to the sharing economy related to the network society or to open source.Footnote 59 Pattern recognition becomes an important part of the research. Machine learning becomes a tool. This kind of research using big data depends on the availability of exceptionally large computational resources that necessitate similarly substantial energy consumption. As a result, these models are costly to train and develop due to the cost of hardware and electricity or cloud-computing time. This makes it increasingly challenging for people working in academia to contribute to research, something that further underlines the need for studying advanced practice.Footnote 60

3. NORMS AND LAW

3.1 Trial and Error

Legal regulation requires knowledge about what is going to be regulated. This makes the normative problems in relation to AI seem purely philosophical; there is no firm answer. We can only wait and see after we have gained some experience.Footnote 61 This turns the problems into empirical questions and the answers into socio-legal ones: the advanced practice will provide us with different tentative practical solutions, which can lay the foundation for normative assessments.

AI is used in relation to decision-making, learning, and performing tasks based on data, where the data are often complex, ambivalent, and difficult to interpret. Areas that require forms of stability, clear goals, measurability, and long-term vision can be expected to use AI in the future, such as fully or partially automated banks (both private and national banks) as well as automated diagnostic and treatment robots (e.g. for diabetics). Also, some state administrations such as customs, police, fire brigades, and roads and transport administrations could be more data-driven and use AI to make better decisions when optimizing, for example, budgets and efforts for maintenance and expansion, and when determining what kind of skills and measures need to be built up to achieve political goals.

Common denominators regarding the regulation of AI are the uncertainty that prevails and the ambivalence characterizing policies. Therefore, regulatory problems are in these cases mostly referred to and discussed in terms of ethics.Footnote 62 AI represents a new regulatory phenomenon. In the transition from the industrial era built on mechanics to a digital society, the related problems change. The legal principles will not be different, but the substratum (the reality) changes, which makes it necessary to adjust and reformulate the legal regulation. Note the comparison below between the fatal accident with the first automobile in the late nineteenth century and the first fatal accidents with autonomous cars 120 years later. The difference is the driver or the lack of driver in the latter case, but the legal problems are otherwise the same. The new technology might give rise to a paradigmatic shift in regulation strategies. It seems to follow cycles of societal development. In the industrial society, the demand for regulation was mainly due to the external effects of production, distribution, and consumption. Overproduction leads to, among other things, overconsumption and energy wastefulness, against which the political system, via the law, has intervened. In this situation, regulation is built on compromises between contradictory goals. One wants to have one’s cake and eat it too. This kind of regulation necessitates public authorities for implementation and controlling purposes, which characterizes the industrial world of today.

In the digital era, the problems related to societal development have changed in character. The new technology opens up hitherto unknown possibilities. However, you can never know anything about the future. We tend to think in straight lines. When we think about the extent to which the world will change in the twenty-first century, we just take the twentieth-century progress and add it to the year 2000. Linear thinking comes most naturally, despite the fact that we should be thinking exponentially.Footnote 63 According to McKinsey Global Institute, compared to the Industrial Revolution, the AI revolution is “happening ten times faster and at 300 times the scale, or roughly 3,000 times the impact.”Footnote 64 However, exponential growth is not totally smooth and uniform. Kurzweil explains that progress happens in “S-curves.”Footnote 65 Our own experience makes us blind to the future.Footnote 66

These factors block us from seeing that a totally new horizon is coming up. New phenomena emerge incrementally. In this situation, mankind has to use the strategy that has always been used: trial and error. The regulation problem turns into a question of either/or instead of both/and. Regulation is no longer aimed at external effects. Rather, a choice has to be made between different application areas. For what purposes should we accept the use of the new technique?

Surveillance may be a case in point. Facial recognition and algorithm-based monitoring of deviant behaviour are common today in both open and closed ecosystems, for example privately owned cameras that recognize family and friends but alert to unknown visitors, and the cameras that customs and police use in public places to monitor and in some cases verify identity. However, the technology gives rise to various opportunities. It can be used for different purposes.

In the digital era, societal problems are more a matter of choice between future options than of compromises within the framework of one alternative. With surveillance as an example, we stand before a qualitatively new regulatory phenomenon as a result of AI: the Internet itself. The basic principle for the Internet is that it should be open and free to everyone. Lawrence Lessig talks about “the norm of open code.”Footnote 67 This view of the use of the Internet competes with another use, the one that is about the surveillance and limitation of the Internet for different purposes. The technology development that lies behind the emergence of what can be called the information society tends to be adopted and used in the first stage within the framework of the earlier logic and power centres of society. Legal regulation, both on a national level (the Constitution) and on an international level, such as the European Convention on Human Rights (ECHR), protects against breaches of privacy by the state and public authorities. These rules are not applicable in relation to violations by large private corporations, which are those primarily producing threats from the AI perspective.

The main argument for introducing different kinds of surveillance is security for ordinary people. There are many tools in daily life that help the individual to protect property and personal integrity. This is regarded as a positive effect of digital technological development. However, when these means get into the wrong hands, they can turn into something evil. To a large extent, that is what is taking place.

In the industrial era, development is a question of prolongation within the same technology: mechanics. We can talk about many small incremental changes instead of a qualitative shift. The development and refinement of this technology created external effects, which were addressed using one and the same governance strategy, namely intervening law and controlling public authorities. When mechanical technology characterized by physical production turns into a digital technique working with virtual reality, there is a lack of reference points. This becomes a problem in relation to regulation, where adequate knowledge regarding what is going to be regulated is a prerequisite.

In this situation, a process of trial and error appears. Since we do not really know the potential and consequences of new technology, it has to be developed in connection with ethics. The use of new technology appears to be not a practical issue but a value problem. Discourses based on values become the forerunner to legal regulation. The ethical and political problem becomes a question of deciding where the limits shall be drawn for different activities. What kind of outcome will we accept? This puts us in a dilemma, since we lack experience. In the industrial era, external effects function as a triggering factor for intervention, a strategy built on evaluations ex post, which is problematic to use in relation to phenomena such as AI and algorithms. We do not yet know where we stand. This is reflected in a recently conducted survey called “AI through the Eyes of the Consumers in the Nordic Countries.”Footnote 68 On the question, “To what extent do you think the following sectors would benefit from utilizing AI more?” the respondents were split. Half agreed partly or to a large extent, while the other half disagreed. The result was more or less the same on the question, “To what extent do you think an AI would make better/worse and more/less unbiased decisions than a human?” Around half of the respondents thought that the AI would take a decision that was just as good/bad or better, and in some cases even a much better decision. The strongest support for AI is in areas such as industry, banking, accounting, and public government, while, in roles based on human-to-human relations, such as nurses, doctors, and lawyers, the trust in the human is greater.

3.2 Legal Implications

New technology in general emerges without political decisions and needs no support from the legal system.Footnote 69 Quite the opposite: it often demands the deregulation of present legislation. The growing digital technology is a case in point.Footnote 70 It is self-promoting in a way that might collide with laws in the affected legal field. The state can stimulate and promote certain solutions by setting up special zones for empirical testing and development. For example, the Japanese government has, in the field of AI, initiated a kind of living lab, called Tokku.Footnote 71 In the field of autonomous vehicles, several EU countries have endorsed similar experimentation. Sweden has sponsored the world’s largest-scale autonomous-driving pilot project, in which self-driving cars use public roads in everyday driving conditions.

Law becomes relevant primarily for preventive reasons, in relation to negative aspects of new technology. No major regulatory scheme for AI existsFootnote 72 and one will probably not arise. When a need for legislative action and a law for AI is expressed, it is primarily in relation to injuries caused by AI.Footnote 73 The new technique, with its changes in the substratum, will be subsumed under existing legal paradigms and absorbed into prevailing legal principles and rules.Footnote 74 Karl Renner pointed out, in his study of the institutions of private property and its social functions, that the legal institutes might be combined differently during the specific phases of legal development from the Roman-law era to the modern-law era. The first step in such a transitional process is to make analogies.Footnote 75 The question to be asked is: “what is similar” to the issue at stake for legal decision-making?

Common law has an advantage compared to statutory law, since it is based on judge-made law and its legal doctrines are established by judicial precedents rather than by statutes. The system is also known as case law. This leads to common-law legal systems being confronted by new societal phenomena much earlier than statutory-based legal systems, such as those in continental Europe.Footnote 76 In statutory legal systems, a legal issue has to pass the legislative body, namely the political system. This means that statutory legal systems are characterized by more inertia than common-law systems.Footnote 77 Judges have to take a stand in a legal matter even if the problem is unknown and without precedent, while it takes longer for politicians, who have different views and opinions, to come to a decision, especially in democratic processes. It takes some time before they formulate their political will, which requires experience of the new phenomenon.

AI and algorithms give rise to interpretation problems and legal-policy considerations when the substrata of law undergo great changes as a consequence of the new conditions for regulation.Footnote 78 These new conditions that the judge and/or the legislator face are primarily an effect of three factors:Footnote 79 the autonomous function of AI, the problem of complexity and lack of transparency, and finally the need for huge amounts of data, namely big data. I will just point out some areas that have already been subject to legal regulation due to AI:Footnote 80 (1) data protection; (2) security and liability rules; (3) robots; (4) antitrust law; and (5) consumer protection. These are areas in which the problems already exist but, under the influence of digitization, are scaled up and therefore require certain precautionary measures. Furthermore, I will comment on discrimination, a phenomenon that has long existed but that, as an indirect effect of machine learning and big data, now has causes other than before. Finally, I will comment on one particular area, namely politics, in order to illuminate how AI and algo norms change the rules of the game in certain parts of society.

3.2.1 Data Protection

The large amounts of data needed for machine learning are often based on personal data, which have to be treated according to data-protection legislation.Footnote 81 Here, we will find a need for balancing between fundamental rights and principles for processing data and rules for transparency, on the one hand, and the acceptance of a huge amount of data as a valuable resource, which can be used in a flexible way, on the other. According to Article 22 of the GDPR,Footnote 82 the data subject shall have the right not to be subject to a decision based solely on automated processing, including profiling, which produces legal effects concerning him or her or similarly significantly affects him or her.

3.2.2 Security and Liability Rules

As an example of security legislation, the regulation of autonomous cars can be mentioned. Experts have defined five levels in the evolution of autonomous driving. Each level describes the extent to which a car takes over tasks and responsibilities from its driver, and how the car and driver interact. Level 1 is driver assistance. Level 2 provides an automated driving system monitoring the driving environment. Level 3 is “highly automated driving,” Level 4 is “fully automated driving,” and Level 5 is “full automation.” The last two levels are still in the testing phase. Today, the driver-assistance systems of Level 1 are very common. The biggest leap comes at Level 3, from which point the vehicle itself controls all monitoring of the environment. The driver’s attention is still critical at this level, but the driver can disengage from “safety-critical” functions such as braking and leave them to the technology when conditions are safe. Many current Level-3 vehicles require no human attention on the road at speeds under 37 miles per hour. At Levels 4 and 5, the vehicle is capable of steering, braking, accelerating, and monitoring the vehicle and roadway, as well as responding to events and determining when to change lanes, turn, and use signals.
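The level taxonomy described above can be written down as a small data structure. The following is a minimal sketch in Python, assuming only the five levels and the Level 3 threshold as stated in the text. The names `AutomationLevel`, `vehicle_monitors_environment`, and `in_testing_phase` are hypothetical labels introduced for illustration; they are not taken from any standard or statute.

```python
from enum import IntEnum


class AutomationLevel(IntEnum):
    """The five levels of autonomous driving as named in the text."""
    DRIVER_ASSISTANCE = 1
    AUTOMATED_DRIVING_SYSTEM = 2  # hypothetical label for Level 2
    HIGHLY_AUTOMATED = 3
    FULLY_AUTOMATED = 4
    FULL_AUTOMATION = 5


def vehicle_monitors_environment(level: AutomationLevel) -> bool:
    """From Level 3 upward, the vehicle itself monitors the environment."""
    return level >= AutomationLevel.HIGHLY_AUTOMATED


def in_testing_phase(level: AutomationLevel) -> bool:
    """The text describes the last two levels as still being tested."""
    return level >= AutomationLevel.FULLY_AUTOMATED


# Print one row per level: number, name, and the two derived properties.
for level in AutomationLevel:
    print(level.value, level.name,
          vehicle_monitors_environment(level), in_testing_phase(level))
```

A classification of this kind makes explicit the point at which the monitoring task shifts from the driver to the vehicle, which is where questions of responsibility become pressing.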

The race by automakers and technology firms to develop self-driving cars has been fuelled by the belief that computers can operate a vehicle more safely than human drivers can. But that view has partly been called into question after two fatal accidents involving self-driving cars. In the first case, the driver of a Tesla Model S electric sedan was killed in an accident while the car was in self-driving mode. Federal US regulators are in the process of setting guidelines for autonomous vehicles. In a statement, the National Highway Traffic Safety Administration said that reports indicated that the crash occurred when a tractor trailer made a left turn in front of the Tesla and the car failed to apply the brakes. The second case was a pedestrian fatality involving a self-driving (autonomous) car, following a collision that occurred late in the evening of 18 March 2018. A woman was pushing a bicycle across a four-lane road in Tempe, Arizona, US, when she was struck by an Uber test vehicle, which was operating in self-drive mode with a human safety-backup driver sitting in the driving seat.

These cases bring safety regulation to the fore in direct connection with these specific AI incidents. The second case has also raised the question of liability. Who is responsible if you remove the driver? Is it the producer, those who provide the training data serving as input data for the vehicle, the person who uses the car, the driver, or is it the owner of the vehicle? As of April 2019, 33 states in the US have enacted legislation pertaining to autonomous vehicles.Footnote 83 In Sweden, a law that applied from 1 July 2019 introduced new rules for drivers, owners, and legal persons on the prerequisites for criminal liability. Among other things, there is a discussion of introducing vicarious liability and strict liability in relation to AI. Since neither statutory law nor common law grants AI the status of a legal person, AI cannot serve as a principal or agent.Footnote 84

One can compare these fatal accidents with that of Bridget Driscoll, who, in the UK in 1896, was the first pedestrian to be killed by an automobile.Footnote 85 The situations are similar to each other. In 1896, it was a question of introducing a new means of transportation based on inventions in mechanics, which can be compared to the Uber accident, as that was related to the introduction of a new digital innovation to a means of transportation. The crash in Arizona seemed just as startling—and perhaps as likely, given the pioneering technology of autonomous driving and Uber’s reputation for pushing the limits—as the car crash that killed Driscoll in south-east London on a summer’s day more than a century ago. This case can serve to illustrate how the technique affects society. As in many motor-vehicle accidents, there were conflicting accounts of what happened on 17 August 1896.Footnote 86 Testimony focused on the vehicle’s speed, the driver’s abilities, and whether the public had been given enough warning about the demonstration vehicles.Footnote 87 To conclude, there are lessons to be learned from similar situations and corresponding phases in the history of societal development.

3.2.3 Robots

Robots span a broad range of applications, from smart assistants on mobile phones to eventual alternatives to certain health-care professionals and therapists. They can be autonomous or semi-autonomous, and range from humanoids to industrial robots, medical operating robots, patient-assist robots, therapy robots, collectively programmed drones, and even microscopic nanorobots. By mimicking a lifelike appearance or automating movements, a robot is using AI technology. Autonomous things are expected to proliferate in the coming decade, with home robotics and the autonomous car as some of the main drivers. Robots face the same kind of problems as autonomous cars. They are constructed for certain purposes and they operate “on their own,” that is, without a human being involved except in the construction and instructions. This means that responsibility for actions becomes important in the same way as it does for autonomous vehicles. Problems with robots are related to their development and training. Big data comes into play in order to teach and train the robot everything from natural language processing (NLP) tasks to movements of different kinds.Footnote 88 This aspect of robotics is not helped by legal regulation. It is when the robot is in action that problems in need of legal solutions arise.Footnote 89 The importance of regulation increases when the robot takes the form of an embodied medium for AI agents, thus allowing the agent to physically interact with human beings.Footnote 90

However, a proactive strategy is hard to apply, since the technique is constantly developing. The design and manufacture of advanced robotics are difficult to regulate in depth because of the speed of technical development and the character of the relevant domain knowledge of AI.Footnote 91 The legal regulation of robots in a proactive way requires, as does the regulation of every advanced technology, a highly technical language in combination with normative standpoints. In these situations, there is a risk both that the law will become obsolete rather quickly and that technological development will be hampered, so that its benefits fail to materialize.

The regulation of robots therefore has to fall back on a reactive strategy, namely to wait and see what the consequences of the new technology will be. So far, some guiding principles have emerged. More or less all of them fall back on the “Three Laws of Robotics,” devised by the science-fiction author Isaac Asimov and introduced in 1942.Footnote 92 One of the first countries to formulate ethical principles was South Korea, with a Robot Ethics Charter from 2012. This charter was drafted in order to prevent social ills that may arise out of inadequate social measures to deal with robots in society. The Korean charter recognizes that robots may require legal protection from abusive humans, just as animals sometimes need legal protection from their owners. The charter sets up some manufacturing standards. For example, robot manufacturers must ensure that the autonomy of the robots that they design is limited; in the event that it becomes necessary, it must always be possible for a human being to assume control over a robot. Furthermore, robot manufacturers must maintain strict standards of quality control, taking all reasonable steps to ensure that the risk of death or injury to the user is minimized and the safety of the community guaranteed. Robots are granted certain rights and responsibilities.Footnote 93 Thus, a robot may not injure a human being or, through inaction, allow a human being to come to harm. A robot must obey any orders. Under Korean law, robots are afforded the following fundamental rights: (1) the right to exist without fear of injury or death; and (2) the right to live an existence free from systematic abuse.

Second among the states interested in the issue of robots is the UK. In April 2016, the British Standards Institution published a document entitled “Robots and Robotic Devices: Guide to the Ethical Design and Application of Robots and Robotic Systems.” This text has no legal value as such but sets out recommendations for robot creators, emphasizing the ethical risks associated with the development of robots, something that may indirectly have legal consequences for assessing negligence.

In the US, robots play a dominant role in the economy, particularly in the job market, to such an extent that they already pose a threat to low-skilled jobs. However, there is virtually no US law regarding the legal status of robots. The European Commission is working towards the establishment of ethical rules concerning robotics and AI “in order to exploit their full economic potential and to guarantee a standard level of safety and security.”Footnote 94 According to the report, an electronic person would potentially be any robot that makes autonomous decisions intelligently or interacts independently with third parties. However, the Commission has not yet adopted this notion of electronic personality. Furthermore, the European Economic and Social Committee (EESC) has opposed the recognition of any form of legal personality for robots. In an opinion of 4 May 2017, it considered that this would be a “threat to an open and equitable democracy.”

In February 2017, the European Parliament adopted a resolution on civil-law rules on robotics. This forms the first step in the EU law-making process. It is an official request for the Commission to submit to the European Parliament an official proposal for civil-law rules on robotics. The report contains a detailed list of recommendations on what the final civil rules should contain, including common official definitions of, among other things, autonomous systems and autonomous smart robots, a standard for manufacturing quality, rules on liability regarding robots, and laws on how robots should be studied, developed, and used.

3.2.4 Antitrust Law

AI tends to attract more and more data as it grows. At the same time, algorithms are becoming increasingly sophisticated. Those service providers who manage to take a lead in such a process tend to give rise to de facto monopolies. This is something that might collide with antitrust legislation.Footnote 95 Remedies in terms of data sharing and the division of companies have been discussed. Another challenge in relation to antitrust law is that algorithms used by net platforms may contribute to preferential treatment of specific companiesFootnote 96 through the ranking of search results on market platforms.Footnote 97 This last-mentioned example is a form of discrimination. There are many situations in which there is a risk that AI will treat individuals differently according to gender, skin colour, or ethnic background.Footnote 98 In relation to machine learning, it has become crucial not to discriminate on the basis of these variables.

Another similar example concerns the development of platforms. Algorithms also have disruptive implications in commercial contexts. During the last few decades, we have seen how old models are becoming outdated and replaced. Numerous contemporary studies have described how new business models develop as a result of digital innovations using algorithms, such as the sharing economyFootnote 99 and different platforms such as Uber,Footnote 100 Airbnb,Footnote 101 Kickstarter, Homeaway, and Booking, etc. KellyFootnote 102 has listed the 12 technological forcesFootnote 103 that are built on algorithms that, in his opinion, are likely to shape our future normative landscape. These are mainly regarded as positive for people and by people.Footnote 104

Previous research has shown how the digital technique creates new socioeconomic conditions. However, we lack knowledge on how these changes take place and, above all, we do not know their content or what the consequences will be in different respects. Knowledge about the causes requires understanding of digitization, while understanding content and consequences is related to the algorithms and what norms (algo norms) they give rise to in terms of expectation. Undeniably, these new platforms produce consumer benefits, but there is a risk, following the logic of algorithms, that those platforms that manage to conquer a market segment will strengthen their position over time. There is a strong monopoly tendency in connection with the use of algorithms.

Platforms might produce networked “market-place platforms” that, in turn, make available opportunities to buy and sell, skimming a percentage of each transaction as a provider.Footnote 105 They thereby have access to more data, more skills, and more resources than even most nation-states and can use them to perform some of the services that were once the prerogative solely of states.Footnote 106 This is particularly striking in what are probably the most sensitive sovereign domains: defence and security.Footnote 107 Intermediation platforms are now powerful economic and political entities, which sometimes act as though they are political powers that compete with governments.Footnote 108 Such intermediation platforms could soon outperform states in providing essential public services, especially in a context of shrinking public budgets. New business models and regulatory techniques have emerged, none more significant than what has been happening in the field of information technology, in which the “Big Five” (Apple, Google, Amazon, Facebook, and Microsoft) have driven the rise of algorithms in society. Facebook can see what people share, Google can see what they search for, Amazon can see what they buy, Microsoft can see where they work, and Apple can see which apps people are using and other interesting data from the Internet.

These are all examples of how the digital technique via platforms provides services that benefit consumers while at the same time controlling them and influencing their preferences. This is not an external side effect, but a direct consequence of the technique.

3.2.5 Consumer Protection

Another area that calls for regulation is consumer protection. Here, the Internet of things (IoT) represents another phenomenon with tendencies favouring consumers while also being a threat to privacy and security.Footnote 109 Some scholars claim that the IoT offers immense potential for empowering citizens, making governments transparent, and broadening information access, while others argue that the privacy threats are enormous, as is the potential for social control and political manipulation.Footnote 110

The IoT is the extension of Internet connectivity into physical devices and everyday objects. Embedded with electronics, Internet connectivity, and other forms of hardware (such as sensors), these devices can communicate and interact with others over the Internet, and they can be remotely monitored and controlled. In the consumer market, IoT technology is most synonymous with products pertaining to the concept of the “smart home,” covering devices and appliances (such as lighting fixtures, thermostats, home-security systems and cameras, and other home appliances) that support one or more common ecosystems and can be controlled via devices associated with that ecosystem, such as smartphones and smart speakers. The IoT and the related AI-based services strengthen the tendency towards providing services instead of selling things.Footnote 111

The IoT concept has faced prominent criticism, especially in regard to privacy and security concerns related to these devices and their intention of pervasive presence.Footnote 112 One of the key drivers of the IoT is data. The success of the idea of connecting devices to make them more efficient is dependent upon access to and storage and processing of data. For this purpose, companies working on the IoT collect data from multiple sources and store them in their cloud network for further processing. This leaves the door wide open for privacy and security dangers and a single-point vulnerability of multiple systems. Though still in their infancy, regulations and governance regarding these issues of privacy, security, and data ownership continue to develop.Footnote 113

3.3 Politics

Research areas relevant to address in relation to politics ought to be the questions of changed societal conditions for norm-setting power and for democracy.Footnote 114 One well-known illustrative example is Cambridge Analytica Ltd (CA), created in 2013—a privately held company that provided strategic communication to influence electoral processes. CA was a British political consulting firm that combined data mining, data brokerage, and data analysis with strategic communication during electoral processes.Footnote 115

In 2016, CA worked for Donald Trump’s presidential campaign as well as for Leave.EU (one of the organizations campaigning in the UK’s referendum on EU membership). CA’s role in those campaigns has been controversial and the subject of ongoing criminal investigations in both countries. Facebook banned CA from advertising on its platform, saying that it had been deceived.Footnote 116 On 23 March 2018, the British High Court granted the Information Commissioner’s Office a warrant to search CA’s London offices.Footnote 117

The personal data of approximately 87 million Facebook users were acquired via the 270,000 Facebook users who used a Facebook app called “This Is Your Digital Life.” When these users gave the third-party app permission to acquire their data, back in 2015, they also gave the app access to information on their friends networks. As a result, the data of about 87 million users were collected, the majority of whom had not explicitly given CA permission to access their data. The app developer breached Facebook’s terms of service by giving the data to CA.Footnote 118 On 1 May 2018, CA and its parent company filed for insolvency proceedings and closed operations.
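The scale of this amplification through friends networks can be made concrete with simple arithmetic. The sketch below uses only the two approximate figures given above. The variable names are illustrative, and the result is a rough average, not a measured property of the networks involved.

```python
app_users = 270_000          # users who used the app (figure from the text)
affected_users = 87_000_000  # approximate number of users whose data were acquired

# Average number of affected profiles per app user (rough estimate only).
profiles_per_app_user = affected_users / app_users

# Share of affected users who had used the app themselves.
direct_share = app_users / affected_users

print(f"about {profiles_per_app_user:.0f} affected profiles per app user")
print(f"about {direct_share:.2%} of affected users had used the app")
```

On these figures, each app user exposed on the order of 320 profiles, and well under 1% of the affected users had used the app themselves.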

The software made by CA is now perhaps one of the most controversial and yet most desired in the world. It collects personal data from different sources, then analyses them through the algorithms of software and predicts the behaviour of the people in advance. There is also more advanced AI that predicts and then tests with new content, evaluates reactions/interactions (reposts, likes, etc.), and measures again until confidence is achieved in screening or set interactions are achieved (e.g. a purchase). Based on that analysis, it has the potential of working with governments, militaries, intelligence agencies, or politicians to run social campaigns, plot defence strategies, or spread propaganda about the desired outcome.

The capacity of a democracy to decide effectively on public matters will depend critically upon the architecture of the media and the capacity of norms to direct the public’s attention to political issues. To the extent that the business model of private media favours polarization, this presents a challenge for democratic deliberation. The information and culture that we take part in today are determined to a large extent by algorithms designed with the help of big data. The relationship between media- and Internet-based algorithms will be crucial to understanding exposure to political questions.Footnote 119

The role that technology plays as the new norm setter in relation to the traditional norm-setting powers within politics and law is an important research area, as well as how democracy is affected by digital technology. In the survey mentioned earlier, more than half of the respondents (54%) answered that they think that AI would make decisions that are as good as or better than those of politicians, which must be regarded as an interesting result, and something that has to be followed up on in further and deeper research. The transfer of regulatory power from politics and law to digital techniques and algorithms pushes in this direction in a double way: directly by the increased use of AI and algorithms as norms and indirectly by the weakening of the nation-state due to digital technology from a broader perspective.Footnote 120 The nation-state is exposed to challenges from private companies.Footnote 121 Both these aspects ought to be taken into account in empirical work.

3.4 Future Challenges

New technology seems at the same time scary and promising. At the outset of industrial society and the development of physical means of communication such as railways and trains, a man with a red flag had, in certain situations, to walk in front of the train in order to prevent accidents.Footnote 122 The current technological development will probably leave similar kinds of experiences, which, in light of history, will appear equally remarkable and backward.

Using norm science and the study of algo norms, it is possible to grasp the consequences of digital technology and algorithms and how these increasingly regulate people’s everyday lives. In this way, it is possible to lay the foundation for an assessment of digital technology and AI in different contexts, whether they are regarded as good or bad, and whether they are regarded as providing people with a better life or not. One specific research task is to study how digital technology and algorithms tend to weaken the nation-state. From a long-term perspective, both the direct and indirect effects of digitization contribute to the regulatory power in society moving from politics and law to technicians and AI. Highlighting these tendencies in society from a global perspective seems to be an important research task. This requires scientific innovations, which can be realized through the development of norm science.

There are three main challenges for digital development in relation to AI and algorithms. These are (1) the energy costs, (2) the singularity point, and (3) the governance problems. The first two challenges are of a general character and will be mentioned briefly, since they affect the governance problems. The third challenge is of a socio-legal character; I have elaborated on it above in different contexts and summarize it here.

(1) A huge problem with AI, which has to be solved in the near future in one way or another—if possible at all—is the energy costs and carbon emissions from machine learning. Training a single AI model can emit as much carbon as five cars in their lifetimes.Footnote 123 Recent progress in the hardware and methodology for training neural networks has ushered in a new generation of large networks trained on abundant data. These models have obtained notable gains in accuracy, for instance across many NLP tasks. However, these accuracy improvements depend on the availability of exceptionally large computational resources that necessitate similarly substantial energy consumption. As a result, these models are costly to train and develop, both financially, due to the cost of hardware and electricity or cloud-compute time, and environmentally, due to the carbon footprint required to fuel modern processing hardware.Footnote 124

Research reported by MIT Technology Review specifically examines the model-training process for NLP—the subfield of AI that focuses on teaching machines to handle human language. In the last two years, the NLP community has reached several noteworthy performance milestones in machine translation, sentence completion, and other standard benchmarking tasks. However, such advances have required training ever-larger models on sprawling data sets of sentences scraped from the Internet. The approach is computationally expensive—and highly energy-intensive.Footnote 125

The researchers found that the computational and environmental costs of training grew proportionally to model size and then exploded when additional tuning steps were used to increase the model’s final accuracy.Footnote 126 In particular, they found that a tuning process, known as a neural-architecture search, which tries to optimize a model by incrementally tweaking a neural network’s design through exhaustive trial and error, had extraordinarily high associated costs for little performance benefit.

(2) From a long-term perspective, we face a phenomenon called the singularity, which, according to some researchers, is a hypothetical dystopian future point in time at which technological growth might become uncontrollable and irreversible, resulting in unfathomable changes to human civilization.Footnote 127 The technological singularity is the moment at which AI surpasses the abilities of the human brain,Footnote 128 a moment after which, according to technology prophets, AI machines immediately go on to create ever more technology with ever more advanced intellectual functions.Footnote 129 This does not mean a new industrial revolution, but a total transformation of society that challenges man’s domination of the world.

The author and futurologist Ray Kurzweil predicts that 2029 will be the year in which AI achieves human levels of intelligence and 2045 the year of the singularity, reached by merging human intelligence with the AI that we have created.Footnote 130

(3) The governance problem in relation to AI is the question of whether to pursue a proactive or a reactive regulation strategy. In order to approach this problem, a new research agenda is required that departs from social science based on the industrial model of society. In the future, whether we like it or not, it seems that it will no longer be politicians, through laws, or ordinary people, via social norms, who determine the preferences of society, but instead technicians and markets in interplay. There are many indications of an economic influence on the development of AI.Footnote 131

Market forces are the main allies of algo norms and AI. If one tries to trace the driving forces behind AI development, the economic system would, as in almost any other societal issue, provide the answer. Is it possible from a proactive perspective to influence this development? That would require research on AI that makes visible and articulates the driving forces behind the content of these norms and their hidden preferences; even then, the development would be hard to influence proactively. Since we lack, at least for the time being, knowledge about the effects of new digital techniques in different societal respects, all that remains for now is to stick to a strategy of trial and error, that is, to wait and see what the consequences are and, after that, to make decisions about preventive actions.

At the same time, we are aware that, once AI is released, it might be too late to intervene. This calls for an ex ante strategy. A unique feature of AI as a regulatory problem is its capacity for self-reproduction even without human involvement. AI represents not only a system that reproduces and maintains itself; it goes a step further by being able to develop itself in a kind of autonomous process and turn into something else. As a consequence, we are caught in a dilemma between a desirable proactive strategy and a factual reactive one. Misinformation, threats, fake news, and manipulation have to be combated. Warning bells, in the form of statistical engines that dynamically and with the help of AI collect data across platforms, could raise an alert if a system grows so large that it threatens institutions in society. In any case, something has to be done. Otherwise, new techniques will take the upper hand and primarily benefit huge companies and totalitarian states.

We face a situation in which we are as vulnerable as indigenous peoples were during colonization, when foreign powers came with firearms and armour while those who lived there had only bows and arrows with which to defend themselves. It seems as though nobody owns the questions of where technological development should lead us and what technology should be allowed to do. We have no idea or vision of what kind of society we expect in the future, what we want to defend, or which institutions will be sustainable. Under such circumstances, the playing field is open for misuse of different kinds. This situation calls for a radically new approach to politics, law, and society.